import warnings

import time

import math

from pathlib import Path

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from cycler import cycler

from numba import njit, prange

from scipy.stats import norm

import jax

import jax.numpy as jnp

from jax import grad, vmap

from IPython.display import display

import quantfinlab.fixed_income as fi

warnings.filterwarnings("ignore")

pd.set_option("display.float_format", lambda x: f"{x:,.6f}")

palette = [

"#069af3", "#fe420f", "#00008b", "#008080", "#800080", "#7bc8f6", "#0072b2", "#04d8b2",

"#cc79a7", "#ff8072", "#9614fa", "#dc143c",

]

plt.rcParams["axes.prop_cycle"] = cycler(color=palette)

plt.rcParams.update({

"figure.dpi": 150,

"savefig.dpi": 300,

"axes.grid": True,

"grid.alpha": 0.20,

"axes.spines.top": False,

"axes.spines.right": False,

"axes.titlesize": 11,

"axes.labelsize": 11,

"xtick.labelsize": 9,

"ytick.labelsize": 9,

"legend.fontsize": 8,

})

seed = 7

np.random.seed(seed)

4. black–scholes, implied volatility, greeks, arbitrage diagnostics, and hedging

This is one of the most important projects in this series and the pillar for lots of future projects. In this notebook we will get into some of the most important topics including: - stochastic processes - risk-neutral pricing - the Black–Scholes–Merton model - implied volatility with two different solvers - Greeks with analytic and autodiff approaches - market-quality diagnostics (Dispersion, No-arb) - hedging Greeks with SPY

imports and plotting style

1. Introduction to Options

An option is a financial derivative whose value depends on an underlying asset such as a stock, index, or ETF. The key feature of an option is that it gives the holder a right, but not an obligation, to buy or sell the underlying asset at a predetermined price.

1.1) Call Options: The Right to Buy

A call option gives the buyer the right to buy the underlying asset at a specified price called the strike price (\(K\)) at or before a specified date called the maturity or expiration date (\(T\)).

How it works in practice:

Suppose a stock is currently trading at 100. You believe that in 3 months, the price will rise above 120. You have two choices:

Choice 1: Buy the stock directly - You pay $100 now - If the stock rises to$140 in 3 months, you profit 40 - If the stock falls to 80 in 3 months, you lose 20 - Your potential loss is substantial (could lose most of your investment if stock crashes)

Choice 2: Buy a call option - You pay a premium (option price) of, say, $5 to the seller - You choose a strike price \(K = 120\) and maturity \(T = 3\) months - The seller agrees to give you the right to buy the stock at $120 in 3 months - Both the option premium (5) and strike price (120) are determined and agreed upon when you buy the option

At maturity (3 months later), you have a choice:

- If stock price is 140 (above strike \(K = 120\)):

- You exercise your right and buy at 120

- You immediately have an asset worth 140

- Your profit = 140 - 120 - 5 (premium) = 15

- If stock price is 95 (below strike \(K = 120\)):

- You don’t exercise (why buy at 120 when market price is 95?)

- You lose only the premium: 5

- This is your maximum possible loss, regardless of how low the stock goes

- If stock price is 65 (far below strike):

- You still don’t exercise

- You still lose only the premium: 5

- Whether the stock is at 95, 65, or even 10, your loss is capped at 5

This is the power of options for hedging: Your downside risk is limited to the premium you paid, while your upside potential is unlimited (stock could go to $200, $300, etc.).

Mathematical representation of call option payoff:

At maturity \(T\), if the stock price is \(S_T\), your payoff is:

\[\text{Call Payoff} = \max(S_T - K, 0)\]

This means: - If \(S_T > K\): You exercise and get \(S_T - K\) - If \(S_T \leq K\): You don’t exercise and get \(0\)

Your net profit is:

\[\text{Call Profit} = \max(S_T - K, 0) - C_0\]

where \(C_0\) is the premium you paid initially (the $5 in our example).

1.2 The Other Side: Selling Call Options

When you sell (write) a call option, you are on the opposite side of the transaction:

- You receive the premium ($5) upfront

- You are obligated to sell the stock at strike price \(K\) if the buyer exercises

Payoff structure for the seller:

- If stock price is 95 (below strike \(K = 120\)):

- Buyer doesn’t exercise

- You keep the premium: profit = 5

- This is your maximum profit

- If stock price is 140 (above strike \(K = 120\)):

- Buyer exercises

- You must sell stock at 120 (even though it’s worth 140)

- Your loss = 140 - 120 = 20

- Net loss = 20 - 5 (premium) = 15

- If stock price is 160:

- Your loss = 160 - 120 = 40

- Net loss = 40 - 5 = 35

- If stock price is 200:

- Your loss = 200 - 120 = 80

- Net loss = 80 - 5 = 75

Key insight: As a call option seller, your profit is capped at the premium, but your potential loss is unlimited.

Why would anyone sell call options? We’ll explore option strategies later, but briefly: sellers can use this for income generation (collecting premiums) or as part of hedging strategies (e.g., covered calls where you already own the stock).

1.3 Put Options: The Right to Sell

A put option gives the buyer the right to sell the underlying asset at the strike price \(K\) at or before maturity \(T\). This is exactly the opposite of a call option.

How it works in practice:

Suppose a stock is currently trading at 100. You believe the price will fall below 80 in 3 months.

Buy a put option: - You pay a premium of, say, 4 - You choose strike price \(K = 80\) and maturity \(T = 3\) months - The seller agrees to give you the right to sell the stock at 80 in 3 months

At maturity:

- If stock price is 60 (below strike \(K = 80\)):

- You exercise your right and sell at 80

- The stock is only worth $60 in the market

- Your profit = 80 - 60 - 4 (premium) = 16

- If stock price is 95 (above strike \(K = 80\)):

- You don’t exercise (why sell at 80 when market price is 95?)

- You lose only the premium: 4

put option payoff:

At maturity \(T\), your payoff is:

\[\text{Put Payoff} = \max(K - S_T, 0)\]

This means: - If \(S_T < K\): You exercise and get \(K - S_T\) - If \(S_T \geq K\): You don’t exercise and get \(0\)

Your net profit is:

\[\text{Put Profit} = \max(K - S_T, 0) - P_0\]

where \(P_0\) is the premium you paid initially.

Use case for put options: Put options are commonly used for portfolio protection. If you own a stock and worry about a price decline, you can buy a put option as insurance. If the stock falls, your put option gains value and offsets the loss in your stock position.

1.4 Selling Put Options

When you sell (write) a put option:

- You receive the premium upfront

- You are obligated to buy the stock at strike price \(K\) if the buyer exercises

Maximum loss for put seller: Unlike call sellers (unlimited loss), put sellers have a maximum loss of \(K - P_0\) (if stock goes to zero).

1.5 European vs American Options

European Options: - Can only be exercised at maturity date \(T\) - You cannot exercise early, even if it would be profitable - Simpler to analyze and price mathematically - Most theoretical models (like Black-Scholes) assume European options

For example, you buy a European call with strike \(K = 100\) and maturity in 3 months. After 1 month, the stock price jumps to $150. Even though exercising now would be profitable, you cannot. You must wait until the 3-month maturity date.

American Options: - Can be exercised at any time up to and including maturity date \(T\) - Provides more flexibility to the holder - Always worth at least as much as a European option - More complex to price

If you buy an American call with strike \(K = 100\) and maturity in 3 months and After 1 month, the stock price jumps to $150, You can choose to exercise immediately and lock in your profit, or wait and see if the price goes even higher.

For call options on non-dividend-paying stocks, Early exercise is never optimal.Because: - By holding the option, you maintain the right to buy later - You delay paying the strike price \(K\) (time value of money) - You maintain protection against downside (if stock crashes, you don’t exercise)

For call options on dividend-paying stocks, early exercise might be optimal just before a large dividend payment.

For put options, Early exercise can be optimal even without dividends. If the stock price falls very low, the time value of money makes it better to exercise early and receive the cash \((K - S)\) now rather than later.

Pricing difference: American options are harder to price because we must consider all possible exercise times. We will get to them in later projects.

| Option Type | Right | Profit When | Max Profit | Max Loss |

|---|---|---|---|---|

| Long Call | Buy at \(K\) | Price rises | Unlimited | Premium paid |

| Short Call | Obligated to sell at \(K\) | Price falls | Premium received | Unlimited |

| Long Put | Sell at \(K\) | Price falls | \(K\) - Premium | Premium paid |

| Short Put | Obligated to buy at \(K\) | Price rises | Premium received | \(K\) - Premium |

1.6 No-Arbitrage Principle

Arbitrage is a risk-free profit with zero initial investment. You make money without taking any risk. In efficient markets, such opportunities should not exist — or if they do, they disappear almost instantly.

Suppose a stock trades at \(\$100\) on Exchange A and \(\$102\) on Exchange B at the same time. You could:

- Buy the stock on Exchange A for \(\$100\)

- Sell it on Exchange B for \(\$102\)

- Make \(\$2\) profit with zero risk

This is arbitrage. In practice, traders exploit these gaps so quickly that prices realign almost immediately.

The no-arbitrage principle is the foundation of all derivatives pricing. The core idea is:

Two portfolios that produce the same payoff in every future state must have the same price today.

1.7 Put-Call Parity

If we have two European options, a call and a put on the same underlying stock \(S\), with same strike price \(K\) and same maturity \(T\)

We build two portfolios and show they produce the exact same payoff at expiration. By no-arbitrage, they must have the same price today.

Portfolio A: Buy one call + invest \(Ke^{-rT}\) in a risk-free bond (grows to \(K\) at time \(T\))

Portfolio B: Buy one put + buy one share of stock at \(S_0\)

At time \(T\), the stock price is \(S_T\). There are two cases:

| \(S_T > K\) | \(S_T \leq K\) | |

|---|---|---|

| Portfolio A | \((S_T - K) + K = S_T\) | \(0 + K = K\) |

| Portfolio B | \(0 + S_T = S_T\) | \((K - S_T) + S_T = K\) |

Since both portfolios have identical payoffs, no-arbitrage forces them to have the same price today:

\[C + Ke^{-rT} = P + S_0\]

Rearranging:

\[C - P = S_0 - Ke^{-rT}\]

where: - \(C\) = European call price - \(P\) = European put price - \(S_0\) = current stock price - \(K\) = strike price - \(r\) = risk-free rate - \(T\) = time to maturity

Moneyness: ITM, ATM, and OTM

Moneyness describes the relationship between the current stock price \(S\) and the option’s strike price \(K\). It tells you whether exercising the option right now would be profitable, break-even, or a loss.

In-the-Money (ITM): - Call: \(S > K\). The stock price is above the strike, so exercising gives you immediate profit - Put: \(S < K\). The stock price is below the strike, so exercising gives you immediate profit

At-the-Money (ATM): - Call or Put: \(S \approx K\). The stock price is roughly equal to the strike

Out-of-the-Money (OTM): - Call: \(S < K\). The stock price is below the strike, exercising would be a loss - Put: \(S > K\). The stock price is above the strike, exercising would be a loss

Log Moneyness

Instead of comparing \(S\) and \(K\) directly, we often use log moneyness:

\[m = \ln\left(\frac{S}{K}\right)\]

or sometimes normalized by volatility and time:

\[m = \frac{\ln(S/K)}{\sigma\sqrt{T}}\]

| Regime | Call Condition | Put Condition | Log-moneyness \(m\) |

|---|---|---|---|

| Deep ITM | \(S \gg K\) | \(K \gg S\) | \(m \ll -0.1\) (call) |

| ITM | \(S > K\) | \(K > S\) | \(-0.1 < m < -0.02\) |

| ATM | \(S \approx K\) | \(S \approx K\) | \(\lvert m \rvert < 0.02\) |

| OTM | \(S < K\) | \(K < S\) | \(0.02 < m < 0.1\) |

| Deep OTM | \(S \ll K\) | \(K \ll S\) | \(m \gg 0.1\) |

2) Data

The project uses four main data sources: - an SPX option chain (can download SPX EOD option chains from 2022 and 2023 from here for free) - a risk-free rate curve (We use treasury par yields which we used in the notebook 01) - SPY daily data and SPX daily data from yahoo finance API

The option chain contains call and put bid/ask quotes across strikes and expiries.

Options expire at specific calendar dates, and quotes arrive at specific quote times. The time to maturity is therefore not just a rough day count. It is computed as a continuous fraction of a year:

\(\tau=(\text{expiry} - \text{quote})/(365.25×24×3600)\)

This matters because option pricing is highly sensitive to maturity, especially for near-dated options.

For each option we compute: - the mid price - the bid-ask spread - the relative spread The mid price is \[m=(b+a)/2\]

where \(b\) is the bid and \(a\) is the ask. spread is \(a-b\).

And the relative spread is \((a-b)/m\)

The mid price is often used as the best single-point estimate of the tradable fair price, while the spread is a direct measure of liquidity and uncertainty. If the spread is wide, then the market itself is telling us that valuation is noisy.

We also build a simple liquidity proxy from volume and spread. This is economically useful because when multiple strikes are available, a practitioner usually prefers the cleaner, tighter, more active quotes. A hedge or pricing result based on illiquid quotes can look mathematically elegant but be practically useless.

Later sections need a dataset where calls and puts can be compared at the same strike and expiry. That is why the raw chain is cleaned and reshaped so the call and put information can be paired. This pairing is essential for put–call parity and for extracting the forward price from observed option prices.

chain_cols = [

"quote_date",

"quote_readtime",

"expire_date",

"underlying_last",

"strike",

"c_bid",

"c_ask",

"p_bid",

"p_ask",

"c_volume",

"p_volume",

]

df_chain = pd.read_parquet("../data/spx_options_chain_202201_202312.parquet", columns=chain_cols)

df_rates = pd.read_csv("../data/par-yield-curve-rates-1990-2026.csv")

df_spy = pd.read_csv("../data/spy_yfinance.csv")

df_spx = pd.read_csv("../data/spx_daily_yfinance.csv")

for col in ["quote_date", "quote_readtime", "expire_date"]:

df_chain[col] = pd.to_datetime(df_chain[col], errors="coerce")

df_chain["trade_date"] = df_chain["quote_date"].dt.normalize()

df_chain["quote_datetime"] = pd.to_datetime(df_chain["quote_readtime"], errors="coerce")

df_chain["expiry_datetime"] = df_chain["expire_date"].dt.normalize()

df_chain["tau"] = (df_chain["expiry_datetime"] - df_chain["quote_datetime"]).dt.total_seconds() / (365.25 * 24 * 3600)

df_chain["s"] = pd.to_numeric(df_chain["underlying_last"], errors="coerce")

df_chain["strike"] = pd.to_numeric(df_chain["strike"], errors="coerce")

for col in ["c_bid", "c_ask", "p_bid", "p_ask", "c_volume", "p_volume"]:

df_chain[col] = pd.to_numeric(df_chain[col], errors="coerce")

df_chain["c_mid"] = 0.5 * (df_chain["c_bid"] + df_chain["c_ask"])

df_chain["p_mid"] = 0.5 * (df_chain["p_bid"] + df_chain["p_ask"])

df_chain["c_spread"] = df_chain["c_ask"] - df_chain["c_bid"]

df_chain["p_spread"] = df_chain["p_ask"] - df_chain["p_bid"]

df_chain["c_rel_spread"] = df_chain["c_spread"] / df_chain["c_mid"]

df_chain["p_rel_spread"] = df_chain["p_spread"] / df_chain["p_mid"]

df_chain["volume_sum"] = df_chain["c_volume"].fillna(0.0) + df_chain["p_volume"].fillna(0.0)

df_chain["spread_sum"] = df_chain["c_spread"] + df_chain["p_spread"]

df_chain["liq_score"] = np.sqrt(df_chain["volume_sum"] + 1.0) / (df_chain["spread_sum"] + 1e-4)

valid_quote_mask = (

(df_chain["c_bid"] > 0)

& (df_chain["c_ask"] >= df_chain["c_bid"])

& (df_chain["p_bid"] > 0)

& (df_chain["p_ask"] >= df_chain["p_bid"])

& (df_chain["c_mid"] > 0)

& (df_chain["p_mid"] > 0)

& (df_chain["tau"] > 0)

& (df_chain["s"] > 0)

& (df_chain["strike"] > 0)

& df_chain["trade_date"].notna()

& df_chain["expiry_datetime"].notna()

)

liq_core = (

(df_chain["c_rel_spread"] <= 0.20)

& (df_chain["p_rel_spread"] <= 0.20)

& (df_chain["tau"] >= 7.0 / 365.25)

& (df_chain["tau"] <= 120.0 / 365.25)

)

df_pairs = df_chain.loc[valid_quote_mask & liq_core].copy()

df_pairs["k_over_s"] = df_pairs["strike"] / np.clip(df_pairs["s"], 1e-12, None)

df_pairs = df_pairs[(df_pairs["k_over_s"] >= 0.85) & (df_pairs["k_over_s"] <= 1.15)].copy()

df_pairs["atm_dist_s"] = np.abs(np.log(df_pairs["k_over_s"]))

df_pairs["atm_rank"] = df_pairs.groupby(["trade_date", "expiry_datetime"])["atm_dist_s"].rank(method="first", ascending=True)

df_pairs = df_pairs[df_pairs["atm_rank"] <= 25].copy()

df_pairs = df_pairs.groupby(["trade_date", "expiry_datetime"], group_keys=False).filter(lambda g: len(g) >= 10)

df_pairs["pair_id"] = df_pairs["expiry_datetime"].dt.strftime("%Y-%m-%d") + "_" + df_pairs["strike"].round(4).astype(str)

df_spy.columns = [c.lower().strip() for c in df_spy.columns]

df_spy["trade_date"] = pd.to_datetime(df_spy["date"], errors="coerce").dt.normalize()

df_spy["close"] = pd.to_numeric(df_spy["close"], errors="coerce")

df_spy["dividend"] = pd.to_numeric(df_spy["dividends"], errors="coerce")

df_spy = df_spy[["trade_date", "close", "dividend"]].dropna().sort_values("trade_date").reset_index(drop=True)

df_spx.columns = [c.lower().strip() for c in df_spx.columns]

df_spx["trade_date"] = pd.to_datetime(df_spx["date"], errors="coerce").dt.normalize()

df_spx["close"] = pd.to_numeric(df_spx["close"], errors="coerce")

df_spx = df_spx[["trade_date", "close"]].dropna().sort_values("trade_date").reset_index(drop=True)

print("df_pairs shape:", df_pairs.shape)

print("n trade_date:", df_pairs["trade_date"].nunique(), " | n (trade_date, expiry):", df_pairs.groupby(["trade_date", "expiry_datetime"]).ngroups)df_pairs shape: (299214, 29)

n trade_date: 505 | n (trade_date, expiry): 119693. Stochastic Processes

To price options, we need to model how stock prices evolve over time. Stock prices are random and unpredictable, so we use stochastic processes (random processes that evolve over time).

A stochastic process is a collection of random variables indexed by time: \(\{X_t : t \geq 0\}\). It’s more like a random path through time:

- At each time \(t\), \(X_t\) is a random variable

- The entire path \(\{X_0, X_1, X_2, \ldots\}\) or \(\{X_t : t \geq 0\}\) is the stochastic process

Stock prices over time can follow a stochastic process \(\{S_t : t \geq 0\}\)

3.1 Random Walk: The Discrete-Time Foundation

A random walk is the simplest stochastic process, defined in discrete time steps.

Simple random walk:

\[S_n = S_0 + \sum_{i=1}^{n} \epsilon_i\]

- \(S_0\) is the starting value

- \(\epsilon_i\) are independent random steps

- Typically \(\epsilon_i = +1\) or \(-1\) with equal probability \(\frac{1}{2}\)

Example: Imagine flipping a coin repeatedly: - Heads: move up one step (\(\epsilon_i = +1\)) - Tails: move down one step (\(\epsilon_i = -1\)) - Start at \(S_0 = 0\)

After 4 flips: H, T, H, H - \(S_1 = 0 + 1 = 1\) - \(S_2 = 1 - 1 = 0\) - \(S_3 = 0 + 1 = 1\) - \(S_4 = 1 + 1 = 2\)

Properties of random walk:

Expected value: If \(E[\epsilon_i] = 0\) (symmetric steps), then: \[E[S_n] = S_0\] The expected position is where you started.

Variance: If \(\text{Var}(\epsilon_i) = \sigma^2\), then: \[\text{Var}(S_n) = n\sigma^2\] Variance grows linearly with time. The longer you walk, the more spread out possible positions become.

Non-stationary: The distribution of \(S_n\) changes with \(n\). As time increases, the random walk spreads out more and more.

Independent increments: The change from time \(s\) to \(t\) is independent of the change from time \(u\) to \(v\) if the intervals don’t overlap.

Random walk with drift:

\[S_n = S_0 + n\mu + \sum_{i=1}^{n} \epsilon_i\]

where \(\mu\) is the drift (average step size per period).

Now: - \(E[S_n] = S_0 + n\mu\) (expected value grows linearly) - \(\text{Var}(S_n) = n\sigma^2\) (variance still grows linearly)

random walks are the discrete-time foundation for Brownian motion, which we use to model stock prices.

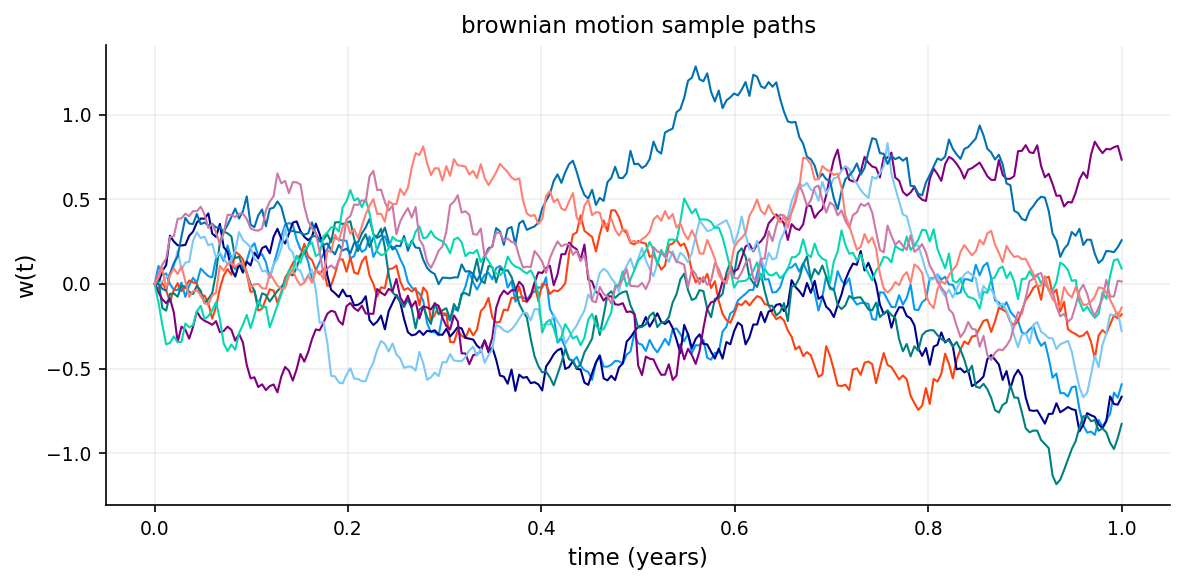

3.2 Brownian Motion (Wiener Process): Continuous-Time Random Walk

Brownian motion (also called a Wiener process) is the continuous-time limit of a random walk.

A stochastic process \(W_t\) is a Brownian motion if:

Starts at zero: \(W_0 = 0\)

Independent increments: For any times \(0 \leq t_1 < t_2 < t_3 < t_4\), the increments \(W_{t_2} - W_{t_1}\) and \(W_{t_4} - W_{t_3}\) are independent.

Stationary increments: The distribution of \(W_t - W_s\) depends only on the time difference \(t - s\), not on the specific times \(s\) and \(t\).

Normal increments: For any \(t > s\): \[W_t - W_s \sim N(0, t - s)\] The increment is normally distributed with mean \(0\) and variance \(t - s\).

Continuous paths: \(W_t\) is continuous in \(t\) (no jumps).

Non-differentiable: Despite being continuous, Brownian motion is nowhere differentiable (extremely jagged)

- \(E[W_t] = 0\) for all \(t\)

- \(\text{Var}(W_t) = t\) (variance equals time)

- \(W_t \sim N(0, t)\) (Brownian motion at time \(t\) is normally distributed)

- \(\text{Cov}(W_s, W_t) = s\) For \(s < t\)

- For \(t > s\): \(W_t - W_s \sim N(0, t-s)\)

Brownian motion with drift:

\[X_t = \mu t + \sigma W_t\]

where: - \(\mu\) is the drift rate (average velocity) - \(\sigma\) is the volatility (standard deviation per unit time) - \(W_t\) is standard Brownian motion

Properties: - \(E[X_t] = \mu t\) - \(\text{Var}(X_t) = \sigma^2 t\) - \(X_t \sim N(\mu t, \sigma^2 t)\)

Stock price changes over short time intervals are approximately normally distributed and independent, which matches the properties of Brownian motion.

At each instant, the process makes a small random jump up or down. Over a time interval of length \(t\), these jumps accumulate to give a normal distribution with variance \(t\).

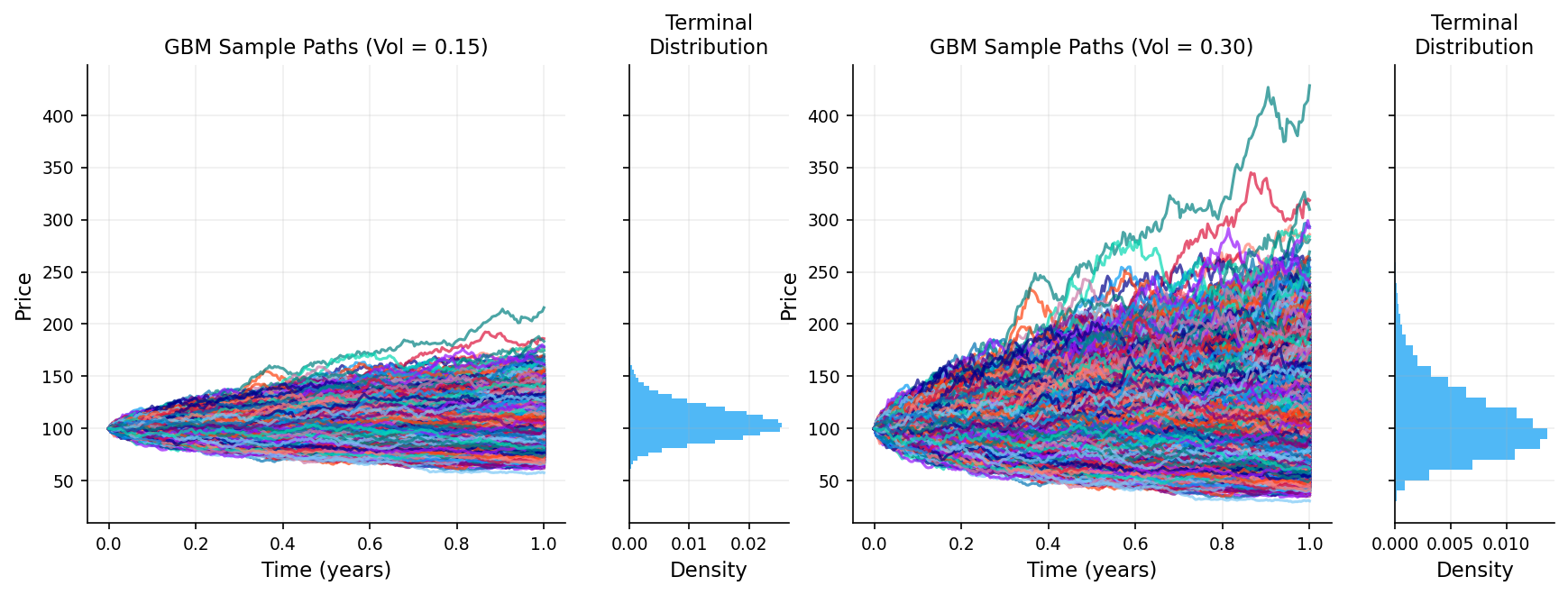

3.3 Geometric Brownian Motion (GBM)

Brownian motion itself can go negative, stock prices cannot. We need a model that: - Is always positive - Captures percentage returns (not absolute changes) - Has randomness

We can use Geometric Brownian Motion solves this. A stock price \(S_t\) follows GBM if:

\[dS_t = \mu S_t \, dt + \sigma S_t \, dW_t\]

where: - \(\mu\) is the drift (expected return rate) - \(\sigma\) is the volatility - \(W_t\) is a standard Brownian motion - \(dW_t\) is a small random change in Brownian motion

The change in stock price \(dS_t\) has two components:

- Deterministic drift: \(\mu S_t \, dt\)

- This is the expected growth

- If \(\mu = 0.10\) (10% annual return), the stock tends to grow at 10% per year

- Random fluctuation: \(\sigma S_t \, dW_t\)

- This is the unpredictable part

- \(\sigma\) measures how volatile the stock is and how much moves are expected to be

Solution to the GBM equation:

Using Itô’s lemma, we can solve this stochastic differential equation:

\[S_t = S_0 \exp\left[\left(\mu - \frac{\sigma^2}{2}\right)t + \sigma W_t\right]\]

where \(S_0\) is the initial stock price. Because of the exponential form, \(S_t > 0\) always (stock prices stay positive).

Distribution of \(S_t\):

Since \(W_t \sim N(0, t)\), we have:

\[\ln\left(\frac{S_t}{S_0}\right) \sim N\left[\left(\mu - \frac{\sigma^2}{2}\right)t, \sigma^2 t\right]\]

This means \(S_t\) follows a log-normal distribution: - \(E[S_t] = S_0 e^{\mu t}\) (expected value grows exponentially) - \(\text{Var}(S_t) = S_0^2 e^{2\mu t}(e^{\sigma^2 t} - 1)\) (variance also grows)

The term \(-\frac{\sigma^2}{2}\) is called the Itô correction or convexity adjustment. It arises from Itô’s lemma and ensures that \(E[S_t] = S_0 e^{\mu t}\). Without it, the expected value would be wrong due to the nonlinearity of the exponential function.

3.4 Itô’s Lemma

Itô’s lemma tells us how to differentiate functions of stochastic processes.

If \(X_t\) follows the stochastic differential equation:

\[dX_t = \mu(X_t, t) \, dt + \sigma(X_t, t) \, dW_t\]

and \(f(X_t, t)\) is a twice-differentiable function, then:

\[df = \left(\frac{\partial f}{\partial t} + \mu \frac{\partial f}{\partial x} + \frac{1}{2}\sigma^2 \frac{\partial^2 f}{\partial x^2}\right) dt + \sigma \frac{\partial f}{\partial x} \, dW_t\]

Key difference from ordinary calculus is that The extra term \(\frac{1}{2}\sigma^2 \frac{\partial^2 f}{\partial x^2}\) appears because \((dW_t)^2 = dt\) in stochastic calculus.

Example: Deriving the GBM solution

Starting with: \[dS_t = \mu S_t \, dt + \sigma S_t \, dW_t\]

If \(f(S_t, t) = \ln(S_t)\). Then:

\[\frac{\partial f}{\partial t} = 0 \qquad \frac{\partial f}{\partial S} = \frac{1}{S} \qquad \frac{\partial^2 f}{\partial S^2} = -\frac{1}{S^2}\]

Applying Itô’s lemma:

\[d(\ln S_t) = \left(0 + \mu S_t \cdot \frac{1}{S_t} + \frac{1}{2}\sigma^2 S_t^2 \cdot \left(-\frac{1}{S_t^2}\right)\right) dt + \sigma S_t \cdot \frac{1}{S_t} \, dW_t\]

\[d(\ln S_t) = \left(\mu - \frac{\sigma^2}{2}\right) dt + \sigma \, dW_t\]

Integrating from \(0\) to \(t\):

\[\ln S_t - \ln S_0 = \left(\mu - \frac{\sigma^2}{2}\right)t + \sigma W_t\]

\[S_t = S_0 \exp\left[\left(\mu - \frac{\sigma^2}{2}\right)t + \sigma W_t\right]\]

This is the solution we stated earlier. This is how we model price of an underlying asset for option pricing.

A key detail in this model is \(\sigma\). It shows us how much volatile we expect the process to be which is one of the most unknown details of option pricing and there are so many ways to model the volatility which we get to them later.

n_steps = 252

n_paths = 20000

dt = 1.0 / n_steps

t = np.linspace(0.0, 1.0, n_steps + 1)

z = np.random.normal(size=(n_steps, n_paths))

w = np.vstack([np.zeros(n_paths), np.cumsum(np.sqrt(dt) * z, axis=0)])

s0 = 100.0

mu = 0.06

sig_low = 0.15

sig_high = 0.30

gbm_low = s0 * np.exp((mu - 0.5 * sig_low * sig_low) * t[:, None] + sig_low * w)

gbm_high = s0 * np.exp((mu - 0.5 * sig_high * sig_high) * t[:, None] + sig_high * w)

fig, ax = plt.subplots(figsize=(8, 4))

ax.plot(t, w[:, :10], lw=1.0)

ax.set_title("brownian motion sample paths")

ax.set_xlabel("time (years)")

ax.set_ylabel("w(t)")

plt.tight_layout()

plt.show()

fig = plt.figure(figsize=(14, 4.4))

gs = fig.add_gridspec(1, 4, width_ratios=[3, 1, 3, 1], hspace=0.3)

ax1 = fig.add_subplot(gs[0, 0])

ax2 = fig.add_subplot(gs[0, 1], sharey=ax1)

ax3 = fig.add_subplot(gs[0, 2], sharey=ax1)

ax4 = fig.add_subplot(gs[0, 3], sharey=ax3)

ax1.plot(t, gbm_low, alpha=0.7)

ax1.set_title(f"GBM Sample Paths (Vol = 0.15)")

ax1.set_xlabel("Time (years)")

ax1.set_ylabel("Price")

ax2.hist(gbm_low[-1, :], bins=40, orientation='horizontal', density=True, alpha=0.7)

ax2.set_xlabel("Density")

ax2.set_title("Terminal\nDistribution")

ax2.tick_params(labelleft=False)

ax3.plot(t, gbm_high, alpha=0.7)

ax3.set_title(f"GBM Sample Paths (Vol = 0.30)")

ax3.set_xlabel("Time (years)")

ax3.set_ylabel("Price")

ax4.hist(gbm_high[-1, :], bins=40, orientation='horizontal', density=True, alpha=0.7)

ax4.set_xlabel("Density")

ax4.set_title("Terminal\nDistribution")

ax4.tick_params(labelleft=False)

plt.tight_layout()

plt.show()

4. The Black-Scholes Model

4.1 Risk-Neutral Pricing

Different investors have different risk appetites. Some are aggressive, some are conservative. If the price of an option depends on how much risk people are willing to take, then every investor would assign a different price to the same option.

In a risk-neutral world, all investors are indifferent to risk. They don’t demand extra return for taking on risk. In such a world: - All assets grow at the risk-free rate \(r\) (not their actual expected return \(\mu\)) - We can price derivatives by taking expected payoffs and discounting at rate \(r\)

Even though the real world is not risk-neutral, we can price options as if it were.

Risk-neutral pricing formula:

\[C(S_0, 0) = e^{-rT} E^Q[\max(S_T - K, 0)]\]

where: - \(E^Q\) denotes expectation under the risk-neutral measure (the “Q” measure) - Under this measure, \(S_t\) grows at rate \(r\) instead of \(\mu\):

\[S_T = S_0 \exp\left[\left(r - \frac{\sigma^2}{2}\right)T + \sigma W_T\right]\]

Hedging with options doesn’t depend on \(\mu\), only on \(\sigma\). So the price is the same whether we use the real-world measure (with \(\mu\)) or the risk-neutral measure (with \(r\)). So in this way and under this assumption we don’t need to estimate \(\mu\). We can use a risk-free rate as our expected return in the pricing formula.

4.2 Black Scholes Assumptions

The Black-Scholes model makes several key assumptions:

- Stock price follows GBM: \(dS_t = \mu S_t \, dt + \sigma S_t \, dW_t\)

- Constant volatility: \(\sigma\) is constant over time

- No dividends: The stock pays no dividends during the option’s life

- European options: Can only be exercised at maturity

- No transaction costs: No fees for buying/selling

- No arbitrage: No risk-free profit opportunities (will get to it later)

- Risk-free rate is constant: a risk-free asset exists with constant rate \(r\)

- Continuous trading: Can trade at any time

- No restrictions on short selling: Can borrow and sell assets you don’t own

While these assumptions are idealized and not close to real world, the model works well in practice and forms the foundation of modern option pricing. and later we can adjust the model and use different models for handling different probabilities.

4.3 The Black-Scholes Partial Differential Equation

If \(C(S, t)\) is the price of a call option when the stock price is \(S\) at time \(t\). The Black-Scholes PDE is:

\[\frac{\partial C}{\partial t} + rS\frac{\partial C}{\partial S} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 C}{\partial S^2} = rC\]

If We create a portfolio buying 1 option and shorting \(\Delta = \frac{\partial C}{\partial S}\) shares of stock, the portfolio value is: \(\Pi = C - \Delta S\)

We need to track how the portfolio changes. Over a small time \(dt\), the change in portfolio value is: \[d\Pi = dC - \Delta \, dS\]

We need to figure out what \(dC\) and \(dS\) are. The option price \(C(S, t)\) depends on both the stock price \(S\) and time \(t\).

Using Itô’s lemma on \(C(S,t)\): \[dC = \left(\frac{\partial C}{\partial t} + \mu S\frac{\partial C}{\partial S} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 C}{\partial S^2}\right) dt + \sigma S\frac{\partial C}{\partial S} \, dW_t\]

The first part (with \(dt\)) is the deterministic drift. The second part (with \(dW_t\)) is the random fluctuation. The stock also follows a GBM:

\[dS = \mu S \, dt + \sigma S \, dW_t\]

Plugging \(dC\) and \(dS\) into \(d\Pi = dC - \Delta dS\) and choosing \(\Delta = \frac{\partial C}{\partial S}\):

\[d\Pi = \left(\frac{\partial C}{\partial t} + \mu S\frac{\partial C}{\partial S} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 C}{\partial S^2}\right) dt + \sigma S\frac{\partial C}{\partial S} \, dW_t - \frac{\partial C}{\partial S}(\mu S \, dt + \sigma S \, dW_t)\]

\[d\Pi = \left(\frac{\partial C}{\partial t} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 C}{\partial S^2}\right) dt\]

The \(dW_t\) terms disappeared, and so did \(\mu\). The portfolio is now completely deterministic and risk free. No randomness is left.

By no-arbitrage, Since the portfolio has no risk, it must earn the risk-free rate \(r\).

\[d\Pi = r\Pi \, dt = r(C - \Delta S) \, dt = r(C - S\frac{\partial C}{\partial S})\]

\[\left(\frac{\partial C}{\partial t} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 C}{\partial S^2}\right) dt = r\left(C - S\frac{\partial C}{\partial S}\right)\]

\[\frac{\partial C}{\partial t} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 C}{\partial S^2} = rC - rS\frac{\partial C}{\partial S}\]

\[\frac{\partial C}{\partial t} + rS\frac{\partial C}{\partial S} + \frac{1}{2}\sigma^2 S^2 \frac{\partial^2 C}{\partial S^2} = rC\]

This is how we get the Black-Scholes PDE.

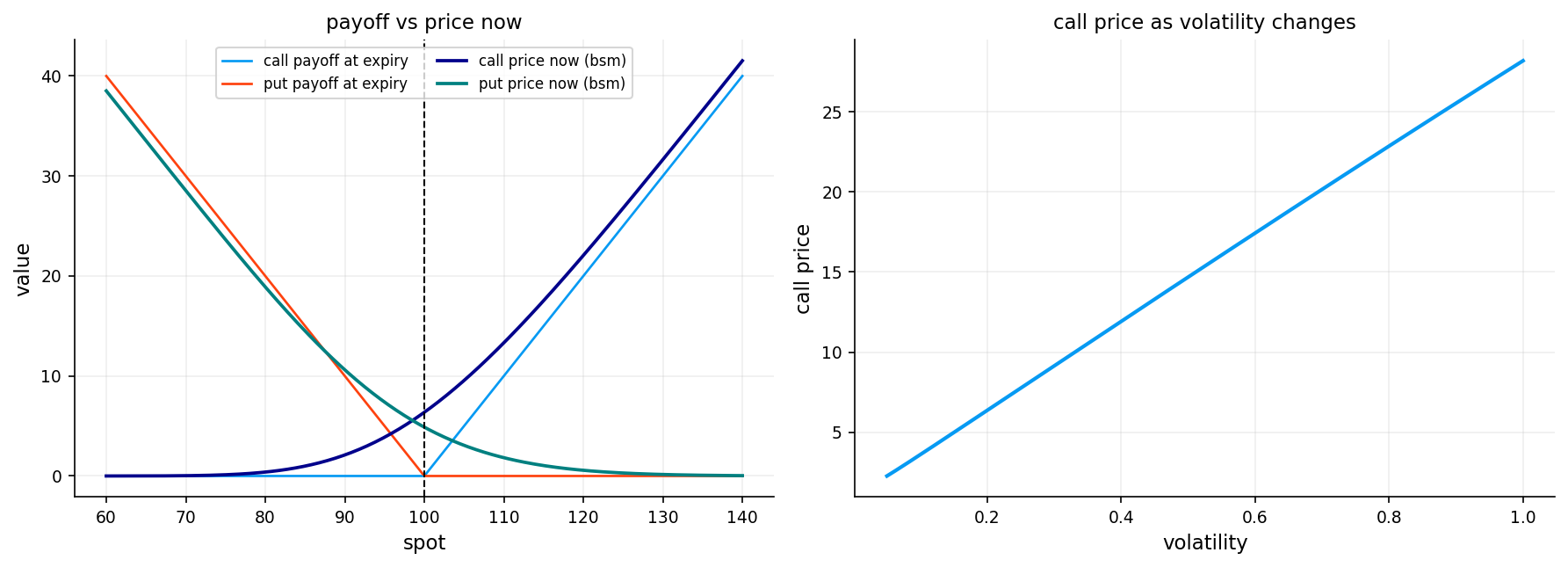

4.5 The Black-Scholes Formula

Solving the Black-Scholes PDE (or equivalently, computing the risk-neutral expectation) gives the famous Black-Scholes formula.

For a European call option:

\[C(S_0, T, K, r, \sigma) = S_0 \Phi(d_1) - Ke^{-rT}\Phi(d_2)\]

\[d_1 = \frac{\ln(S_0/K) + (r + \sigma^2/2)T}{\sigma\sqrt{T}}\]

\[d_2 = d_1 - \sigma\sqrt{T} = \frac{\ln(S_0/K) + (r - \sigma^2/2)T}{\sigma\sqrt{T}}\]

and \(\Phi(\cdot)\) is the cumulative distribution function (CDF) of the standard normal distribution:

\[\Phi(x) = \frac{1}{\sqrt{2\pi}}\int_{-\infty}^{x} e^{-z^2/2} \, dz\]

For a European put option (using put-call parity):

\[P(S_0, T, K, r, \sigma) = Ke^{-rT}\Phi(-d_2) - S_0\Phi(-d_1)\]

The call option price has two terms:

- \(S_0 \Phi(d_1)\): The expected value of the stock at maturity, weighted by the probability of exercise, adjusted for the stock’s current value

- \(Ke^{-rT}\Phi(d_2)\): The present value of the strike price, weighted by the probability of exercise

- \(\Phi(d_2)\) is approximately the risk-neutral probability that the option will be exercised (\(S_T > K\))

- \(\Phi(d_1)\) is related to the expected value of the stock conditional on exercise

Example calculation:

Suppose: - Current stock price: \(S_0 = 100\) - Strike price: \(K = 105\) - Time to maturity: \(T = 0.25\) years (3 months) - Risk-free rate: \(r = 0.05\) (5% per year) - Volatility: \(\sigma = 0.20\) (20% per year)

Calculate \(d_1\) and \(d_2\):

\[d_1 = \frac{\ln(100/105) + (0.05 + 0.20^2/2) \times 0.25}{0.20\sqrt{0.25}} = \frac{-0.04879 + 0.0175}{0.10} = -0.3129\]

\[d_2 = -0.3129 - 0.20\sqrt{0.25} = -0.3129 - 0.10 = -0.4129\]

Look up normal CDF values: - \(\Phi(-0.3129) \approx 0.3772\) - \(\Phi(-0.4129) \approx 0.3398\)

Calculate call price:

\[C = 100 \times 0.3772 - 105 \times e^{-0.05 \times 0.25} \times 0.3398\] \[C = 37.72 - 105 \times 0.9876 \times 0.3398\] \[C = 37.72 - 35.22 = 2.50\]

The call option is worth approximately \(\$2.50\).

def bs_price_spot(cp, spot, strike, tau, rate, vol):

tau = np.clip(np.asarray(tau, dtype=float), 1e-12, None)

vol = np.clip(np.asarray(vol, dtype=float), 1e-8, None)

spot = np.asarray(spot, dtype=float)

strike = np.asarray(strike, dtype=float)

rate = np.asarray(rate, dtype=float)

d1 = (np.log(np.clip(spot, 1e-12, None) / np.clip(strike, 1e-12, None)) + (rate + 0.5 * vol * vol) * tau) / (vol * np.sqrt(tau))

d2 = d1 - vol * np.sqrt(tau)

arr_cp = np.asarray(cp)

if arr_cp.ndim == 0:

is_call = bool(str(arr_cp.item()).lower().startswith("c"))

else:

is_call = pd.Series(arr_cp.reshape(-1)).astype(str).str.lower().str.startswith("c").to_numpy().reshape(arr_cp.shape)

call_val = spot * norm.cdf(d1) - strike * np.exp(-rate * tau) * norm.cdf(d2)

put_val = strike * np.exp(-rate * tau) * norm.cdf(-d2) - spot * norm.cdf(-d1)

return np.where(is_call, call_val, put_val)

strike = 100.0

rate = 0.03

tau = 0.5

spot_grid = np.linspace(60.0, 140.0, 240)

call_payoff = np.maximum(spot_grid - strike, 0.0)

put_payoff = np.maximum(strike - spot_grid, 0.0)

call_bs = bs_price_spot("call", spot_grid, strike, tau, rate, 0.2)

put_bs = bs_price_spot("put", spot_grid, strike, tau, rate, 0.2)

vol_grid = np.linspace(0.05, 1.0, 220)

price_vs_vol = bs_price_spot("call", 100.0, strike, tau, rate, vol_grid)

fig, axes = plt.subplots(1, 2, figsize=(12.0, 4.4))

axes[0].plot(spot_grid, call_payoff, lw=1.3, label="call payoff at expiry")

axes[0].plot(spot_grid, put_payoff, lw=1.3, label="put payoff at expiry")

axes[0].plot(spot_grid, call_bs, lw=1.8, label="call price now (bsm)")

axes[0].plot(spot_grid, put_bs, lw=1.8, label="put price now (bsm)")

axes[0].axvline(strike, lw=1.0, ls="--", c="k")

axes[0].set_title("payoff vs price now")

axes[0].set_xlabel("spot")

axes[0].set_ylabel("value")

axes[0].legend(loc="best", ncol=2)

axes[1].plot(vol_grid, price_vs_vol, lw=2.0)

axes[1].set_title("call price as volatility changes")

axes[1].set_xlabel("volatility")

axes[1].set_ylabel("call price")

plt.tight_layout()

plt.show()

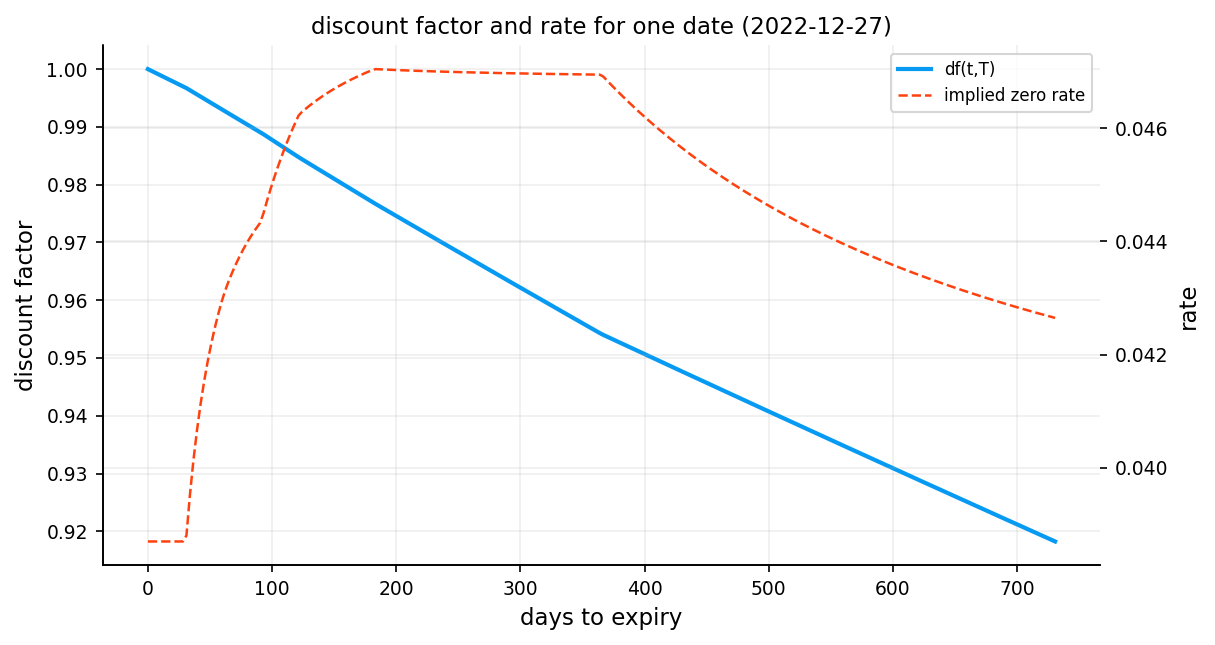

5) Constructing the Risk-Free Rate Curve

Option pricing requires discounting future payoffs back to the present using the risk-free rate \(r\). In practice, there isn’t a single risk-free rate. instead, we observe a term structure of rates across different maturities (overnight, 1 month, 3 months, 1 year, etc.).

For accurate option pricing, we need to build a continuous discount curve from discrete market observations and for every option with a maturity, we have to use their own risk-free rate based on their maturity for pricing them.

in the first project we focused on how we can do this and compared different approaches. i recommend checking it out: 1. Fixed income and yield curve construction

We use US treasury par yields again and only use the most accurate model for interpolation: Log-Linear

3.1 Handling the Short End

The short end (very short maturities, typically under 1 week) requires special treatment because the estimation can be noisy and we need a rate for emmidiate discounting.

Our approach:

We define a short rate \(r_{\text{short}}\) at a small time horizon \(\tau_{\text{short}} = 1/365.25\) (1 day). This rate is either: - Extracted from the first available tenor using simple exponential decay - Used as a constant for all maturities below the first observed tenor

This ensures we always have a well-defined rate for short-dated options without extrapolating into unstable regions.

The rest of the code is mostly covered in the first notebook.

def weighted_median(values, weights):

values = np.asarray(values, dtype=float)

weights = np.asarray(weights, dtype=float)

mask = np.isfinite(values) & np.isfinite(weights) & (weights > 0)

if mask.sum() == 0:

return np.nan

values = values[mask]

weights = weights[mask]

order = np.argsort(values)

values = values[order]

weights = weights[order]

csum = np.cumsum(weights)

idx = int(np.searchsorted(csum, 0.5 * weights.sum()))

idx = int(np.clip(idx, 0, len(values) - 1))

return float(values[idx])short_rate_tau = 1.0 / 365.25

rates_norm = fi.normalize_par_yields(df_rates.copy(), date_col="date")

rates_norm = rates_norm.sort_index()

curve_lookup = fi.make_discount_lookup(

rates_norm,

tenor_cols=list(rates_norm.columns),

curve_method="loglinear",

short_end="simple",

short_end_policy="first_tenor_exp",

)

get_df = curve_lookup["get_df"]

get_rate = curve_lookup["get_rate"]

resolve_curve_date = curve_lookup["resolve_date"]

curve_mode = curve_lookup["curve_mode"]

def get_r_short(trade_date, tau_short=short_rate_tau):

return float(get_rate(trade_date, tau_short))

curve_dates = np.sort(df_pairs["trade_date"].dropna().unique())

sample_date = pd.Timestamp(curve_dates[len(curve_dates) // 2]).normalize()

tau_grid = np.linspace(0.0, 2.0, 260)

df_grid = get_df(sample_date, tau_grid)

r_grid = np.where(

tau_grid > 0,

-np.log(np.clip(df_grid, 1e-12, None)) / np.clip(tau_grid, 1e-12, None),

get_r_short(sample_date),

)

df_100d = float(get_df(sample_date, 100.0 / 365.25))

r_100d = float(-np.log(max(df_100d, 1e-12)) / (100.0 / 365.25))

cdate_sample = resolve_curve_date(sample_date)

fig, ax1 = plt.subplots(figsize=(8.2, 4.4))

ax1.plot(tau_grid * 365.25, df_grid, lw=2, label="df(t,T)")

ax1.set_xlabel("days to expiry")

ax1.set_ylabel("discount factor")

ax2 = ax1.twinx()

ax2.plot(tau_grid * 365.25, r_grid, lw=1.2, ls="--", c= palette[1], label="implied zero rate")

ax2.set_ylabel("rate")

ax1.set_title(f"discount factor and rate for one date ({sample_date.date()})")

ln1, lb1 = ax1.get_legend_handles_labels()

ln2, lb2 = ax2.get_legend_handles_labels()

ax1.legend(ln1 + ln2, lb1 + lb2, loc="best")

plt.tight_layout()

plt.show()

df_curve_diag = pd.DataFrame(

[

{"metric": "sample_date", "value": str(sample_date.date())},

{"metric": "curve_date_used", "value": str(pd.Timestamp(cdate_sample).date())},

{"metric": "df_100d", "value": df_100d},

{"metric": "cc_zero_rate_100d", "value": r_100d},

{"metric": "short_rate_tau_days", "value": short_rate_tau * 365.25},

]

)

df_curve_diag

| metric | value | |

|---|---|---|

| 0 | sample_date | 2022-12-27 |

| 1 | curve_date_used | 2022-12-27 |

| 2 | df_100d | 0.987753 |

| 3 | cc_zero_rate_100d | 0.045009 |

| 4 | short_rate_tau_days | 1.000000 |

6 Forward Price

6.1 Forward Price Estimation via Put-Call Parity

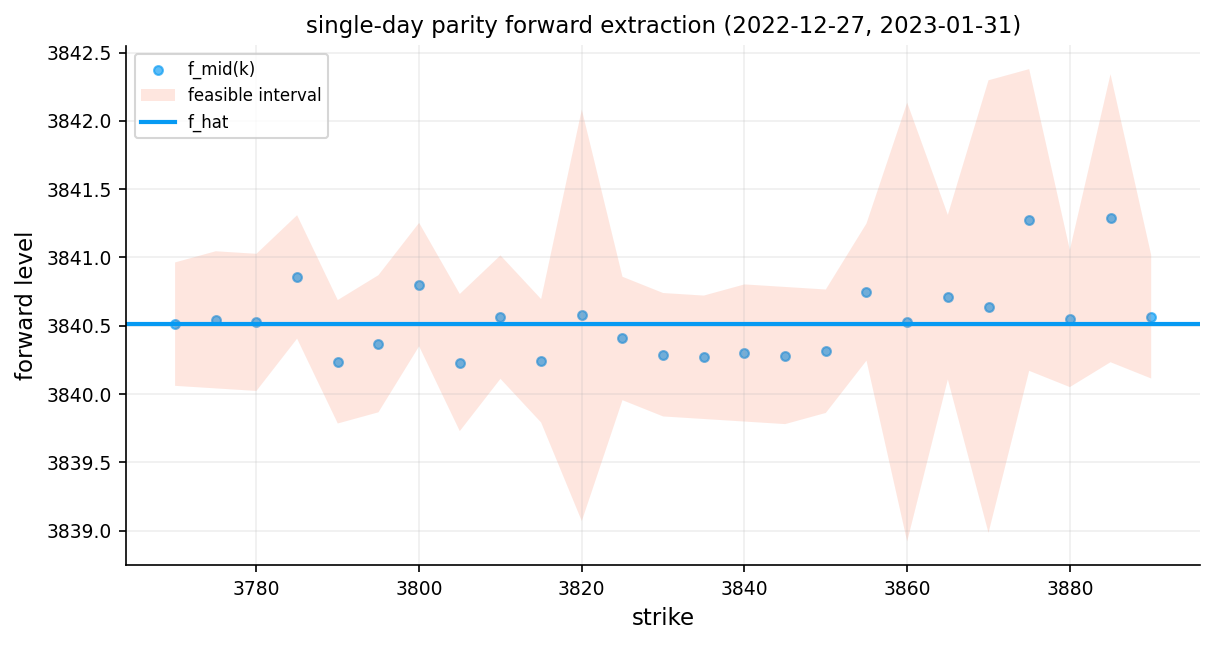

Before computing implied volatilities, we need a reliable estimate of the forward price \(F\). Rather than using the spot price and an assumed dividend yield, we extract \(F\) directly from market data using put-call parity:

\[C - P = D(t,T) \cdot (F - K)\]

Rearranging for the forward:

\[F(K) = K + \frac{C(K) - P(K)}{D(t,T)}\]

For each strike \(K\) where we observe both a call and a put, this gives us an implied forward. In a perfect market, all strikes would give the same \(F\). In practice, they vary due to bid-ask spreads, liquidity differences, and microstructure noise.

Constructing the forward estimate:

For each strike \(K\), we compute:

\[F(K) = K + \frac{C_{\text{mid}} - P_{\text{mid}}}{D(t,T)}\]

We also compute bounds using bid-ask spreads:

\[F_{\text{low}}(K) = K + \frac{C_{\text{bid}} - P_{\text{ask}}}{D(t,T)}\] \[F_{\text{high}}(K) = K + \frac{C_{\text{ask}} - P_{\text{bid}}}{D(t,T)}\]

Different strikes give slightly different forward estimates due to bid-ask spreads, Microstructure noise or violations of put-call parity like transaction costs or early exercise for American options

The interval \([F_{\text{low}}, F_{\text{high}}]\) is the feasible range. Any forward within this interval is consistent with no-arbitrage given the bid-ask spreads. \(F_{\text{mid}}\) is our point estimate.

Then we aggregate these estimates using a weighted median, where the weight for each strike is:

\[w_K = \frac{1}{(C_{\text{ask}} - C_{\text{bid}}) + (P_{\text{ask}} - P_{\text{bid}})}\]

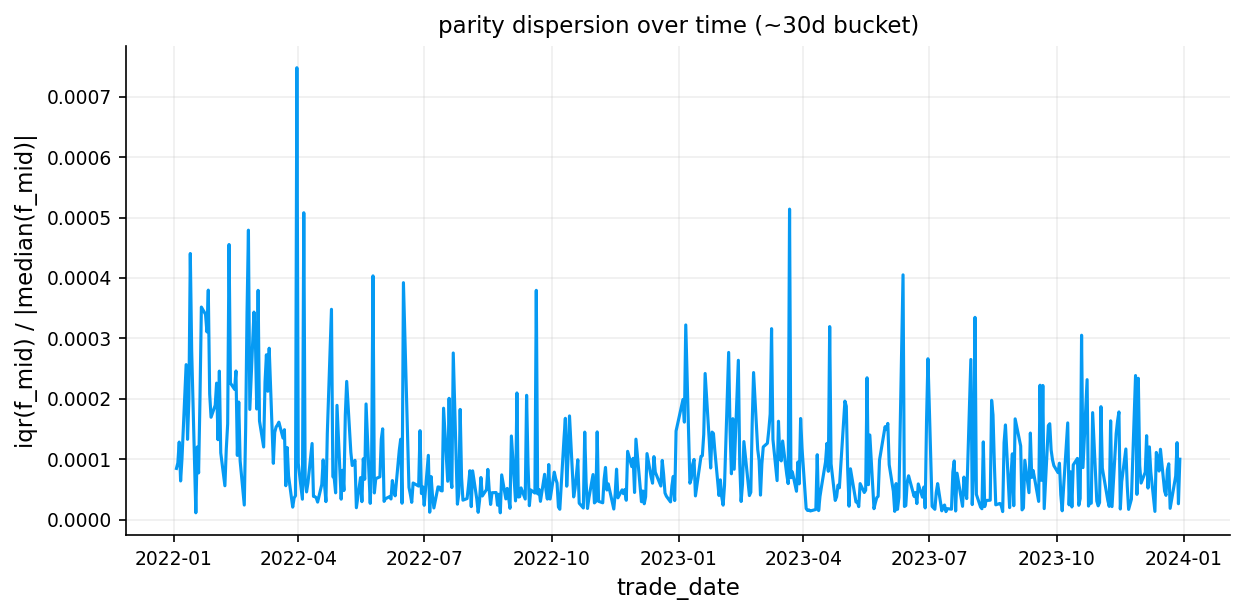

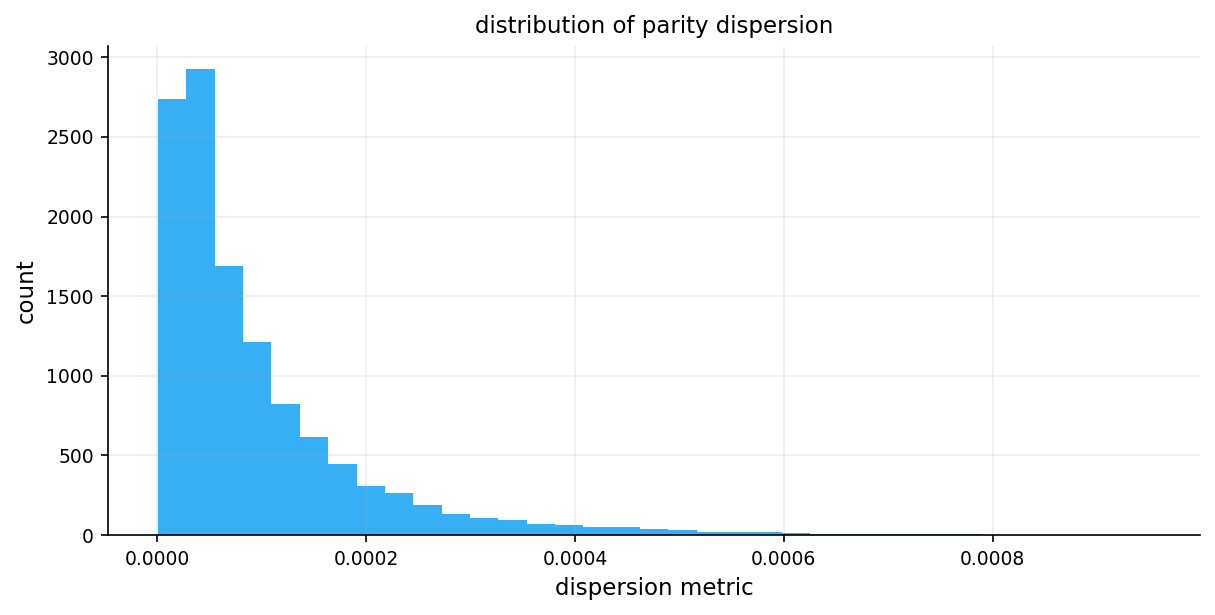

We then compute two diagnostics:

- Dispersion: The interquartile range divided by the median

- Measures the spread of forward estimates

- Low dispersion shows consistent pricing across strikes

- Feasibility: The fraction of strikes where \(F_{\text{low}} \leq \hat{F} \leq F_{\text{high}}\)

- Measures how often our estimate falls within the no-arbitrage bounds

- High feasibility shows the estimate is consistent with market prices

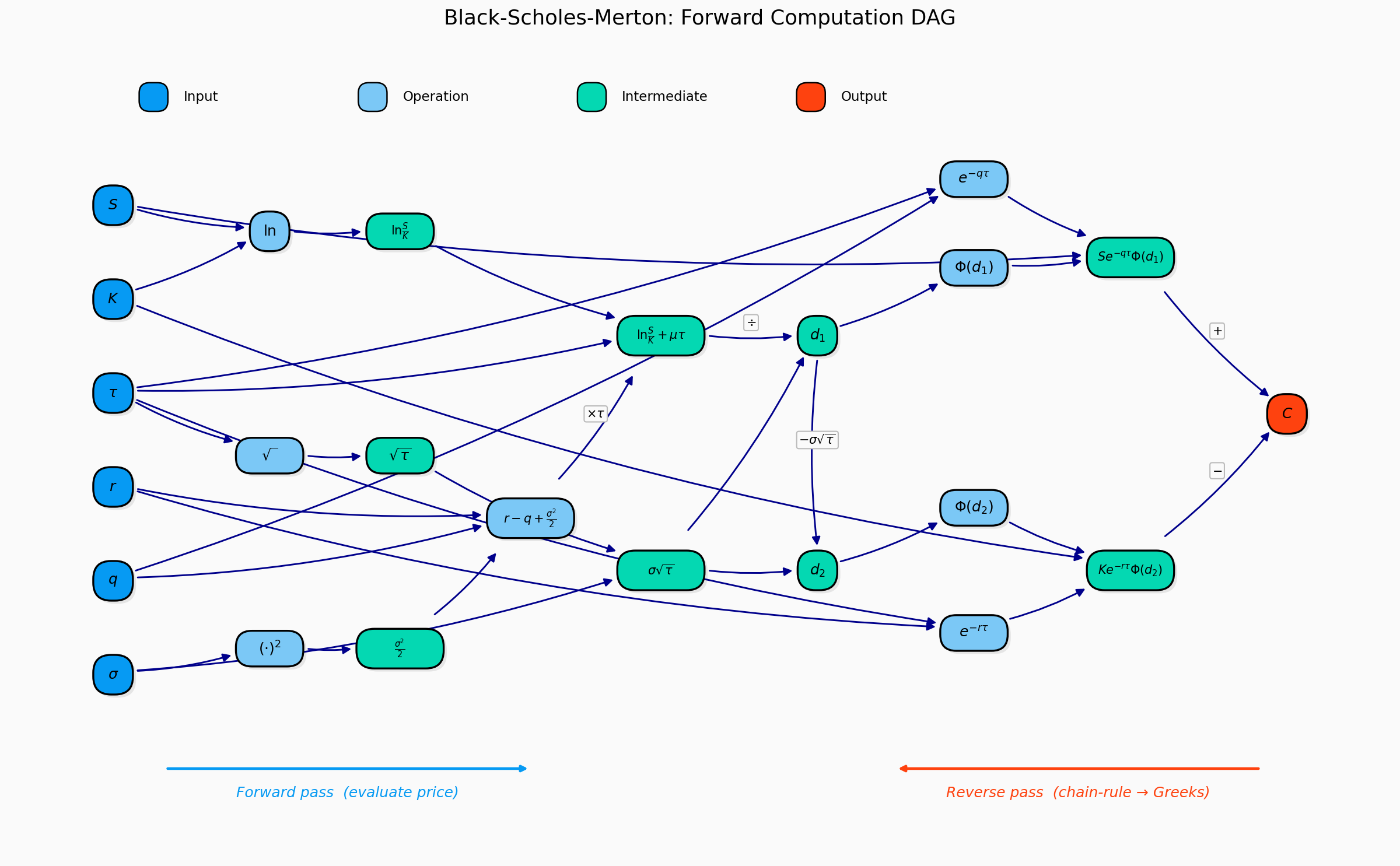

6.2 Pricing with the Black-Scholes Forward Formula

With the forward price in hand, we use the forward form of the Black-Scholes formula. This form separates discounting from the option’s intrinsic pricing:

\[C = D(t,T) \left[ F \cdot \Phi(d_1) - K \cdot \Phi(d_2) \right]\]

\[P = D(t,T) \left[ K \cdot \Phi(-d_2) - F \cdot \Phi(-d_1) \right]\]

where:

\[d_1 = \frac{\ln(F/K) + \frac{1}{2}\sigma^2 \tau}{\sigma\sqrt{\tau}}, \qquad d_2 = d_1 - \sigma\sqrt{\tau}\]

We use the forward form because:

- We don’t need to estimate the dividend yield or repo rate separately

- The forward already incorporates all carry costs

- It’s more natural for market-making and relative value analysis

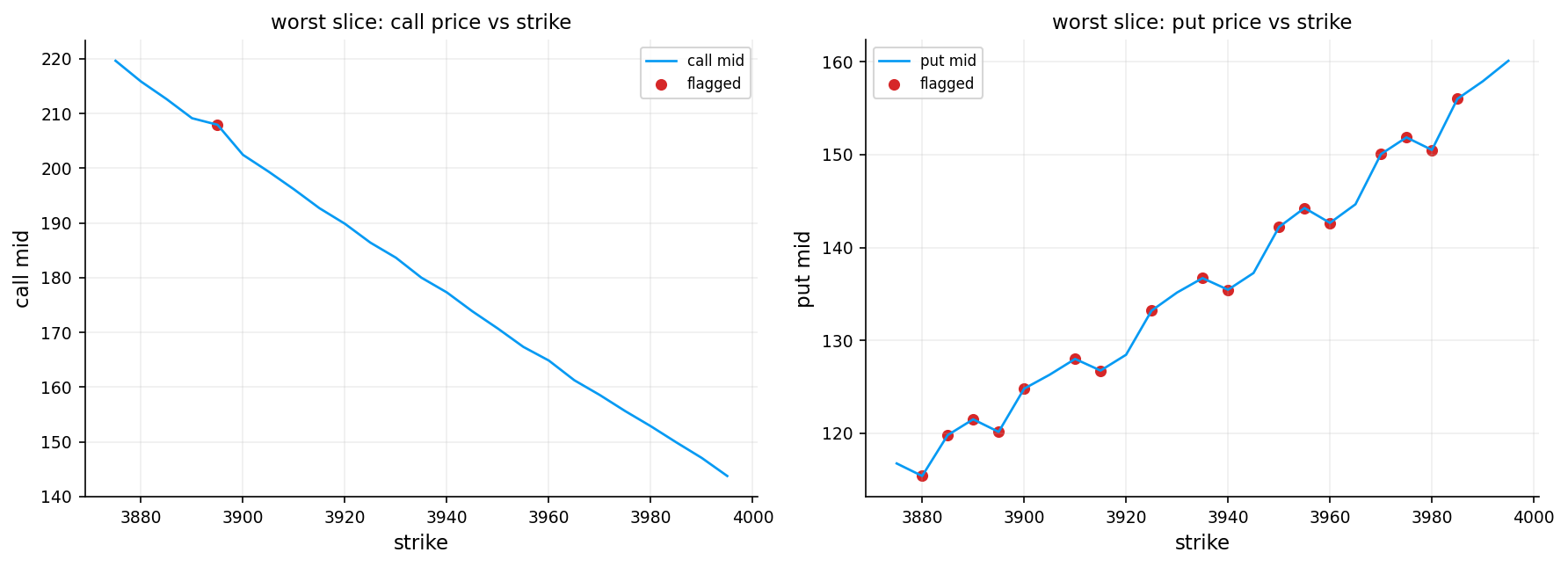

6.3 Arbitrage Bounds on Option Prices

Before attempting to solve for implied volatility, we check that the market price falls within no arbitrage bounds:

For calls:

\[D(t,T) \cdot \max(F - K, 0) \leq C \leq D(t,T) \cdot F\]

For puts:

\[D(t,T) \cdot \max(K - F, 0) \leq P \leq D(t,T) \cdot K\]

The lower bound is the discounted intrinsic value (the price if \(\sigma = 0\)). The upper bound is the discounted maximum possible payoff (the price as \(\sigma \to \infty\)).

If the market price violates these bounds, no implied volatility exists, and we flag the option accordingly.

7) Volatility

Volatility is the single most important input in options pricing. Unlike the stock price, interest rate, and time to expiration (which are directly observable), volatility must be estimated or inferred. We need volatility to price options, but we can only observe past price movements, not future ones, but also different market participants have different views on future volatility, creating trading opportunities and risk premia.

- Option prices are highly sensitive to volatility changes

- Future volatility is inherently uncertain and varies over time

- Volatility affects OTM options more than ITM options, creating the volatility smile (will get to them later)

- Investors pay for volatility exposure, creating systematic pricing patterns

There are so many different ways to estimate volatility:

- Realized volatility (RV): What actually happened — computed from historical returns

- Implied volatility (IV): What the market expects — extracted from option prices

- GARCH volatility forecast

- Local Volatility

- Stochastic volatility

We get to the first two in this notebook and the rest of the models in later projects.

7.1 Realized Volatility

Realized volatility is the volatility computed from actual price movements over a specific period in the history of the asset. It’s backward-looking and objective.

For a time series of log returns \(r_1, r_2, \ldots, r_n\):

\[\text{RV} = \sqrt{\frac{1}{n-1} \sum_{i=1}^{n} (r_i - \bar{r})^2} \times \sqrt{252}\]

We use it as a benchmark to evaluate other volatility models.

7.2 Implied Volatility

In the Black-Scholes model, we can compute an option price given volatility \(\sigma\):

\[C = D(t,T) \left[ F \Phi(d_1) - K \Phi(d_2) \right]\]

where: - \(F\) is the forward price - \(D(t,T)\) is the discount factor - \(d_1 = \frac{\ln(F/K) + \frac{1}{2}\sigma^2 \tau}{\sigma\sqrt{\tau}}\) - \(d_2 = d_1 - \sigma\sqrt{\tau}\)

But in practice, we observe market prices and want to find the volatility that produces those prices. This is the implied volatility — the market’s expectation of future volatility embedded in option prices.

The problem is that there’s no closed-form solution for \(\sigma\) given \(C\). We have similar options to the one we want to price that have traded before and their details and how much others priced it. We can find a \(\sigma\) that makes the BSM model’s price equal to the price that other have agreed on. We must solve numerically:

\[C_{\text{BSM}}(\sigma) = C_{\text{market}}\]

This is a root-finding problem. We seek the zero of the function \(f(\sigma) = \text{BSM}(\sigma) - C_{\text{market}}\)

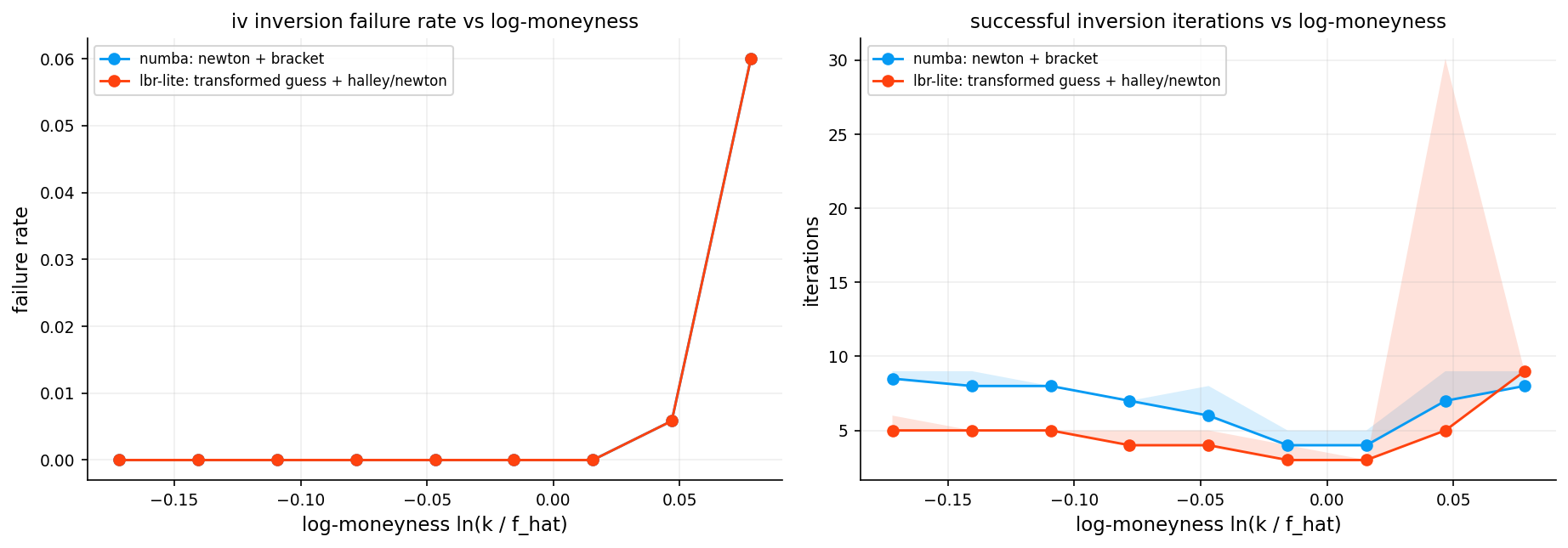

7.2.1 Newton-Raphson Method

One of the most efficient methods for solving the implied volatility equation is Newton-Raphson iteration. This is a classic numerical optimization technique that uses both the function value and its derivative to converge rapidly.

The Newton-Raphson update rule:

\[\sigma_{n+1} = \sigma_n - \frac{f(\sigma_n)}{f'(\sigma_n)}\]

where: - \(f(\sigma) = \text{BSM}(\sigma) - C_{\text{market}}\) is the pricing error - \(f'(\sigma) = \frac{\partial \text{BSM}}{\partial \sigma} = \text{Vega}\) is the sensitivity of price to volatility (we get to it more later).

At each iteration, we approximate the function \(f(\sigma)\) with its tangent line at \(\sigma_n\):

\[f(\sigma) \approx f(\sigma_n) + f'(\sigma_n)(\sigma - \sigma_n)\]

Setting this equal to zero and solving for \(\sigma\) gives the Newton-Raphson update. Geometrically, we’re following the tangent line to where it crosses the \(\sigma\)-axis.

7.2.2 Bisection Method (The Safety Net)

Newton-Raphson is fast but can fail if: - The initial guess is far from the solution - Vega is very small (near zero or deep ITM/OTM) - Numerical instability causes the update to overshoot

To ensure robustness, we combine Newton-Raphson with bisection, a slower but guaranteed-to-converge method.

The bisection algorithm:

- Start with an interval \([\sigma_{\text{low}}, \sigma_{\text{high}}]\) that brackets the solution

- Evaluate \(f(\sigma_{\text{mid}})\) at the midpoint \(\sigma_{\text{mid}} = \frac{\sigma_{\text{low}} + \sigma_{\text{high}}}{2}\)

- If \(f(\sigma_{\text{mid}}) > 0\), the solution is in \([\sigma_{\text{low}}, \sigma_{\text{mid}}]\); otherwise it’s in \([\sigma_{\text{mid}}, \sigma_{\text{high}}]\)

- Repeat until the interval is sufficiently small

It has linear convergance and it takes maybe to 20 iterations for convergence but convergence is guaranteed.

Our hybrid approach:

- Phase 1 (12 iterations): Try Newton-Raphson with safeguards

- If the Newton step would leave the bracketing interval \([\sigma_{\text{low}}, \sigma_{\text{high}}]\), fall back to bisection

- Update the bracketing interval based on the sign of the pricing error

- Phase 2 (28 iterations): Pure bisection as a fallback

- If Newton-Raphson hasn’t converged, switch to guaranteed bisection

- This ensures we always return a result, even for difficult cases

This combination gives us the speed of Newton-Raphson with the reliability of bisection.

7.2.3 Let’s be rational inspired method (Halley/Householder Refinement)

Inspired by Peter Jäckel’s “Let’s Be Rational” algorithm, this solver uses higher-order derivatives for faster convergence.

Householder correction (4 iterations):

Instead of the simple Newton step, we apply a Halley-like correction using Volga (the second derivative of price with respect to volatility):

\[\text{Volga} = \mathcal{V} \cdot \frac{d_1 \cdot d_2}{\sigma}\]

The corrected step is:

\[\sigma_{n+1} = \sigma_n - \frac{\Delta}{\mathcal{V}} \cdot \frac{1}{1 - \frac{\Delta}{2\mathcal{V}} \cdot \frac{\text{Volga}}{\mathcal{V}}}\]

where \(\Delta = C_{\text{BS}}(\sigma_n) - C_{\text{market}}\).

Newton-Raphson has quadratic convergence: error \(\sim \varepsilon^2\) per step. Halley/Householder has cubic convergence: error \(\sim \varepsilon^3\) per step. With a good initial guess, 3-4 Householder iterations often suffice

The denominator \(1 - \frac{\text{step} \cdot \text{Volga}}{2\mathcal{V}}\) corrects for the curvature of the price-volatility relationship. We apply a safety check: if the denominator is too small (\(< 0.35\)), we fall back to a plain Newton step.

After that we use bracketed Newton cleanup (8 iterations). Same as Solver 1. Catches any residual error.

And then short bisection finish (up to 28 iterations). Final safety net for extreme cases.

Convergence is declared if any of: - \(|C_{\text{BS}}(\sigma) - C_{\text{market}}| < 10^{-8}\) - \(|\sigma_{\text{high}} - \sigma_{\text{low}}| < 10^{-7}\)

7.2.4 Initial Guess

The quality of the initial guess dramatically affects convergence speed. A poor guess can cause Newton-Raphson to diverge or waste iterations.

We use three complementary approaches:

Approach 1: Time-value heuristic

\[\sigma_0 = \sqrt{\frac{2\pi}{\tau}} \cdot \frac{\text{time value}}{\max(D \cdot F, \; D \cdot K)}\]

This comes from the ATM approximation \(C_{\text{ATM}} \approx D \cdot F \cdot \sigma \sqrt{\tau / (2\pi)}\), inverted for \(\sigma\).

Approach 2: Corrado-Miller forward formula

This uses a quadratic approximation to the Black-Scholes formula. Starting from the call-equivalent price (converting puts to calls via parity):

\[C^* = \begin{cases} C & \text{if call} \\ C + D(F - K) & \text{if put} \end{cases}\]

then solving a quadratic involving the midpoint \(\frac{1}{2}(DF - DK)\) and a discriminant correction.

Approach 3: Transformed-space estimate (inspired by “Let’s Be Rational”)

For away-from-the-money options, we work in a normalized space:

\[\beta = \frac{\text{time value}}{D \cdot \sqrt{F \cdot K}}\]

\[x = |\ln(F/K)|\]

and apply a Padé-like correction:

\[\sigma_{\text{total}} = \sqrt{2\pi} \cdot \beta \cdot (1 + 0.35x + 0.08x^2)\]

\[\sigma_0 = \frac{\sigma_{\text{total}}}{\sqrt{\tau}}\]

This approximation handles the transition from ATM (where \(x \approx 0\)) to deep OTM (where \(x\) is large) smoothly.

Adaptive seed selection:

The second solver picks between these guesses based on moneyness:

| Region | Condition | Strategy |

|---|---|---|

| Near ATM | \(\|x\| < 0.08\) | Average of heuristic and Corrado-Miller |

| Moderate OTM | \(0.08 \leq \|x\| < 0.18\) | Average of Corrado-Miller and transformed |

| Deep OTM | \(\|x\| \geq 0.18\) | Transformed-space estimate |

This ensures the initial guess is always in the right ballpark, regardless of moneyness.

Faster optimization with Numba

Numba is a just-in-time (JIT) compiler for Python that translates Python functions into optimized machine code. For numerical algorithms like ours, it provides 10 to 100x faster execution and can run the code on different modes or even running on GPU.

Also another choice for faster code is precomputing. Instead of recomputing \(\sqrt{\tau}\) and \(\ln(F/K)\) in every iteration, we compute them once outside the loop and pass them as arguments. This eliminates redundant calculations and improves cache efficiency.

def cp_is_call(cp):

arr = np.asarray(cp)

if arr.ndim == 0:

return bool(str(arr.item()).lower().startswith("c"))

return np.char.startswith(np.char.lower(arr.astype(str)), "c")

def bsm_forward_price(cp, df, fwd, strike, tau, sigma):

tau = np.clip(np.asarray(tau, dtype=float), 1e-12, None)

sigma = np.clip(np.asarray(sigma, dtype=float), 1e-8, None)

df = np.asarray(df, dtype=float)

fwd = np.asarray(fwd, dtype=float)

strike = np.asarray(strike, dtype=float)

sqrt_tau = np.sqrt(tau)

d1 = (np.log(np.clip(fwd, 1e-12, None) / np.clip(strike, 1e-12, None)) + 0.5 * sigma * sigma * tau) / (sigma * sqrt_tau)

d2 = d1 - sigma * sqrt_tau

is_call = cp_is_call(cp)

call_val = df * (fwd * norm.cdf(d1) - strike * norm.cdf(d2))

put_val = df * (strike * norm.cdf(-d2) - fwd * norm.cdf(-d1))

return np.where(is_call, call_val, put_val)

def iv_bounds(cp, df, fwd, strike):

is_call = cp_is_call(cp)

df = np.asarray(df, dtype=float)

fwd = np.asarray(fwd, dtype=float)

strike = np.asarray(strike, dtype=float)

low_call = df * np.maximum(fwd - strike, 0.0)

high_call = df * fwd

low_put = df * np.maximum(strike - fwd, 0.0)

high_put = df * strike

low = np.where(is_call, low_call, low_put)

high = np.where(is_call, high_call, high_put)

return low, high

iv_status = {

0: "ok",

1: "nan_input",

2: "bounds_violation",

3: "no_convergence",

}

@njit

def norm_cdf_numba(x):

return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

@njit

def norm_pdf_numba(x):

return 0.3989422804014327 * math.exp(-0.5 * x * x)

@njit

def bsm_price_precomputed_numba(is_call, df, fwd, strike, tau, sqrt_tau, log_fk, sigma):

sigma = max(sigma, 1e-12)

d1 = (log_fk + 0.5 * sigma * sigma * tau) / (sigma * sqrt_tau)

d2 = d1 - sigma * sqrt_tau

if is_call:

return df * (fwd * norm_cdf_numba(d1) - strike * norm_cdf_numba(d2))

return df * (strike * norm_cdf_numba(-d2) - fwd * norm_cdf_numba(-d1))

@njit

def bsm_vega_precomputed_numba(df, fwd, tau, sqrt_tau, log_fk, sigma):

sigma = max(sigma, 1e-12)

d1 = (log_fk + 0.5 * sigma * sigma * tau) / (sigma * sqrt_tau)

return df * fwd * norm_pdf_numba(d1) * sqrt_tau

@njit

def bsm_volga_precomputed_numba(df, fwd, tau, sqrt_tau, log_fk, sigma):

sigma = max(sigma, 1e-12)

d1 = (log_fk + 0.5 * sigma * sigma * tau) / (sigma * sqrt_tau)

d2 = d1 - sigma * sqrt_tau

vega = df * fwd * norm_pdf_numba(d1) * sqrt_tau

return vega * d1 * d2 / sigma

@njit

def iv_one_precomputed_core_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

if not (

np.isfinite(price) and np.isfinite(low) and np.isfinite(high)

and np.isfinite(df) and np.isfinite(fwd) and np.isfinite(strike) and np.isfinite(tau)

):

return np.nan, 1, 0

if (price <= 0.0) or (df <= 0.0) or (fwd <= 0.0) or (strike <= 0.0) or (tau <= 0.0):

return np.nan, 1, 0

if (price < low - 1e-10) or (price > high + 1e-10):

return np.nan, 2, 0

lo = 1e-8

hi = 5.0

sigma = min(max(guess, 0.03), 2.0)

n_iter = 0

diff = 0.0

for _ in range(12):

n_iter += 1

p = bsm_price_precomputed_numba(is_call, df, fwd, strike, tau, sqrt_tau, log_fk, sigma)

diff = p - price

if abs(diff) < 1e-8:

return sigma, 0, n_iter

if diff > 0.0:

hi = sigma

else:

lo = sigma

v = bsm_vega_precomputed_numba(df, fwd, tau, sqrt_tau, log_fk, sigma)

if (not np.isfinite(v)) or (v < 1e-8):

sigma = 0.5 * (lo + hi)

else:

nxt = sigma - diff / v

if np.isfinite(nxt) and (lo < nxt < hi):

sigma = nxt

else:

sigma = 0.5 * (lo + hi)

for _ in range(28):

n_iter += 1

sigma = 0.5 * (lo + hi)

p = bsm_price_precomputed_numba(is_call, df, fwd, strike, tau, sqrt_tau, log_fk, sigma)

diff = p - price

if abs(diff) < 1e-8:

return sigma, 0, n_iter

if diff > 0.0:

hi = sigma

else:

lo = sigma

return sigma, 3, n_iter

@njit

def iv_one_precomputed_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

sigma, status, _ = iv_one_precomputed_core_numba(

is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk

)

return sigma, status

@njit(parallel=True)

def iv_array_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

n = len(price)

sigma_out = np.empty(n, dtype=np.float64)

status_out = np.empty(n, dtype=np.int64)

for i in prange(n):

sigma_i, status_i = iv_one_precomputed_numba(

is_call[i],

price[i],

low[i],

high[i],

guess[i],

df[i],

fwd[i],

strike[i],

tau[i],

sqrt_tau[i],

log_fk[i],

)

sigma_out[i] = sigma_i

status_out[i] = status_i

return sigma_out, status_out

@njit(parallel=True)

def iv_array_numba_with_iters(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

n = len(price)

sigma_out = np.empty(n, dtype=np.float64)

status_out = np.empty(n, dtype=np.int64)

iter_out = np.empty(n, dtype=np.int64)

for i in prange(n):

sigma_i, status_i, iter_i = iv_one_precomputed_core_numba(

is_call[i],

price[i],

low[i],

high[i],

guess[i],

df[i],

fwd[i],

strike[i],

tau[i],

sqrt_tau[i],

log_fk[i],

)

sigma_out[i] = sigma_i

status_out[i] = status_i

iter_out[i] = iter_i

return sigma_out, status_out, iter_out

def iv_guess_from_price(price, low, df, fwd, strike, tau):

time_val = np.maximum(np.asarray(price, dtype=float) - np.asarray(low, dtype=float), 1e-12)

scale = np.maximum(np.asarray(df, dtype=float) * np.maximum(np.asarray(fwd, dtype=float), np.asarray(strike, dtype=float)), 1e-12)

guess = np.sqrt(2.0 * np.pi / np.maximum(np.asarray(tau, dtype=float), 1e-12)) * (time_val / scale)

return np.clip(guess, 0.03, 2.0)

@njit

def call_equiv_price_numba(is_call, price, df, fwd, strike):

if is_call:

return price

return price + df * (fwd - strike)

@njit

def iv_guess_cm_forward_numba(is_call, price, df, fwd, strike, tau):

a = df * fwd

b = df * strike

c = call_equiv_price_numba(is_call, price, df, fwd, strike)

core = c - 0.5 * (a - b)

disc = core * core - (a - b) * (a - b) / math.pi

if disc > 0.0 and (a + b) > 1e-12:

sigma = math.sqrt(2.0 * math.pi / max(tau, 1e-12)) * (core + math.sqrt(disc)) / (a + b)

else:

sigma = math.sqrt(2.0 * math.pi / max(tau, 1e-12)) * c / max(0.5 * (a + b), 1e-12)

if not np.isfinite(sigma):

sigma = 0.20

return min(max(sigma, 0.01), 4.0)

@njit

def iv_guess_transformed_lite_numba(price, low, df, fwd, strike, tau, log_fk):

time_value = max(price - low, 1e-14)

beta = time_value / max(df * math.sqrt(max(fwd * strike, 1e-14)), 1e-14)

x = abs(log_fk)

total_vol_guess = math.sqrt(2.0 * math.pi) * beta * (1.0 + 0.35 * x + 0.08 * x * x)

sigma = total_vol_guess / math.sqrt(max(tau, 1e-12))

return min(max(sigma, 0.01), 4.0)

@njit

def select_seed_lbr_lite_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

g0 = min(max(guess, 0.01), 4.0)

g1 = iv_guess_cm_forward_numba(is_call, price, df, fwd, strike, tau)

g2 = iv_guess_transformed_lite_numba(price, low, df, fwd, strike, tau, log_fk)

x = abs(log_fk)

if x < 0.08:

sigma0 = 0.5 * (g0 + g1)

elif x < 0.18:

sigma0 = 0.5 * (g1 + g2)

else:

sigma0 = g2

return min(max(sigma0, 0.01), 4.0)

@njit

def iv_one_lbr_lite_core_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

if not (

np.isfinite(price) and np.isfinite(low) and np.isfinite(high)

and np.isfinite(df) and np.isfinite(fwd) and np.isfinite(strike) and np.isfinite(tau)

):

return np.nan, 1, 0

if (price <= 0.0) or (df <= 0.0) or (fwd <= 0.0) or (strike <= 0.0) or (tau <= 0.0):

return np.nan, 1, 0

if (price < low - 1e-10) or (price > high + 1e-10):

return np.nan, 2, 0

lo = 1e-8

hi = 5.0

sigma = select_seed_lbr_lite_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk)

sigma = min(max(sigma, 0.01), 4.0)

n_iter = 0

diff = 0.0

for _ in range(4):

n_iter += 1

p = bsm_price_precomputed_numba(is_call, df, fwd, strike, tau, sqrt_tau, log_fk, sigma)

diff = p - price

if abs(diff) < 1e-8:

return sigma, 0, n_iter

if diff > 0.0:

hi = sigma

else:

lo = sigma

v = bsm_vega_precomputed_numba(df, fwd, tau, sqrt_tau, log_fk, sigma)

if (not np.isfinite(v)) or (v < 1e-10):

sigma = 0.5 * (lo + hi)

continue

volga = bsm_volga_precomputed_numba(df, fwd, tau, sqrt_tau, log_fk, sigma)

step = diff / v

denom = 1.0 - 0.5 * step * volga / max(v, 1e-12)

if np.isfinite(denom) and (abs(denom) > 0.35):

nxt = sigma - step / denom

else:

nxt = sigma - step

if (not np.isfinite(nxt)) or (nxt <= lo) or (nxt >= hi):

nxt = 0.5 * (lo + hi)

sigma = nxt

for _ in range(8):

n_iter += 1

p = bsm_price_precomputed_numba(is_call, df, fwd, strike, tau, sqrt_tau, log_fk, sigma)

diff = p - price

if abs(diff) < 1e-8:

return sigma, 0, n_iter

if diff > 0.0:

hi = sigma

else:

lo = sigma

v = bsm_vega_precomputed_numba(df, fwd, tau, sqrt_tau, log_fk, sigma)

if (not np.isfinite(v)) or (v < 1e-10):

sigma = 0.5 * (lo + hi)

else:

nxt = sigma - diff / v

if np.isfinite(nxt) and (lo < nxt < hi):

sigma = nxt

else:

sigma = 0.5 * (lo + hi)

for _ in range(28):

n_iter += 1

sigma = 0.5 * (lo + hi)

p = bsm_price_precomputed_numba(is_call, df, fwd, strike, tau, sqrt_tau, log_fk, sigma)

diff = p - price

if (abs(diff) < 1e-8) or (abs(diff) < 1e-6) or ((hi - lo) < 1e-7):

return sigma, 0, n_iter

if diff > 0.0:

hi = sigma

else:

lo = sigma

if (abs(diff) < 1e-6) or ((hi - lo) < 1e-7):

return sigma, 0, n_iter

return sigma, 3, n_iter

@njit

def iv_one_lbr_lite_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

sigma, status, _ = iv_one_lbr_lite_core_numba(

is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk

)

return sigma, status

@njit(parallel=True)

def iv_array_lbr_lite_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

n = len(price)

sigma_out = np.empty(n, dtype=np.float64)

status_out = np.empty(n, dtype=np.int64)

for i in prange(n):

sigma_i, status_i = iv_one_lbr_lite_numba(

is_call[i],

price[i],

low[i],

high[i],

guess[i],

df[i],

fwd[i],

strike[i],

tau[i],

sqrt_tau[i],

log_fk[i],

)

sigma_out[i] = sigma_i

status_out[i] = status_i

return sigma_out, status_out

@njit(parallel=True)

def iv_array_lbr_lite_with_iters_numba(is_call, price, low, high, guess, df, fwd, strike, tau, sqrt_tau, log_fk):

n = len(price)

sigma_out = np.empty(n, dtype=np.float64)

status_out = np.empty(n, dtype=np.int64)

iter_out = np.empty(n, dtype=np.int64)

for i in prange(n):

sigma_i, status_i, iter_i = iv_one_lbr_lite_core_numba(

is_call[i],

price[i],

low[i],

high[i],

guess[i],

df[i],

fwd[i],

strike[i],

tau[i],

sqrt_tau[i],

log_fk[i],

)

sigma_out[i] = sigma_i

status_out[i] = status_i

iter_out[i] = iter_i

return sigma_out, status_out, iter_out

def solve_iv_forward(cp, price, df, fwd, strike, tau, init_sigma=0.20):

tau = float(max(float(tau), 1e-12))

sqrt_tau = float(np.sqrt(tau))

log_fk = float(np.log(max(float(fwd), 1e-12) / max(float(strike), 1e-12)))

low, high = iv_bounds(cp, float(df), float(fwd), float(strike))

low = float(np.asarray(low).reshape(-1)[0])

high = float(np.asarray(high).reshape(-1)[0])

guess = float(np.clip(init_sigma, 0.03, 2.0))

sigma, status = iv_one_precomputed_numba(

bool(cp_is_call(cp)),

float(price),

low,

high,

guess,

float(df),

float(fwd),

float(strike),

tau,

sqrt_tau,

log_fk,

)

reason = iv_status.get(int(status), "unknown")

return (float(sigma) if int(status) == 0 else np.nan), bool(int(status) == 0), reason

def estimate_forward_slice(df_slice, near_band=0.15):

if df_slice.empty:

return {"ok": False}

tau = float(np.nanmedian(df_slice["tau"]))

trade_date = pd.Timestamp(df_slice["trade_date"].iloc[0]).normalize()

df_tau = float(get_df(trade_date, tau))

strike = df_slice["strike"].to_numpy(dtype=float)

c_bid = df_slice["c_bid"].to_numpy(dtype=float)

c_ask = df_slice["c_ask"].to_numpy(dtype=float)

c_mid = df_slice["c_mid"].to_numpy(dtype=float)

p_bid = df_slice["p_bid"].to_numpy(dtype=float)

p_ask = df_slice["p_ask"].to_numpy(dtype=float)

p_mid = df_slice["p_mid"].to_numpy(dtype=float)

w = 1.0 / np.clip((c_ask - c_bid) + (p_ask - p_bid), 1e-6, None)

f_low = strike + (c_bid - p_ask) / df_tau

f_high = strike + (c_ask - p_bid) / df_tau

f_mid = strike + (c_mid - p_mid) / df_tau

prelim = weighted_median(f_mid, w)

lm = np.log(np.clip(strike, 1e-12, None) / np.clip(prelim, 1e-12, None))

core = np.abs(lm) <= near_band

if core.sum() < 3:

ord_idx = np.argsort(np.abs(lm))

core = np.zeros(len(lm), dtype=bool)

core[ord_idx[: min(6, len(lm))]] = True

f_hat = weighted_median(f_mid[core], w[core])

med = np.nanmedian(f_mid[core])

iqr = np.nanquantile(f_mid[core], 0.75) - np.nanquantile(f_mid[core], 0.25)

dispersion = float(iqr / max(abs(med), 1e-8))

feasibility = float(np.mean((f_hat >= f_low) & (f_hat <= f_high)))

f_low_ref = weighted_median(f_low[core], w[core])

f_high_ref = weighted_median(f_high[core], w[core])

if not np.isfinite(f_low_ref):

f_low_ref = float(np.nanmedian(f_low[core]))

if not np.isfinite(f_high_ref):

f_high_ref = float(np.nanmedian(f_high[core]))

return {

"ok": np.isfinite(f_hat),

"trade_date": trade_date,

"expiry_datetime": pd.Timestamp(df_slice["expiry_datetime"].iloc[0]),

"tau": tau,

"df": df_tau,

"r_short": get_r_short(trade_date),

"f_hat": float(f_hat),

"f_low_ref": float(f_low_ref),

"f_high_ref": float(f_high_ref),

"dispersion": dispersion,

"feasibility": feasibility,

"n_obs": int(len(df_slice)),

"n_core": int(core.sum()),

}

all_dates = np.sort(df_pairs["trade_date"].dropna().unique())

walk_trade_date = pd.Timestamp(all_dates[len(all_dates) // 2]).normalize()

day_slice = df_pairs[df_pairs["trade_date"] == walk_trade_date].copy()

day_slice["dte_days"] = day_slice["tau"] * 365.25

exp_rank = day_slice.groupby("expiry_datetime")["dte_days"].median().reset_index()

exp_rank["target_dist"] = (exp_rank["dte_days"] - 35.0).abs()

walk_expiry = exp_rank.sort_values("target_dist").iloc[0]["expiry_datetime"]

walk = day_slice[day_slice["expiry_datetime"] == walk_expiry].copy()

walk_est = estimate_forward_slice(walk, near_band=0.12)

walk_df = walk_est["df"]

walk_f_hat = walk_est["f_hat"]

walk["f_low"] = walk["strike"] + (walk["c_bid"] - walk["p_ask"]) / walk_df

walk["f_high"] = walk["strike"] + (walk["c_ask"] - walk["p_bid"]) / walk_df

walk["f_mid"] = walk["strike"] + (walk["c_mid"] - walk["p_mid"]) / walk_df

walk["lm_f"] = np.log(walk["strike"] / walk_f_hat)

fig, ax = plt.subplots(figsize=(8.2, 4.4))

ax.scatter(walk["strike"], walk["f_mid"], s=18, alpha=0.65, label="f_mid(k)")

ax.fill_between(walk["strike"], walk["f_low"], walk["f_high"], alpha=0.13, label="feasible interval")

ax.axhline(walk_f_hat, lw=2, label="f_hat")

ax.set_title(f"single-day parity forward extraction ({walk_trade_date.date()}, {pd.Timestamp(walk_expiry).date()})")

ax.set_xlabel("strike")

ax.set_ylabel("forward level")

ax.legend(loc="best")

plt.tight_layout()

plt.show()

walk_prices = walk["c_mid"].to_numpy(dtype=float)

walk_strikes = walk["strike"].to_numpy(dtype=float)

walk_tau = walk["tau"].to_numpy(dtype=float)

walk_df_arr = np.full(len(walk), float(walk_df))

walk_fwd_arr = np.full(len(walk), float(walk_f_hat))

walk_low, walk_high = iv_bounds("call", walk_df_arr, walk_fwd_arr, walk_strikes)

walk_guess = iv_guess_from_price(walk_prices, walk_low, walk_df_arr, walk_fwd_arr, walk_strikes, walk_tau)

walk_tau = np.clip(walk_tau, 1e-12, None)

walk_sqrt_tau = np.sqrt(walk_tau)

walk_log_fk = np.log(np.clip(walk_fwd_arr, 1e-12, None) / np.clip(walk_strikes, 1e-12, None))

_ = iv_array_numba(np.ones(min(len(walk), 1), dtype=np.bool_), walk_prices[:1], walk_low[:1], walk_high[:1], walk_guess[:1], walk_df_arr[:1], walk_fwd_arr[:1], walk_strikes[:1], walk_tau[:1], walk_sqrt_tau[:1], walk_log_fk[:1])

walk_iv, walk_status = iv_array_numba(

np.ones(len(walk), dtype=np.bool_), walk_prices, walk_low,

walk_high, walk_guess, walk_df_arr, walk_fwd_arr,

walk_strikes, walk_tau, walk_sqrt_tau, walk_log_fk)

df_walk_smile = pd.DataFrame({"lm_f": np.log(walk_strikes / walk_f_hat), "iv_mid": walk_iv, "status": walk_status})

df_walk_smile = df_walk_smile[df_walk_smile["status"] == 0].sort_values("lm_f")

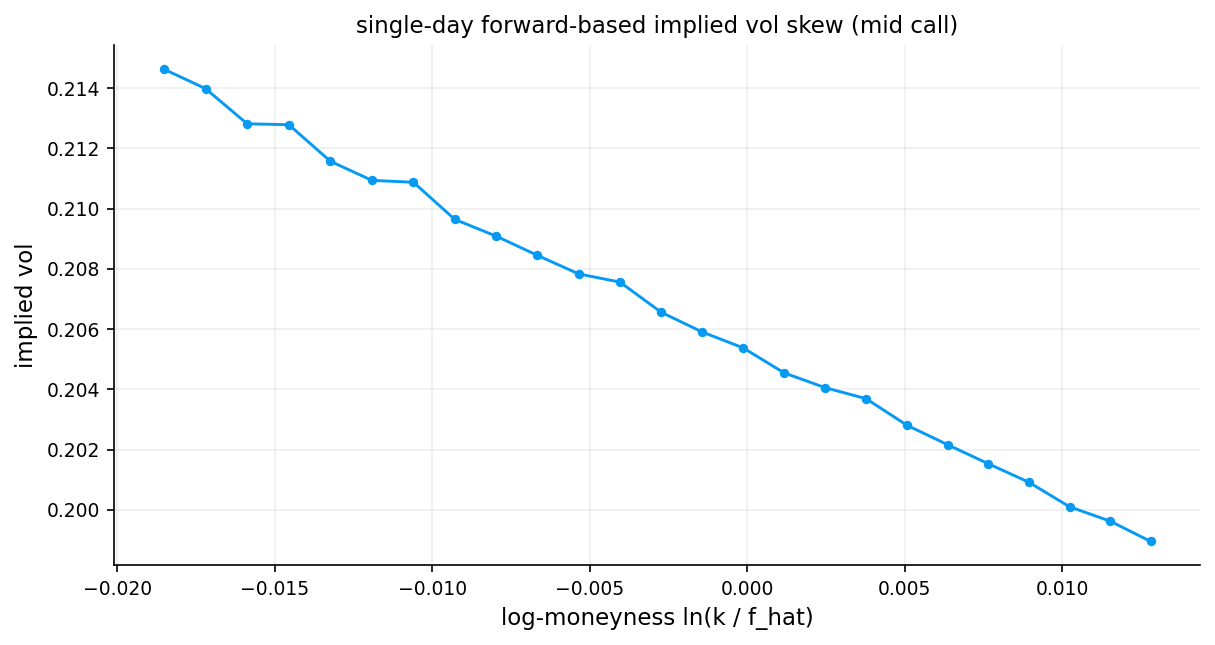

fig, ax = plt.subplots(figsize=(8.2, 4.4))

ax.plot(df_walk_smile["lm_f"], df_walk_smile["iv_mid"], marker="o", lw=1.4, ms=3.5)

ax.set_title("single-day forward-based implied vol skew (mid call)")

ax.set_xlabel("log-moneyness ln(k / f_hat)")

ax.set_ylabel("implied vol")

plt.tight_layout()

plt.show()

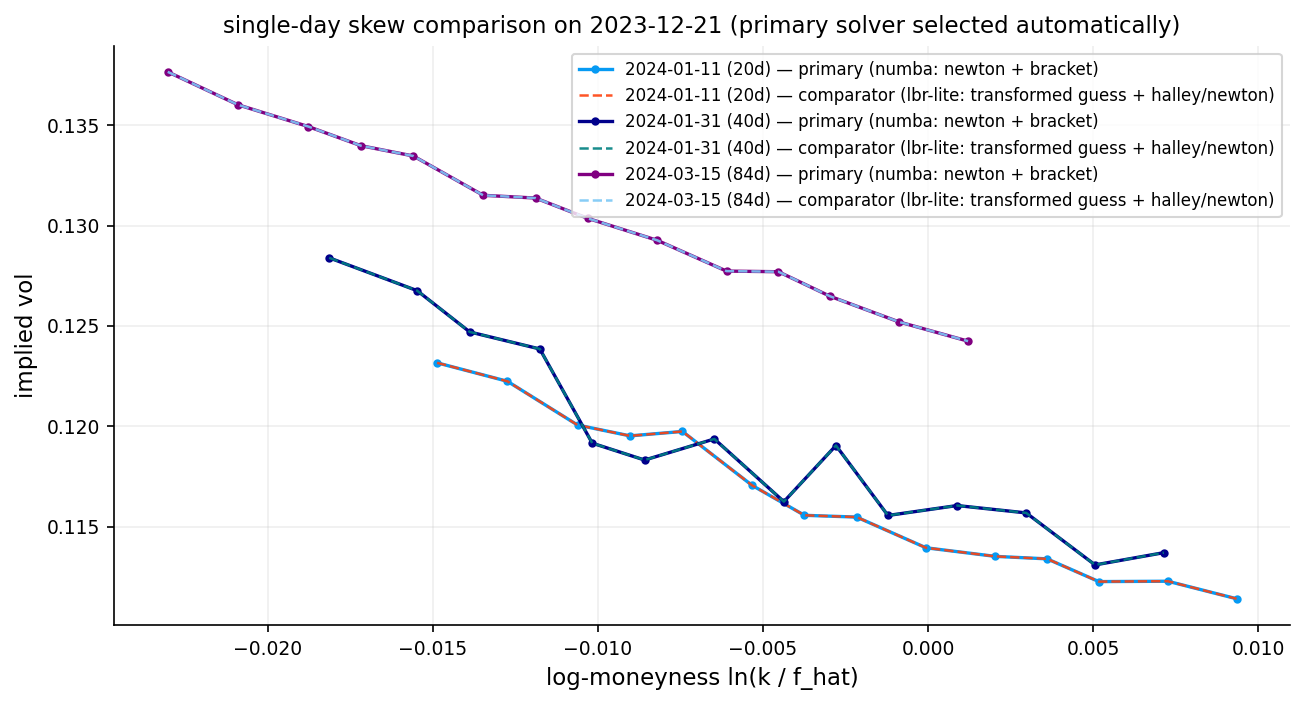

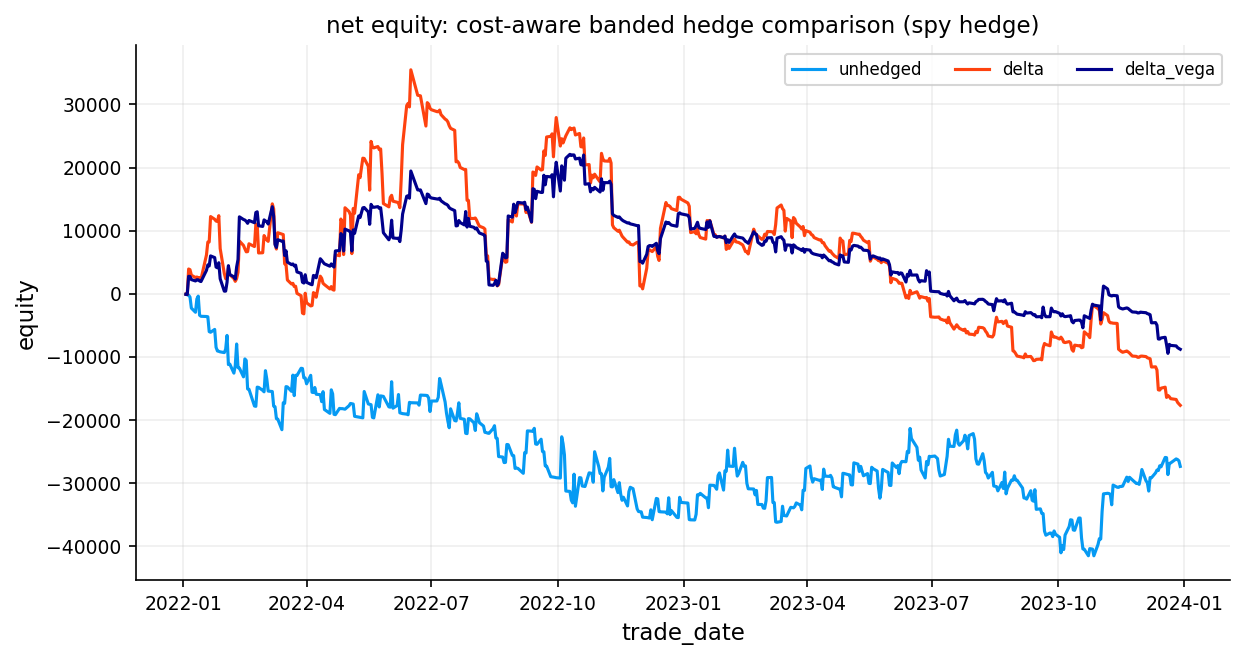

The smile we get is not really a symmetric smile. It is more like the familiar equity-index skew: - higher implied vol for downside strikes - lower implied vol for upside strikes - a shape consistent with crash protection being expensive

That is a normal result for SPX options.

If we were careless and just plugged spot \(S\) and a guessed dividend assumption directly into Black–Scholes, then part of the structure we attribute to volatility would actually be carry mismeasurement. For index options, that is a bad mistake. That’s why using forward price can be the best way for handling dividend for assets that even don’t pay dividends.

Some of the reasons that markets behave like this and create a skew can be:

- Demand for downside protection: Portfolio hedgers structurally buy OTM puts (low strikes) which pushes their prices (and IVs) higher

- Leverage effect: When the underlying falls, realized volatility tends to increase (firms become more leveraged), so options protecting against declines are priced with higher vol

- Jump risk: The market prices in the possibility of sudden large drops (crashes) more heavily than large rallies, fattening the left tail of the risk-neutral distribution

- Risk-neutral skewness: The skew encodes a negatively skewed risk-neutral distribution — the market assigns more probability to large down-moves than a lognormal model would

forward_rows = []

for key, grp in df_pairs.groupby(["trade_date", "expiry_datetime"], sort=True):

est = estimate_forward_slice(grp, near_band=0.12)

if est.get("ok", False):

forward_rows.append(

{

"trade_date": est["trade_date"],

"expiry_datetime": est["expiry_datetime"],

"tau": est["tau"],

"df": est["df"],

"r_short": est["r_short"],

"f_hat": est["f_hat"],

"f_low_ref": est["f_low_ref"],

"f_high_ref": est["f_high_ref"],

"dispersion": est["dispersion"],

"feasibility": est["feasibility"],

"n_obs": est["n_obs"],

"n_core": est["n_core"],

}

)

df_forward = pd.DataFrame(forward_rows)

disp_cut = float(np.nanquantile(df_forward["dispersion"], 0.95)) if len(df_forward) else np.nan

disp_limit = float(max(0.02, disp_cut if np.isfinite(disp_cut) else 0.02))

feas_limit = 0.50

df_forward["forward_quality_ok"] = (df_forward["feasibility"] >= feas_limit) & (df_forward["dispersion"] <= disp_limit)

merge_cols = [

"trade_date",

"expiry_datetime",

"df",

"r_short",

"f_hat",

"f_low_ref",

"f_high_ref",

"dispersion",

"feasibility",

"n_obs",

"n_core",

"forward_quality_ok",

]

df_pairs = df_pairs.merge(df_forward[merge_cols], on=["trade_date", "expiry_datetime"], how="inner")

df_pairs["lm_f"] = np.log(df_pairs["strike"] / df_pairs["f_hat"])

df_pairs["dte_days"] = df_pairs["tau"] * 365.25

df_forward_quality_summary = pd.DataFrame(

[

{"metric": "n_forward_slices", "value": int(len(df_forward))},

{"metric": "dispersion_limit", "value": float(disp_limit)},

{"metric": "feasibility_limit", "value": float(feas_limit)},

{"metric": "share_quality_ok", "value": float(np.nanmean(df_forward["forward_quality_ok"])) if len(df_forward) else np.nan},

]

)

print("df_forward shape:", df_forward.shape)

print("df_pairs with forward shape:", df_pairs.shape)

df_forward_quality_summarydf_forward shape: (11969, 13)

df_pairs with forward shape: (299214, 41)| metric | value | |

|---|---|---|

| 0 | n_forward_slices | 11,969.000000 |

| 1 | dispersion_limit | 0.020000 |

| 2 | feasibility_limit | 0.500000 |

| 3 | share_quality_ok | 0.992898 |

Quality flags

A slice is more trustworthy when: - dispersion is small - feasibility is high - enough strikes survive filtering

Nearly 12,000 unique (trade date, expiry) combinations were processed. 99.3% of slices out of 11,969 passed quality checks and had acceptable amount of feasibility and dispersion.

8) Computing rolling realized volatility:

Starting from the SPX closing prices, we compute log-returns:

\[r_t = \ln\!\left(\frac{S_t}{S_{t-1}}\right)\]

Then, for each lookback window \(w \in \{10, 21, 42, 63, 84, 126\}\) trading days, we compute the annualized realized volatility:

\[\text{RV}_w(t) = \sigma_w(t) \cdot \sqrt{252}\]

where \(\sigma_w(t)\) is the rolling standard deviation of the most recent \(w\) log-returns. This gives us six realized volatility time series, each capturing a different horizon of historical price variability.

We use multiple windows because a 10-day option should be compared to recent short-term realized vol, while a 4-month option should be compared to a longer-horizon estimate. A single fixed window would create a maturity mismatch.