import warnings

from pathlib import Path as path

from datetime import datetime

from statistics import NormalDist as normaldist

import textwrap

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from matplotlib.backends.backend_pdf import PdfPages as pdfpages

from cycler import cycler

from scipy.stats import chi2

from sklearn.linear_model import LinearRegression

from IPython.display import display

import sys

sys.path.append(str(path("..").resolve()))

import quantfinlab.portfolio as pf

warnings.filterwarnings("ignore")

palette = [

"#069af3", "#fe420f", "#00008b", "#800080",

"#008080", "#7bc8f6", "#0072b2", "#04d8b2",

"#cc79a7", "#ff8072", "#9614fa", "#dc143c",

]

plt.rcParams["axes.prop_cycle"] = cycler(color=palette)

plt.rcParams.update({

"figure.dpi": 200,

"savefig.dpi": 300,

"axes.grid": True,

"grid.alpha": 0.20,

"axes.spines.top": False,

"axes.spines.right": False,

"axes.titlesize": 11,

"axes.labelsize": 11,

"xtick.labelsize": 9,

"ytick.labelsize": 9,

"legend.fontsize": 9,

})

ann = 252

rf_annual = 0.04

rf_daily = (1 + rf_annual) ** (1 / ann) - 1

3. risk analysis and CAPM

In this notebook we build a risk-focused report for a small set of “objects” (assets, strategies, or portfolios). The goal is not just to compute numbers but to make the math transparent, so that we understand what each metric means, how it is calculated, and how to interpret it.

In this notebook we produce tables (small, topic-specific) and plots (simple, comparable) for:

- performance and basic distribution shape
- drawdowns and drawdown episodes
- tail risk (VaR / expected shortfall) + backtests
- historical stress windows
- CAPM factor regression
- risk attribution and diversification diagnostics

sign conventions

Many risk measures are easier to read as positive “loss magnitudes”. For example, if the 5% quantile of returns is negative, we report:

- \(\text{VaR}_{5\%} = -q_{0.05}(r)\) (positive number)
- \(\text{ES}_{5\%} = -\mathbb{E}[r \mid r \le q_{0.05}(r)]\) (positive number)

So a bigger VaR/ES means worse tail risk.

Imports and plotting style

1) data and returns

what we need as input

the report needs aligned daily returns for each object:

- a date index \(t = 1, 2, \dots, T\)

- for each object \(j\), a series \(\{r_{j,t}\}\)

If you start from prices \(p_t\), we use simple returns:

\[ r_t = \frac{p_t}{p_{t-1}} - 1 \]

In this notebook we keep everything in simple returns because:

- the NAV compounding is literally \(\prod (1+r)\)
- most risk metrics (VaR/ES on daily returns) are commonly shown in simple-return units
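As a small illustration of the two points above, the sketch below turns a price list into simple returns and compounds them back into a NAV path. It uses plain Python and made-up prices; the helper names `simple_returns` and `nav_from_returns` are hypothetical and are not part of quantfinlab.

```python
def simple_returns(prices):
    """Simple returns r_t = p_t / p_{t-1} - 1 from a list of prices."""
    return [prices[i] / prices[i - 1] - 1.0 for i in range(1, len(prices))]


def nav_from_returns(returns, start=1.0):
    """Compound simple returns into a NAV path: nav_t = nav_{t-1} * (1 + r_t)."""
    nav, path = start, []
    for r in returns:
        nav *= 1.0 + r
        path.append(nav)
    return path


prices = [100.0, 102.0, 99.96, 104.958]  # made-up prices
rets = simple_returns(prices)
nav = nav_from_returns(rets)

# The final NAV equals the total price ratio p_T / p_0,
# because the product of (1 + r_t) telescopes.
print(rets)
print(nav[-1], prices[-1] / prices[0])
```

This telescoping property is the reason simple returns are convenient for NAV-based metrics such as drawdown.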

Load data and compute returns

The data used in this project is the Stooq US (NASDAQ) daily market data set.

df = pd.read_parquet("../data/nasdaq_all_close_volume.parquet")

df["date"] = pd.to_datetime(df["Date"], errors="coerce")

dcol = "date"

df = df.dropna(subset=[dcol]).sort_values(dcol)

close_map, vol_map = {}, {}

for c in df.columns:
    c_str = str(c)
    # skip the date column and anything not named "<ticker>__<field>"
    if c_str.lower() == dcol.lower() or "__" not in c_str:
        continue
    t, f = c_str.rsplit("__", 1)
    f = f.lower()
    if f == "close":
        close_map[t] = c
    elif f == "volume":
        vol_map[t] = c

tickers_all = sorted(set(close_map).intersection(vol_map))

close_prices = df[[close_map[t] for t in tickers_all]].copy()

volumes = df[[vol_map[t] for t in tickers_all]].copy()

close_prices.columns = tickers_all

volumes.columns = tickers_all

close_prices.index = pd.to_datetime(df[dcol].values)

volumes.index = pd.to_datetime(df[dcol].values)

close_prices = close_prices.apply(pd.to_numeric, errors="coerce").replace([np.inf, -np.inf], np.nan)

volumes = volumes.apply(pd.to_numeric, errors="coerce").replace([np.inf, -np.inf], np.nan)

start = pd.Timestamp("2016-01-01")

close_prices = close_prices.loc[close_prices.index >= start]

volumes = volumes.loc[volumes.index >= start]

idx = close_prices.index.intersection(volumes.index)

cols = close_prices.columns.intersection(volumes.columns)

close_prices = close_prices.loc[idx, cols]

volumes = volumes.loc[idx, cols]

returns = pf.prices_to_returns(close_prices)

first_date = pd.concat(
    [close_prices.apply(pd.Series.first_valid_index),
     volumes.apply(pd.Series.first_valid_index)],
    axis=1,
).max(axis=1)

spy = pd.read_csv("../data/spy_yfinance.csv")

# accept either a "Date" or a "date" column in the CSV
dcol_spy = "Date" if "Date" in spy.columns else "date"
spy["date"] = pd.to_datetime(spy[dcol_spy], errors="coerce")
spy = spy.dropna(subset=["date"]).sort_values("date").set_index("date")

if "Adj Close" in spy.columns:
    spy_px = pd.to_numeric(spy["Adj Close"], errors="coerce")
else:
    raise ValueError("spy_yfinance.csv missing 'Adj Close' column")

spy_px = spy_px.loc[spy_px.index >= start]

market_ret = spy_px.pct_change().replace([np.inf, -np.inf], np.nan)

2) rebalancing, universe selection, and strategies

In the previous project we imported the same NASDAQ data, selected the most liquid stocks for each month from 2016 to 2026, implemented MeanVariance, MinVariance and MaxSharpe models with monthly rebalancing, and backtested and compared them under realistic market conditions.

For background, see the previous project (2. Portfolio Optimization with Mean–Variance Models).

rebalancing logic (no look-ahead)

a clean backtest timeline is:

- at the start of day \(t\), if \(t\) is a rebalance date, compute target weights \(w_t\) using information up to \(t-1\)

- apply transaction costs/turnover if needed

- hold weights through the day and apply realized return \(r_{p,t+1}\) next

transaction cost proxy (simple linear model):

\[ \text{tc}_t = c \sum_i |w_{i,t} - w_{i,t-1}| \]

where \(c\) is a per-unit turnover cost.
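The linear cost proxy above can be sketched in a few lines. This is only an illustration of the formula: the helper name `turnover_cost` is hypothetical, and quoting \(c\) in basis points (as the `cost_bps` argument of the backtest suggests) is an assumption about units, not a description of how `pf.backtest` applies costs internally.

```python
def turnover_cost(w_new, w_old, cost_bps=10.0):
    """Linear transaction cost: c * sum_i |w_new_i - w_old_i|.

    cost_bps is the per-unit turnover cost in basis points
    (assumed unit convention for this sketch).
    """
    c = cost_bps / 1e4
    turnover = sum(abs(a - b) for a, b in zip(w_new, w_old))
    return c * turnover


w_old = [0.5, 0.3, 0.2]  # weights before the rebalance (made up)
w_new = [0.4, 0.4, 0.2]  # target weights (made up)

cost = turnover_cost(w_new, w_old, cost_bps=10.0)
print(cost)  # turnover is 0.2, so the cost is about 0.0002 (2 bps of NAV)
```

The cost is subtracted from the portfolio return on the rebalance date, so strategies that trade more pay more.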

strategies used here (high level)

In this notebook we do not repeat the code from the previous notebook; we use the quantfinlab library to build the strategies. For this first implementation we use two of the best strategies from the previous notebook, MV_ewma and MaxSharpe_frontier. We also include two stocks from our dataset, NVIDIA and Apple, to show how results differ across types of objects. For the CAPM analysis we use SPY as our benchmark.

If you later add more objects, the report works as long as:

- each object is a daily return series aligned to the same date index
- object names are consistent (for labeling)

rebal_dates = pf.make_rebalance_dates(returns.index, freq="ME", min_history_days=252)

cache = {}

for dt in rebal_dates:
    tickers, adv = pf.select_liquid_universe(dt, close_prices=close_prices,
                                             volumes=volumes, top_n=100,
                                             liq_lookback=252, min_listing_days=252,
                                             min_obs=252, first_date=first_date)
    if len(tickers) < 2:
        continue
    pos = returns.index.get_loc(dt)
    if isinstance(pos, slice):
        pos = pos.stop - 1
    if pos < 252:
        continue
    # estimation window: the 252 rows strictly before the rebalance date
    window = (returns[tickers].iloc[pos - 252:pos]
              .dropna(axis=0, how="any"))
    if window.shape[0] < 170 or window.shape[1] < 2:
        continue
    tickers = window.columns.tolist()
    cov_ewma = pf.cov_estimate(window, method="ewma", annualization=ann)
    cov_lw = pf.cov_estimate(window, method="ledoitwolf", annualization=ann)
    mu = pf.mu_momentum(window, mode="6-1", rf=rf_annual,
                        cov_for_scaling=cov_lw, target_sharpe=0.80,
                        mu_cap=0.30)
    cache[dt] = {
        "tickers": tickers,
        "mu_excess_ann": np.asarray(mu, dtype=float),
        "cov_ann_map": {"ewma": cov_ewma, "ledoitwolf": cov_lw},
        "window": window,
    }

rebal_dates = pd.DatetimeIndex([d for d in rebal_dates if d in cache])

def mv_weight_fn(dt, state, w_prev):
    return pf.weights_mv(mu_excess_ann=state["mu_excess_ann"],
                         cov_ann=state["cov_ann_map"]["ewma"],
                         w_prev=w_prev, w_max=0.25, long_only=True,
                         turnover_penalty_bps=10, ridge=1e-8)

def maxsharpe_weight_fn(dt, state, w_prev):
    return pf.weights_maxsharpe_frontier_grid(
        mu_excess_ann=state["mu_excess_ann"],
        cov_ann=state["cov_ann_map"]["ledoitwolf"],
        w_prev=w_prev, grid_n=25,
        w_max=0.25, long_only=True,
        turnover_penalty_bps=10, ridge=1e-8)

res_mv = pf.backtest(returns, rebal_dates, cache,

mv_weight_fn, cost_bps=10,

fallback="equal", w_max=0.25,

long_only=True, rf_daily=rf_daily)

res_mx = pf.backtest(returns, rebal_dates,

cache, maxsharpe_weight_fn,

cost_bps=10, fallback="equal",

w_max=0.25, long_only=True, rf_daily=rf_daily)

base_idx = returns.index.intersection(res_mv.net_returns.index).intersection(res_mx.net_returns.index)

nvda_ret = returns["NVDA"].reindex(base_idx).fillna(0.0)

aapl_ret = returns["AAPL"].reindex(base_idx).fillna(0.0)

mv_ret = res_mv.net_returns.reindex(base_idx).fillna(0.0)

mx_ret = res_mx.net_returns.reindex(base_idx).fillna(0.0)

market_ret = market_ret.reindex(base_idx).fillna(0.0)

obj = {

"nvda": nvda_ret,

"aapl": aapl_ret,

"mv_ewma": mv_ret,

"maxsharpe_frontier": mx_ret,

}

obj_colors = {

"nvda": palette[0],

"aapl": palette[1],

"mv_ewma": palette[2],

"maxsharpe_frontier": palette[3],

}

print("analysis objects:", list(obj.keys()))

print("date range:", base_idx.min().date(), "to", base_idx.max().date(), ", n:", len(base_idx))

analysis objects: ['nvda', 'aapl', 'mv_ewma', 'maxsharpe_frontier']
date range: 2017-01-31 to 2026-01-28 , n: 2261

3) core risk metrics

In this section we build tables that summarize each object using only its own return series (as in project 2).

3.2 annualized return

from nav, the total growth factor is \(\text{nav}_T\). to annualize over \(T\) trading days (We assume 252 trading days in one year):

\[ r^{ann} = \text{nav}_T^{252/T} - 1 \]

this assumes the sample growth rate continues at the same pace (a standard convention).

3.3 annualized volatility

daily volatility is the sample standard deviation:

\[ \hat\sigma = \sqrt{\frac{1}{T-1}\sum_{t=1}^T (r_t - \bar r)^2} \]

annualized volatility is:

\[ \hat\sigma^{ann} = \hat\sigma\sqrt{252} \]
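The two annualization formulas above can be checked on a toy series. The sketch below uses only the standard library; the function names and the constant-return example are illustrative assumptions, not code from quantfinlab.

```python
import math
import statistics


def annualized_return(returns, periods=252):
    """Geometric annualization: nav_T ** (periods / T) - 1."""
    nav = 1.0
    for r in returns:
        nav *= 1.0 + r
    return nav ** (periods / len(returns)) - 1.0


def annualized_vol(returns, periods=252):
    """Sample standard deviation (T - 1 denominator) scaled by sqrt(periods)."""
    return statistics.stdev(returns) * math.sqrt(periods)


# One year of a constant 0.1% daily return: zero volatility, and the
# annualized return is simply 1.001 ** 252 - 1.
flat_year = [0.001] * 252
print(annualized_return(flat_year), annualized_vol(flat_year))

# Two days of +1% / -1%: the daily sample std is 0.01 * sqrt(2).
print(annualized_vol([0.01, -0.01]))
```

Note that the square-root-of-time scaling of volatility implicitly assumes daily returns are uncorrelated.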

3.5 sortino ratio (downside-focused)

For Sortino we replace total volatility with downside deviation. We define downside returns relative to a target \(\tau\) (often \(0\) or \(r_f\); the code below uses the daily risk-free rate). Returns above the target are set to \(0\), so only the shortfalls below it contribute:

\[ d_t = \min(0, r_t - \tau) \]

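downside deviation (the code below divides by \(T\) rather than \(T-1\), which is negligible for a sample of this length):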

\[ \sigma_d = \sqrt{\frac{1}{T-1}\sum_{t=1}^T d_t^2} \]

sortino:

\[ \text{sortino} = \frac{\bar r - \tau}{\sigma_d}\sqrt{252} \]

def nav_series(r):
    # compound simple returns into a NAV path starting at 1
    r = pd.Series(r).fillna(0.0)
    return (1 + r).cumprod()

def sortino(r):
    x = pd.Series(r).dropna()
    ex = x - rf_daily
    dn = np.minimum(ex, 0)            # keep only shortfalls below the target
    den = np.sqrt((dn ** 2).mean())   # downside deviation (divides by T)
    return float((ex.mean() / den) * np.sqrt(ann)) if den > 1e-12 else np.nan

perf_rows = []
for name, r in obj.items():
    x = pd.Series(r).dropna()
    nav = nav_series(x)
    ann_return = float(nav.iloc[-1] ** (ann / len(x)) - 1) if len(x) else np.nan
    daily_mean = float(x.mean())
    daily_vol = float(x.std(ddof=1))
    ann_vol = daily_vol * np.sqrt(ann) if daily_vol > 1e-12 else np.nan
    sharpe = ((daily_mean - rf_daily) / daily_vol * np.sqrt(ann)) if daily_vol > 1e-12 else np.nan
    sortino_ratio = sortino(x)
    perf_rows.append({
        "object": name,
        "ann_return": ann_return,
        "ann_vol": ann_vol,
        "sharpe": float(sharpe) if np.isfinite(sharpe) else np.nan,
        "sortino": float(sortino_ratio) if np.isfinite(sortino_ratio) else np.nan,
    })

perf_tbl = pd.DataFrame(perf_rows).set_index("object").sort_index()
display(perf_tbl.round(4))

| object | ann_return | ann_vol | sharpe | sortino |
|---|---|---|---|---|
| aapl | 0.2798 | 0.2967 | 0.8478 | 1.2478 |
| maxsharpe_frontier | 0.2084 | 0.2951 | 0.6565 | 0.9390 |
| mv_ewma | 0.1688 | 0.1717 | 0.7661 | 1.0921 |
| nvda | 0.6074 | 0.5016 | 1.1189 | 1.6765 |

NVIDIA has a much higher annual return than the other objects, but also a much higher annual volatility. Even with that volatility, Apple and NVIDIA still have higher Sharpe and Sortino ratios than the two portfolios. If we care about risk, though, the first risk metric (annual vol) already shows the benefit of diversification for MV_ewma, which has by far the lowest volatility; MaxSharpe_frontier is about as volatile as Apple. In 2016 we could not have known that NVIDIA would grow this much by 2026, and even if we had, we probably would not have tolerated this much volatility and held it until now. A more realistic choice was a portfolio built from the 100 most liquid stocks at the time and updated every month. We now turn to other metrics for comparing the risk of these four objects.

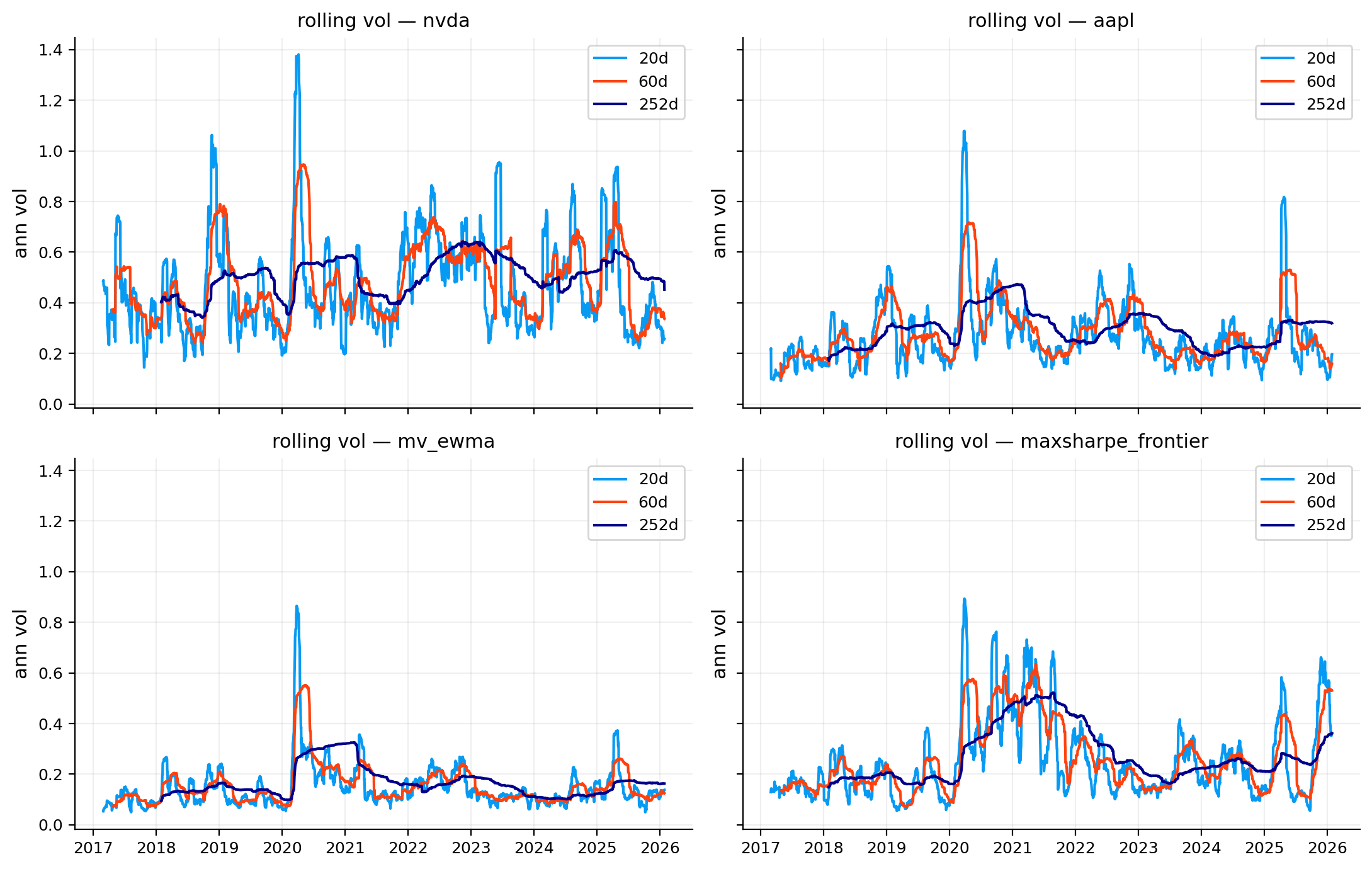

4) rolling volatility

Volatility is not constant. A single full-sample \(\sigma\) hides regime changes. Rolling volatility lets us track volatility over time and see how the risk of an asset differs between periods.

rolling statistics

a rolling volatility over window length \(w\) (for example 60 days) is:

\[ \hat\sigma_{t,w} = \sqrt{\frac{1}{w-1}\sum_{u=t-w+1}^t (r_u - \bar r_{t,w})^2} \]

and annualized rolling vol is:

\[ \hat\sigma_{t,w}^{ann} = \hat\sigma_{t,w}\sqrt{252} \]

We plot multiple windows (e.g., 20/60/252 days) because:

- short windows react quickly and are noisier (good for risk control)
- long windows are smoother (good for long-horizon intuition)

windows = [20, 60, 252]

fig, axes = plt.subplots(2, 2, figsize=(11, 7), sharex=True, sharey=True)

axes = axes.ravel()

for i, (name, r) in enumerate(obj.items()):
    ax = axes[i]
    x = pd.Series(r).dropna()
    for w in windows:
        # rolling sample std over w days, annualized
        rv = x.rolling(w).std(ddof=1) * np.sqrt(ann)
        ax.plot(rv.index, rv.values, lw=1.5, label=f"{w}d")
    ax.set_title(f"rolling vol — {name}")
    ax.set_ylabel("ann vol")
    ax.legend()

plt.tight_layout()

plt.show()

All of our objects were more volatile in 2020 because of the Covid crash. The difference is that the two stocks experienced more volatility during crashes, while the diversified portfolios handled those periods better and were less affected. NVIDIA shows the noisiest and highest volatility over time, and the MeanVariance model with EWMA covariance kept volatility under control better than all the other objects.

4) distribution shape and tail diagnostics

Performance ratios (Sharpe/Sortino) do not tell you what the return distribution looks like. The metrics analyzed so far use only the mean and variance, not the actual shape and behavior of the distribution. Two strategies can have the same Sharpe but very different crash behavior.

4.1 skewness

skewness measures asymmetry. using centered moments:

\[ \text{skew} = \frac{\mathbb{E}[(r-\mu)^3]}{\sigma^3} \]

- negative skew can mean occasional large negative days (crash)

- positive skew can mean occasional large positive days (lottery-like)

excess kurtosis

kurtosis measures tail heaviness relative to normal:

\[ \text{kurt} = \frac{\mathbb{E}[(r-\mu)^4]}{\sigma^4} - 3 \]

the “\(-3\)” makes normal distribution kurtosis equal to \(0\) (“excess kurtosis”).

tail ratio (quantile-based)

a simple, robust tail comparison is:

\[ \text{tail ratio} = \left|\frac{q_{0.95}}{q_{0.05}}\right| \]

where \(q_p\) is the \(p\)-quantile of daily returns. If the left tail is much larger in magnitude than the right tail, the ratio drops below 1. A bigger left tail than right tail means extreme negative returns are larger than extreme positive ones, which can be a sign of risk.

worst-day averages

another tail measure that can be used is:

- worst 1-day return: \(\min_t r_t\)

- average of worst 5 days: mean of the 5 smallest returns

- average of worst 10 days: mean of the 10 smallest returns

These make it easy to see what a bad week looks like without introducing a full scenario model.

Below we move on to more advanced models for this type of risk, starting with VaR.

shape_rows = []
for name, r in obj.items():
    x = pd.Series(r).dropna()
    q05 = float(x.quantile(0.05))
    q95 = float(x.quantile(0.95))
    tail_ratio = float(abs(q95 / q05)) if abs(q05) > 1e-12 else np.nan
    worst_1d = float(x.min()) if len(x) else np.nan
    worst_5d_avg = float(x.nsmallest(5).mean()) if len(x) >= 5 else np.nan
    worst_10d_avg = float(x.nsmallest(10).mean()) if len(x) >= 10 else np.nan
    shape_rows.append({
        "object": name,
        "skew": float(x.skew()) if len(x) else np.nan,
        # pandas returns excess kurtosis so we don't have to subtract 3
        "excess_kurtosis": float(x.kurt()) if len(x) else np.nan,
        "tail_ratio_95_05": tail_ratio,
        "worst_1d": worst_1d,
        "worst_5d_avg": worst_5d_avg,
        "worst_10d_avg": worst_10d_avg,
    })

shape_tbl = pd.DataFrame(shape_rows).set_index("object").sort_index()
display(shape_tbl.round(4))

| object | skew | excess_kurtosis | tail_ratio_95_05 | worst_1d | worst_5d_avg | worst_10d_avg |
|---|---|---|---|---|---|---|
| aapl | 0.1655 | 6.8540 | 0.9931 | -0.1286 | -0.0999 | -0.0850 |
| maxsharpe_frontier | -0.1096 | 6.9413 | 0.9987 | -0.1208 | -0.1070 | -0.0907 |
| mv_ewma | -0.4052 | 12.5224 | 1.0800 | -0.1050 | -0.0767 | -0.0602 |
| nvda | 0.1793 | 5.2051 | 1.0930 | -0.1875 | -0.1605 | -0.1310 |

MV_ewma does not have the best shape by skew and kurtosis, but this is partly because its returns are concentrated close to 0, so a few extreme losses have a large effect on the standardized moments. Based on kurtosis, all of the objects are fat-tailed, but the size of the tail losses differs for each object. Looking at the losses on the worst days, MV_ewma again has the smallest losses and NVIDIA the largest. On these measures MaxSharpe and Apple are very close to each other.

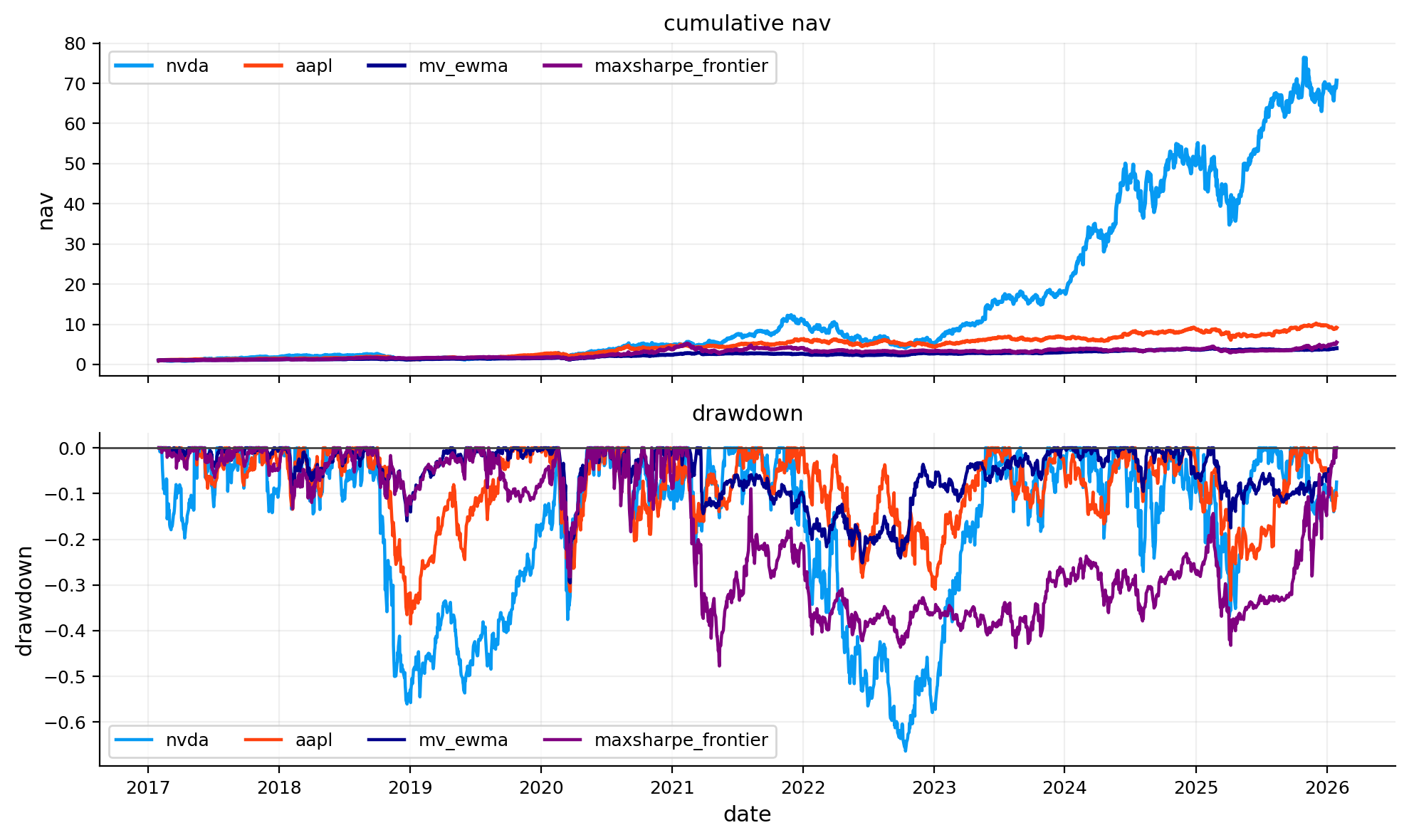

5) cumulative performance and drawdown

5.1 drawdown

Drawdown measures how far we fall below the previous peak and how long it takes to get back to that peak.

we define peak nav as:

\[ \text{peak}_t = \max_{u \le t} \text{nav}_u \]

then drawdown is:

\[ \text{dd}_t = \frac{\text{nav}_t}{\text{peak}_t} - 1 \]

so drawdown is \(0\) at peaks and negative otherwise.

Drawdown analysis answers:

- how deep are losses during stress?
- how long does it take to recover?
- are drawdowns frequent but shallow, or rare but huge?

def dd_series(r):
    # drawdown: NAV relative to its running peak (0 at peaks, negative otherwise)
    nav = nav_series(r)
    return nav / nav.cummax() - 1.0

fig, ax = plt.subplots(2, 1, figsize=(10, 6), sharex=True)

for name, r in obj.items():
    nav = nav_series(r)
    ax[0].plot(nav.index, nav.values, lw=2.0, color=obj_colors[name], label=name)
ax[0].set_title("cumulative nav")
ax[0].set_ylabel("nav")
ax[0].legend(ncol=4)

for name, r in obj.items():
    dd = dd_series(r)
    ax[1].plot(dd.index, dd.values, lw=1.6, color=obj_colors[name], label=name)
ax[1].axhline(0.0, color="#444", lw=1)
ax[1].set_title("drawdown")
ax[1].set_ylabel("drawdown")
ax[1].set_xlabel("date")
ax[1].legend(ncol=4)

plt.tight_layout()

plt.show()

5.2 drawdown episode

The drawdown time series is great visually, but a user also needs events. We want to know exactly what the worst drawdowns were, when they started, and how long they lasted.

An episode starts when drawdown becomes negative and ends when NAV regains its previous peak (drawdown returns to \(0\)).

For each episode \(k\) we record:

- start date \(t_k^{start}\)
- end date \(t_k^{end}\)
- depth: \(\min_{t \in [t_k^{start}, t_k^{end}]} \text{dd}_t\)
- duration: number of trading days in the episode

def drawdown_episodes(r):
    dd = dd_series(r)
    in_dd = False
    start_i = None
    out = []
    for i, v in enumerate(dd.values):
        if v < 0 and not in_dd:
            # first day below the previous peak opens an episode
            in_dd = True
            start_i = i
        if v == 0 and in_dd:
            # back at the peak: close the episode (recovery day excluded)
            end_i = i
            seg = dd.iloc[start_i:end_i]
            out.append((seg.index[0], seg.index[-1], float(seg.min()), int(len(seg))))
            in_dd = False
    if in_dd:
        # episode still open at the end of the sample
        seg = dd.iloc[start_i:]
        out.append((seg.index[0], seg.index[-1], float(seg.min()), int(len(seg))))
    return pd.DataFrame(out, columns=["start", "end", "depth", "duration"])

def avg_recovery_time(r):
    nav = nav_series(r)
    peak = nav.cummax()
    dd = nav / peak - 1
    rec_times = []
    in_dd = False
    t0 = None
    for i, v in enumerate(dd.values):
        if v < 0 and not in_dd:
            in_dd = True
            t0 = i
        if v == 0 and in_dd:
            # only completed (recovered) episodes are counted
            rec_times.append(i - t0)
            in_dd = False
    return float(np.mean(rec_times)) if len(rec_times) else np.nan

dd_rows = []
for name, r in obj.items():
    x = pd.Series(r).dropna()
    dd = dd_series(x)
    ep = drawdown_episodes(x)
    longest_dd_days = int(ep["duration"].max()) if len(ep) else 0
    dd_rows.append({
        "object": name,
        "max_dd": float(dd.min()) if len(dd) else np.nan,
        "longest_dd_days": longest_dd_days,
        "avg_recovery_days": avg_recovery_time(x),
    })

dd_summary_tbl = pd.DataFrame(dd_rows).set_index("object").sort_index()
display(dd_summary_tbl.round(4))

| object | max_dd | longest_dd_days | avg_recovery_days |
|---|---|---|---|
| aapl | -0.3852 | 354 | 21.2234 |
| maxsharpe_frontier | -0.4772 | 1238 | 30.5217 |
| mv_ewma | -0.2954 | 679 | 16.8803 |
| nvda | -0.6634 | 373 | 18.4019 |

episodes_rows = []
for name, r in obj.items():
    # keep the two deepest episodes per object
    ep = drawdown_episodes(r).sort_values("depth")
    ep = ep.head(2).copy()
    ep.insert(0, "object", name)
    episodes_rows.append(ep)

episodes_tbl = pd.concat(episodes_rows, axis=0).reset_index(drop=True)
display(episodes_tbl)

| | object | start | end | depth | duration |
|---|---|---|---|---|---|
| 0 | nvda | 2021-11-30 | 2023-05-24 | -0.663351 | 373 |
| 1 | nvda | 2018-10-02 | 2020-02-13 | -0.560400 | 344 |
| 2 | aapl | 2018-10-04 | 2019-10-09 | -0.385177 | 255 |
| 3 | aapl | 2024-12-27 | 2025-10-17 | -0.333607 | 202 |
| 4 | mv_ewma | 2020-02-21 | 2020-05-13 | -0.295405 | 58 |
| 5 | mv_ewma | 2021-03-23 | 2023-11-30 | -0.251735 | 679 |
| 6 | maxsharpe_frontier | 2021-02-16 | 2026-01-20 | -0.477211 | 1238 |
| 7 | maxsharpe_frontier | 2020-02-20 | 2020-05-08 | -0.282402 | 56 |

From the plot, NVIDIA has the deepest drawdown. It peaked in late 2021, fell about 66% during 2022, and only regained that peak in May 2023. This is what makes holding NVIDIA hard: you would have earned a lot from it only if you had held through the 56% and 66% drawdowns that began in 2018 and 2021. Apple has a shallower maximum drawdown than MaxSharpe, and MV_ewma has the most stable performance and the smallest maximum drawdown. The episode tables give more detail.

MaxSharpe diversification is not as effective at reducing drawdown and the negative effects of crashes such as Covid. However, in periods like 2019, when both stocks had large drawdowns that may reflect a market-wide effect, its drawdown was much smaller.

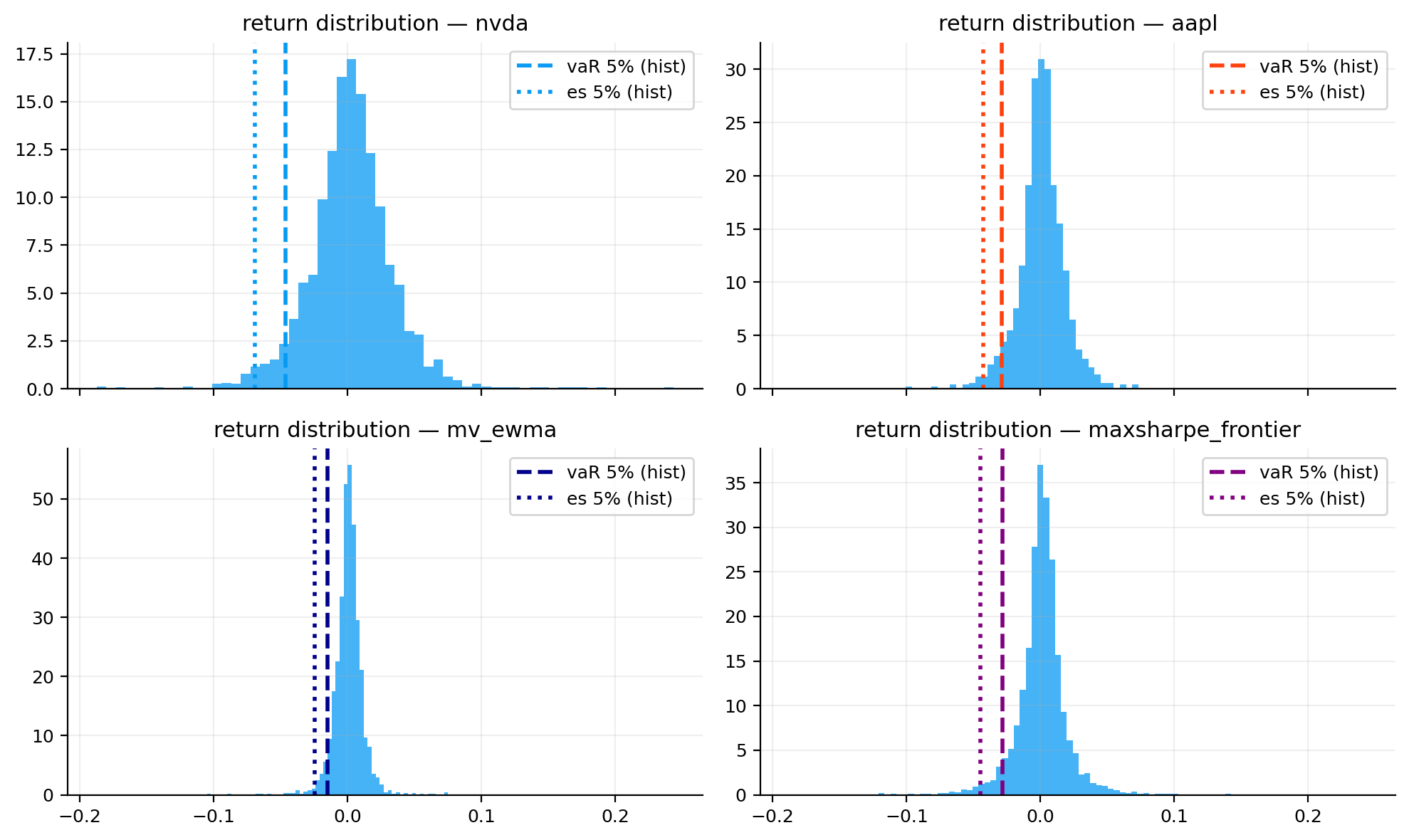

6) Value-at-risk (VaR) and expected shortfall (ES or CVaR)

So far we analyzed the tails and distribution of each object and the average of its worst days. VaR and ES formalize two questions: on bad days, how large can losses get, and how severe are losses once we enter the tail?

Let daily simple returns be \(r_t\) (\(r_t=-0.02\) means a \(-2\%\) return in one day).

We choose a tail probability \(\alpha\) (a common choice is \(\alpha=0.05\), the worst 5% of days).

6.1 left-tail quantile

We define the left-tail quantile \(q_\alpha\) of the return distribution as the threshold such that only an \(\alpha\) fraction of observations fall below it:

\[ P(r \le q_\alpha(r)) = \alpha. \]

Because this is the left tail, \(q_\alpha(r)\) is typically negative (a loss).

Risk reports often present tail risk as a positive loss magnitude for readability.

6.2 value-at-risk (VaR)

VaR is a threshold loss. Using the quantile definition:

\[ \text{VaR}_\alpha = -q_\alpha(r). \]

For example, if \(\alpha=0.05\) and \(\text{VaR}_{0.05}=2.1\%\):

- on 95% of days the loss is no worse than 2.1%
- on the worst 5% of days, the loss is 2.1% or worse
- so we should expect a loss of at least 2.1% on roughly one trading day in twenty. It is rare, but it happens, and knowing its size is an important measure of risk.

The limitation is that VaR tells you where the tail begins, but not how large losses are inside the tail.

6.3 expected shortfall (ES) / conditional VaR

Expected Shortfall (ES) measures tail severity by averaging losses beyond VaR:

\[ \text{ES}_\alpha = -E\!\left[r \mid r \le q_\alpha(r)\right]. \]

If \(\alpha=0.05\) and \(\text{ES}_{0.05}=3.4\%\), then among the worst 5% of days, the average loss is 3.4%.

- VaR is a cutoff (one quantile). It is the mildest loss among the worst 5% of days.
- ES is a severity measure. It is the average loss we should expect on the worst 5% of days.

ES is always greater than or equal to VaR. If ES is much larger than VaR, the distribution has a heavier left tail (more extreme losses after crossing the threshold).
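To make the VaR/ES relationship concrete, here is a minimal sketch on twenty made-up daily returns. It uses a simple order-statistic estimator (take the worst \(k = \lceil \alpha n \rceil\) observations), which is an assumption of this sketch; interpolating quantile functions can give slightly different cutoffs on small samples. The function name `var_es_sorted` is hypothetical.

```python
import math


def var_es_sorted(returns, alpha=0.05):
    """Order-statistic VaR/ES, both reported as positive loss magnitudes."""
    xs = sorted(returns)
    # number of tail observations; the small epsilon guards against
    # floating-point noise in alpha * n
    k = max(1, math.ceil(alpha * len(xs) - 1e-12))
    tail = xs[:k]
    var = -tail[-1]        # mildest loss inside the tail (the cutoff)
    es = -sum(tail) / k    # average loss inside the tail
    return var, es


sample = [-0.050, -0.030, -0.012, -0.008, -0.005, -0.003, -0.001, 0.000,
          0.001, 0.002, 0.003, 0.004, 0.005, 0.006,
          0.008, 0.010, 0.012, 0.015, 0.020, 0.030]  # made-up returns

var10, es10 = var_es_sorted(sample, alpha=0.10)
print(var10, es10)  # the two worst days are -5% and -3%: VaR is 3%, ES is 4%
```

Because ES averages over everything at or beyond the cutoff, it can never be smaller than VaR under this estimator.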

6.4 estimation methods used in this report

We report a comparison table for \(\alpha=0.05\) using three estimators:

1) Cornish–Fisher (CF) adjusted quantiles
2) Historical simulation (HS)
3) Filtered historical simulation (FHS) with EWMA volatility

A single VaR/ES estimate can be fragile, so comparing multiple approaches helps users see a plausible range.

6.4.1 cornish–fisher (CF): non-normal quantile correction using skewness and kurtosis

CF starts from the normal quantile and adjusts it to reflect skewness and fat tails.

(a) standardize returns

From a sample window, estimate:

- \(\mu\) (sample mean) and \(\sigma\) (sample standard deviation)
- standardized values \(x_t = (r_t-\mu)/\sigma\)

Compute standardized skewness \(S\) and excess kurtosis \(K\):

\[ S = E[X^3] \approx \frac{1}{T}\sum_{t=1}^T x_t^3, \qquad K = E[X^4]-3 \approx \frac{1}{T}\sum_{t=1}^T x_t^4 - 3. \]

(b) adjust the normal quantile

We set \(z = z_\alpha = \Phi^{-1}(\alpha)\) as the standard normal \(\alpha\)-quantile.

A commonly used CF expansion is:

\[ z_{\text{CF}} = z +\frac{1}{6}(z^2-1)S +\frac{1}{24}(z^3-3z)K -\frac{1}{36}(2z^3-5z)S^2. \]

Then the CF return quantile is:

\[ q_\alpha^{\text{CF}}(r) = \mu + \sigma z_{\text{CF}}. \]

So the CF VaR is:

\[ \text{VaR}_\alpha^{\text{CF}} = -\left(\mu + \sigma z_{\text{CF}}\right). \]
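The CF adjustment is easy to explore numerically. The sketch below implements the expansion above with the standard library only; the function names and the skew/kurtosis inputs are illustrative assumptions, not values from this report.

```python
from statistics import NormalDist


def cornish_fisher_z(alpha, skew, excess_kurt):
    """CF-adjusted standard quantile from the four-term expansion above."""
    z = NormalDist().inv_cdf(alpha)
    return (z
            + (z ** 2 - 1) * skew / 6
            + (z ** 3 - 3 * z) * excess_kurt / 24
            - (2 * z ** 3 - 5 * z) * skew ** 2 / 36)


def cf_var(mu, sigma, alpha, skew, excess_kurt):
    """CF VaR as a positive loss magnitude: -(mu + sigma * z_CF)."""
    return -(mu + sigma * cornish_fisher_z(alpha, skew, excess_kurt))


z_norm_05 = NormalDist().inv_cdf(0.05)
z_skew_05 = cornish_fisher_z(0.05, skew=-0.5, excess_kurt=0.0)
z_kurt_05 = cornish_fisher_z(0.05, skew=0.0, excess_kurt=3.0)
z_kurt_01 = cornish_fisher_z(0.01, skew=0.0, excess_kurt=3.0)

print(z_norm_05, z_skew_05, z_kurt_05)
print(NormalDist().inv_cdf(0.01), z_kurt_01)
```

With zero skew and zero excess kurtosis the adjusted quantile collapses to the normal one. Negative skew pushes the 5% quantile further left. Excess kurtosis behaves differently at different levels: the term \((z^3-3z)K/24\) changes sign at \(|z|=\sqrt{3}\), so it deepens the 1% quantile but slightly shrinks the 5% one.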

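(c) Cornish–Fisher ES

CF primarily provides a corrected quantile (VaR), so ES needs a further approximation.

One practical option is to compute ES empirically using the CF cutoff: first compute \(q_\alpha^{\text{CF}}(r)\), then average the sample returns at or below that cutoff:

\[ \text{ES}_\alpha^{\text{CF}} \approx -\frac{1}{|\mathcal{T}_\alpha^{\text{CF}}|}\sum_{t\in \mathcal{T}_\alpha^{\text{CF}}} r_t, \qquad \mathcal{T}_\alpha^{\text{CF}}=\{t:r_t\le q_\alpha^{\text{CF}}(r)\}. \]

The code in this notebook uses a simulation variant instead: it draws standard normal values, passes each one through the same CF polynomial, rescales by \(\mu\) and \(\sigma\), and averages the simulated returns at or below \(q_\alpha^{\text{CF}}(r)\).

This model incorporates skewness and kurtosis (non-normality), but the approximation can be unstable if the skew/kurtosis estimates are noisy or the tails are extreme.

- negative skew (\(S<0\)) usually worsens left-tail quantiles
- positive excess kurtosis (\(K>0\)) deepens the quantile only far enough into the tail: the term \(\frac{1}{24}(z^3-3z)K\) is negative only when \(|z|>\sqrt{3}\) (roughly \(\alpha\) below 4%), so at \(\alpha=0.05\) it pulls the CF quantile slightly toward zero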

6.4.2 historical simulation (HS)

HS is the most direct approach: it treats the observed return window as the empirical distribution.

The HS quantile is the empirical quantile

\[ q_\alpha^{\text{HS}}(r) = \text{EmpQuantile}_\alpha(\{r_t\}_{t=1}^T), \]

so

\[ \text{VaR}_\alpha^{\text{HS}} = -q_\alpha^{\text{HS}}(r). \]

And HS ES is:

\[ \text{ES}_\alpha^{\text{HS}} = -E[r \mid r \le q_\alpha^{\text{HS}}(r)] \approx -\frac{1}{|\mathcal{T}_\alpha|}\sum_{t\in \mathcal{T}_\alpha} r_t. \]

This model can be sensitive to the chosen window and to regime changes.

6.4.3 filtered historical simulation (FHS): volatility-adjusted tail estimation

HS assumes the return distribution is stable across time.

In reality, returns show volatility clustering (calm vs turbulent periods).

With FHS we address this by filtering out time-varying volatility before sampling the tail.

EWMA volatility filter

There are many ways to filter. In this project we use the following approach.

We estimate conditional variance using EWMA:

\[ \sigma_t^2 = \lambda \sigma_{t-1}^2 + (1-\lambda)r_{t-1}^2, \]

where \(\lambda\in(0,1)\) is the decay parameter (we use \(\lambda = 0.94\)). In the code, \(r_{t-1}\) is the demeaned return \(r_{t-1}-\mu\), and the recursion is seeded with the sample standard deviation.
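The recursion above can be traced by hand on a toy series. The sketch below is a plain-Python illustration; the function name `ewma_vol`, the seeding rule, and the calm-then-shock series are assumptions made for the example.

```python
import math


def ewma_vol(returns, lam=0.94, init_var=None):
    """EWMA volatility path: sigma_t^2 = lam * sigma_{t-1}^2 + (1 - lam) * r_{t-1}^2."""
    if not returns:
        return []
    if init_var is None:
        # assumption of this sketch: seed with the mean squared return
        init_var = sum(r * r for r in returns) / len(returns)
    var = [init_var]
    for t in range(1, len(returns)):
        var.append(lam * var[t - 1] + (1 - lam) * returns[t - 1] ** 2)
    return [math.sqrt(v) for v in var]


# 50 calm days of 1% moves, one -6% shock, then 5 calm days again
series = [0.01] * 50 + [-0.06] + [0.01] * 5
sig = ewma_vol(series, init_var=0.01 ** 2)

print(sig[50])  # still 1%: the shock on day 50 is not in sigma_50 yet
print(sig[51])  # jumps the day after the shock
print(sig[55])  # decays slowly back toward 1%
```

The one-day lag is the point of the filter: \(\sigma_t\) uses information only up to \(t-1\), so dividing \(r_t\) by \(\sigma_t\) does not look ahead.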

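FHS VaR and expected shortfall

Dividing each demeaned return by its EWMA volatility gives standardized residuals \(\varepsilon_t = (r_t-\mu)/\sigma_t\). We take the tail of \(\varepsilon\) empirically and rescale it by the current volatility:

\[ \text{VaR}_{\alpha,t+1}^{\text{FHS}} = -\left(\mu_{t+1} + \sigma_{t+1} \, q_\alpha(\varepsilon)\right), \]

\[ \text{ES}_{\alpha,t+1}^{\text{FHS}} = -\left(\mu_{t+1} + \sigma_{t+1} \, E[\varepsilon \mid \varepsilon \le q_\alpha(\varepsilon)]\right). \]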

Empirically:

\[ E[\varepsilon \mid \varepsilon \le q_\alpha(\varepsilon)] \approx \frac{1}{|\mathcal{T}_\alpha^\varepsilon|} \sum_{t\in \mathcal{T}_\alpha^\varepsilon}\varepsilon_t. \]

So:

\[ \text{ES}_{\alpha,t+1}^{\text{FHS}} \approx -\left(\mu_{t+1} + \sigma_{t+1} \frac{1}{|\mathcal{T}_\alpha^\varepsilon|} \sum_{t\in \mathcal{T}_\alpha^\varepsilon}\varepsilon_t\right). \]

This model adapts to volatility regimes and behaves better when today’s volatility differs from its historical average, but the result depends on the volatility filter we choose.

def hist_var_es(r, alpha=0.05):
    x = pd.Series(r).dropna()
    q = x.quantile(alpha)
    es = x[x <= q].mean()
    return -float(q), -float(es)

def cf_var_es(r, alpha=0.05, n_sim=70000, seed=7):
    x = pd.Series(r).dropna()
    mu = float(x.mean())
    sd = float(x.std(ddof=1))
    if sd <= 1e-12:
        return np.nan, np.nan
    s = float(x.skew())
    k = float(x.kurt())
    z = normaldist().inv_cdf(alpha)
    # Cornish-Fisher adjusted quantile
    zc = z + (z**2 - 1)*s/6 + (z**3 - 3*z)*k/24 - (2*z**3 - 5*z)*(s**2)/36
    q = mu + sd * zc
    # ES by simulation: push standard normals through the same CF polynomial
    rng = np.random.default_rng(seed)
    zs = rng.standard_normal(n_sim)
    za = zs + (zs**2 - 1)*s/6 + (zs**3 - 3*zs)*k/24 - (2*zs**3 - 5*zs)*(s**2)/36
    rs = mu + sd * za
    es = rs[rs <= q].mean()
    return -float(q), -float(es)

def fhs_var_es(r, alpha=0.05, lam=0.94):
    x = pd.Series(r).dropna().astype(float)
    mu = float(x.mean())
    e = x - mu
    sig = np.zeros(len(e), dtype=float)
    sig[0] = max(float(e.std(ddof=1)), 1e-6)   # seed with the sample std
    for t in range(1, len(e)):
        sig[t] = np.sqrt(lam * sig[t - 1]**2 + (1 - lam) * e.iloc[t - 1]**2)
    # standardized residuals
    z = e.to_numpy() / np.where(sig > 1e-12, sig, np.nan)
    z = z[np.isfinite(z)]
    qz = np.quantile(z, alpha)
    ez = z[z <= qz].mean()
    sn = sig[-1]   # EWMA vol on the last sample day
    return float(-(mu + sn * qz)), float(-(mu + sn * ez))

var_rows = []
for name, r in obj.items():
    x = pd.Series(r).dropna()
    hv, he = hist_var_es(x, 0.05)
    cv, ce = cf_var_es(x, 0.05)
    fv, fe = fhs_var_es(x, 0.05)
    var_rows.append({
        "object": name,
        "hist_var5": hv,
        "hist_es5": he,
        "cf_var5": cv,
        "cf_es5": ce,
        "fhs_var5": fv,
        "fhs_es5": fe,
    })

var_tbl = pd.DataFrame(var_rows).set_index("object").sort_index()
display(var_tbl.round(4))

| object | hist_var5 | hist_es5 | cf_var5 | cf_es5 | fhs_var5 | fhs_es5 |
|---|---|---|---|---|---|---|
| aapl | 0.0287 | 0.0425 | 0.0261 | 0.0547 | 0.0205 | 0.0299 |
| maxsharpe_frontier | 0.0283 | 0.0447 | 0.0276 | 0.0578 | 0.0455 | 0.0679 |
| mv_ewma | 0.0149 | 0.0245 | 0.0156 | 0.0439 | 0.0136 | 0.0207 |
| nvda | 0.0466 | 0.0691 | 0.0446 | 0.0839 | 0.0286 | 0.0409 |

fig, axes = plt.subplots(2, 2, figsize=(10, 6), sharex=True)

axes = axes.ravel()

for i, (name, r) in enumerate(obj.items()):
    ax = axes[i]
    x = pd.Series(r).dropna()
    hv, he = hist_var_es(x, 0.05)
    ax.hist(x.values, bins=60, density=True, alpha=0.75)
    # VaR/ES are positive loss magnitudes, so plot them at -hv and -he
    ax.axvline(-hv, lw=2.0, ls="--", color=obj_colors[name], label="VaR 5% (hist)")
    ax.axvline(-he, lw=2.0, ls=":", color=obj_colors[name], label="ES 5% (hist)")
    ax.set_title(f"return distribution — {name}")
    ax.legend()

plt.tight_layout()

plt.show()

The table shows that the models give different results. If we only consider historical VaR, we might think that Apple is riskier than MaxSharpe, but the other models show otherwise. NVIDIA again has the largest losses under most of the models, and MV_ewma controls risk best.

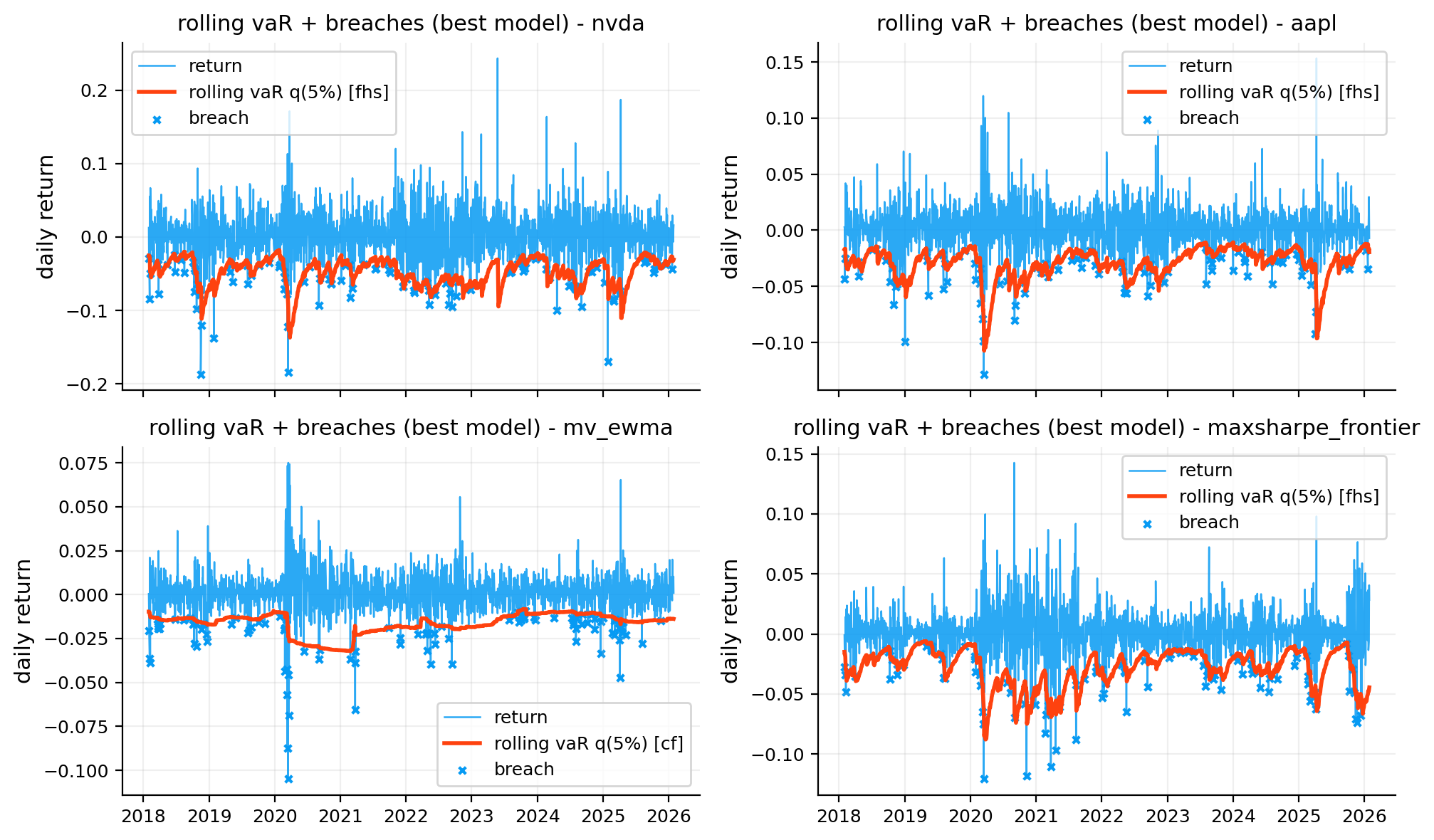

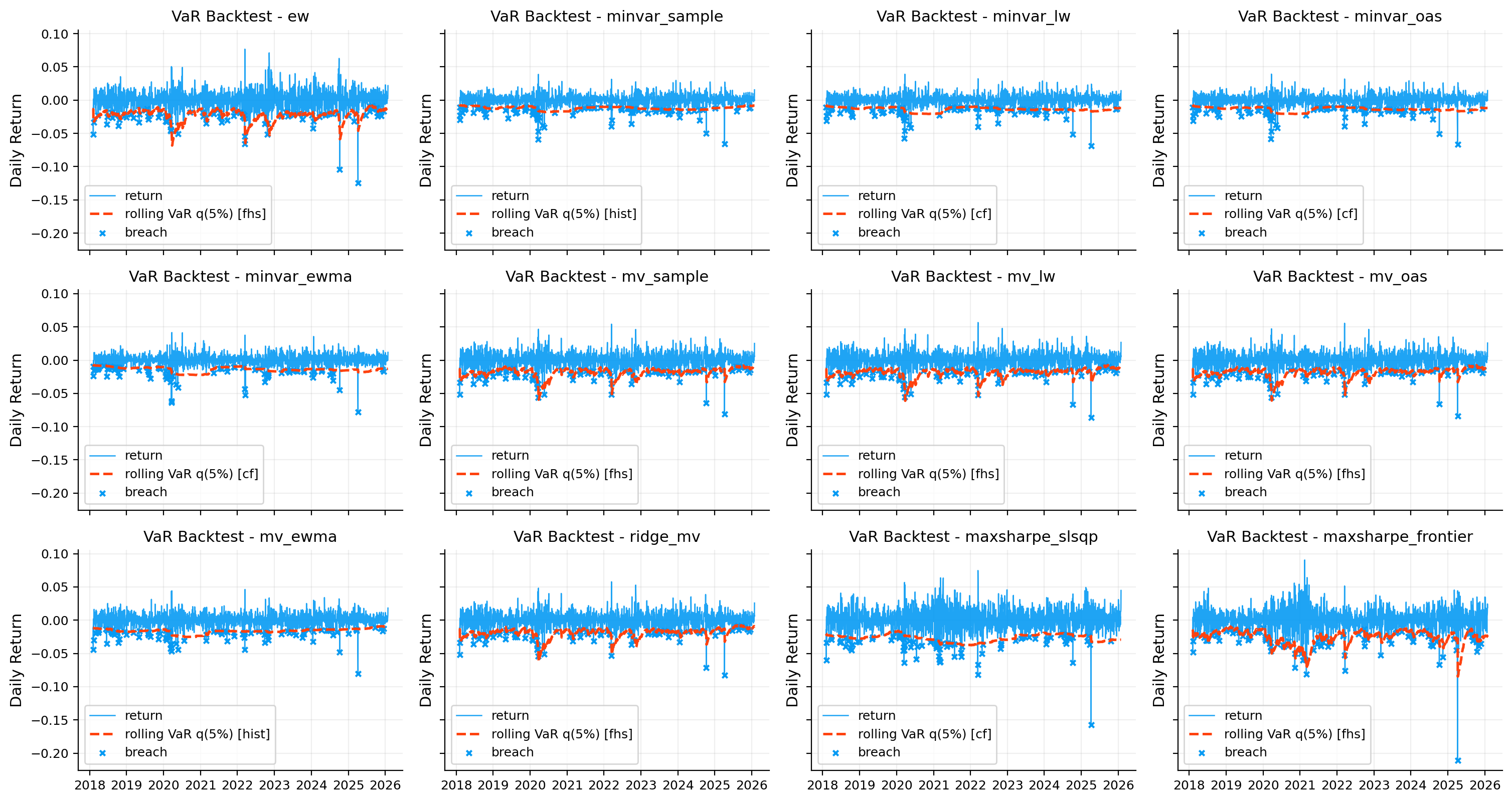

7) VaR backtesting (Model risk)

A VaR model makes a testable promise: for a 5% VaR, losses should exceed the VaR threshold about 5% of the time.

Backtesting checks whether that promise holds in realized data.

7.1 breach indicator (what counts as a VaR failure)

A VaR breach occurs when the realized return is worse than the VaR threshold:

\[ r_t < -\text{VaR}_{\alpha,t}. \]

We define the breach indicator:

\[ b_t = \mathbb{1}\!\left[r_t < -\text{VaR}_{\alpha,t}\right], \]

where \(b_t=1\) means a breach happened, and \(b_t=0\) otherwise.

We summarize breaches using: - breach count: \(x = \sum_{t=1}^n b_t\) - breach rate: \(\hat p = x/n\) - longest breach streak: \(\max\) number of consecutive \(b_t=1\) (a simple clustering diagnostic)

If the VaR model is correct, we expect \(\hat p \approx \alpha\) over a long sample. If breaches happen in streaks, the model may be underreacting to volatility regime changes (clustering risk).
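As a minimal sketch of these definitions, the snippet below computes the breach indicator and the three summaries on a toy sample. The six returns and VaR forecasts are made-up numbers for illustration, not values from the notebook's data.

```python
import numpy as np

# Hypothetical toy data: six daily returns and the VaR forecast for each day,
# with VaR stored as a positive loss magnitude (illustrative numbers only).
returns = np.array([0.010, -0.030, -0.045, 0.002, -0.060, 0.015])
var_5pct = np.array([0.025, 0.025, 0.040, 0.040, 0.040, 0.050])

# b_t = 1 when the realized return falls below the threshold -VaR_t.
breach = returns < -var_5pct

breach_count = int(breach.sum())            # x
breach_rate = breach_count / breach.size    # p-hat = x / n

# Longest run of consecutive breaches (simple clustering diagnostic).
longest = current = 0
for b in breach:
    current = current + 1 if b else 0
    longest = max(longest, current)

print(breach.astype(int), breach_count, breach_rate, longest)
```

With only six days the breach rate says nothing about model quality; the point is only to show how \(b_t\), \(x\), \(\hat p\) and the streak are obtained from the two series.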

7.2 Kupiec test: unconditional coverage (frequency)

The Kupiec (POF) test checks whether breaches occur with the correct long-run frequency.

Notation:

- \(n\) = number of test days
- \(x\) = number of breaches
- \(\hat p = x/n\) = observed breach rate
- \(p = \alpha\) = model-implied breach probability (5%)

Kupiec's statistic compares the log-likelihood of the observed breaches under these two breach probabilities.

The log-likelihood under the null (correct coverage) is:

\[ \ell_0 = (n-x)\log(1-p) + x\log(p). \]

The log-likelihood under the alternative (best-fitting rate) is:

\[ \ell_1 = (n-x)\log(1-\hat p) + x\log(\hat p). \]

Kupiec’s likelihood-ratio statistic is:

\[ \text{LR}_{uc} = -2(\ell_0-\ell_1), \qquad \text{LR}_{uc}\sim \chi^2(1)\ \text{under }H_0. \]

Interpretation:

- a large \(\text{LR}_{uc}\) (small p-value) means the breach frequency is wrong:
  - too many breaches: VaR is too small (underestimates risk)
  - too few breaches: VaR is too conservative
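To make the statistic concrete, here is a small worked sketch. The sample size of 1000 days and the breach counts are hypothetical, and the helper name `kupiec_pof` is introduced only for this example. It uses the fact that the \(\chi^2(1)\) upper-tail probability equals \(\operatorname{erfc}(\sqrt{\text{LR}/2})\), so only the standard library is needed.

```python
import math

def kupiec_pof(n, x, p=0.05):
    """Kupiec LR statistic and p-value for x breaches in n days (assumes 0 < x < n)."""
    p_hat = x / n
    ll0 = (n - x) * math.log(1 - p) + x * math.log(p)          # null: rate = p
    ll1 = (n - x) * math.log(1 - p_hat) + x * math.log(p_hat)  # alternative: rate = p_hat
    lr = max(-2.0 * (ll0 - ll1), 0.0)
    # For one degree of freedom the chi-square survival function is erfc(sqrt(lr / 2)).
    p_value = math.erfc(math.sqrt(lr / 2.0))
    return lr, p_value

lr_ok, pv_ok = kupiec_pof(n=1000, x=50)    # breach rate exactly 5%
lr_bad, pv_bad = kupiec_pof(n=1000, x=80)  # breach rate 8%: too many breaches

print(lr_ok, pv_ok)
print(lr_bad, pv_bad)
```

When the observed rate equals \(p\), the two log-likelihoods coincide and the statistic is zero. With 80 breaches the statistic is far above the 5% critical value of about 3.84, so correct coverage would be rejected.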

7.3 Christoffersen test: independence / clustering

Correct frequency alone is not enough: breaches should also be independent over time.

If breaches cluster, the VaR model may be failing during volatility spikes or regime shifts, so we also test independence.

The Christoffersen independence test treats the breach sequence \(b_t\) as a two-state process (0 = no breach, 1 = breach) and checks whether transitions depend on the previous day.

Count transitions:

- \(n_{00}\): number of times \(b_{t-1}=0 \to b_t=0\)
- \(n_{01}\): number of times \(b_{t-1}=0 \to b_t=1\)
- \(n_{10}\): number of times \(b_{t-1}=1 \to b_t=0\)
- \(n_{11}\): number of times \(b_{t-1}=1 \to b_t=1\)

Estimate transition probabilities:

\[ \pi_{01} = \frac{n_{01}}{n_{00}+n_{01}}, \qquad \pi_{11} = \frac{n_{11}}{n_{10}+n_{11}}. \]

If breaches are independent, the probability of a breach tomorrow does not depend on whether there was a breach today, so:

\[ H_0: \pi_{01} = \pi_{11}. \]

Under independence there is a single breach probability, estimated from the pooled transitions:

\[ \pi = \frac{n_{01}+n_{11}}{n_{00}+n_{01}+n_{10}+n_{11}}. \]

Log-likelihood under independence:

\[ \ell_0 = (n_{00}+n_{10})\log(1-\pi) + (n_{01}+n_{11})\log(\pi). \]

Log-likelihood under dependence (two transition probabilities):

\[ \ell_1 = n_{00}\log(1-\pi_{01}) + n_{01}\log(\pi_{01}) + n_{10}\log(1-\pi_{11}) + n_{11}\log(\pi_{11}). \]

The test statistic is:

\[ \text{LR}_{ind} = -2(\ell_0-\ell_1), \qquad \text{LR}_{ind}\sim \chi^2(1)\ \text{under }H_0. \]

The same statistic can be written with the likelihoods \(L_0 = e^{\ell_0}\) and \(L_1 = e^{\ell_1}\):

- under independence (Bernoulli with constant probability \(\pi\)):

\[ L_0 = (1-\pi)^{n_{00}+n_{10}}\, \pi^{n_{01}+n_{11}} \]

- under first-order dependence (different transition probabilities):

\[ L_1 = (1-\pi_{01})^{n_{00}}\, \pi_{01}^{n_{01}}\, (1-\pi_{11})^{n_{10}}\, \pi_{11}^{n_{11}} \]

so that

\[ \text{LR}_{ind} = -2\ln\left(\frac{L_0}{L_1}\right). \]

A small p-value means breaches are clustered (they depend on the previous day's breach status). Clustering is a common sign that the VaR model is not adapting fast enough to changing volatility.

We report p-values for:

- Kupiec (coverage): is the breach frequency close to \(\alpha\)?
- Christoffersen (independence): are breaches unclustered?

A well-specified VaR model typically has:

- a coverage p-value that is not too small (the frequency is plausible)
- an independence p-value that is not too small (no strong clustering)

Small p-values suggest misspecification: wrong level of risk, volatility dynamics not captured, or regime changes.
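The independence test can be sketched on two toy breach sequences. Both sequences and the helper name `christoffersen_lr` are hypothetical and chosen only to make the mechanics visible. The sketch assumes both states (breach and no breach) are visited as a previous-day state, so no division by zero occurs.

```python
import math
import numpy as np

def christoffersen_lr(breach):
    """LR statistic and p-value of the independence test (sketch, see assumptions above)."""
    b = np.asarray(breach, dtype=int)
    prev, curr = b[:-1], b[1:]
    n00 = int(((prev == 0) & (curr == 0)).sum())
    n01 = int(((prev == 0) & (curr == 1)).sum())
    n10 = int(((prev == 1) & (curr == 0)).sum())
    n11 = int(((prev == 1) & (curr == 1)).sum())

    pi01 = n01 / (n00 + n01)                      # P(breach | no breach yesterday)
    pi11 = n11 / (n10 + n11)                      # P(breach | breach yesterday)
    pi = (n01 + n11) / (n00 + n01 + n10 + n11)    # pooled breach probability

    def term(count, prob):
        # count * log(prob), with the convention 0 * log(0) = 0
        return count * math.log(prob) if count > 0 else 0.0

    ll0 = term(n00 + n10, 1 - pi) + term(n01 + n11, pi)
    ll1 = (term(n00, 1 - pi01) + term(n01, pi01)
           + term(n10, 1 - pi11) + term(n11, pi11))
    lr = max(-2.0 * (ll0 - ll1), 0.0)
    # chi-square(1) survival function written with the complementary error function
    return lr, math.erfc(math.sqrt(lr / 2.0))

# Ten breaches in two tight clusters: a breach is much more likely after a breach.
clustered = [0] * 20 + [1] * 5 + [0] * 20 + [1] * 5
# Toy sequence where a breach is equally likely after either state (pi01 = pi11 = 0.5).
balanced = [0, 1, 1, 0, 0, 1, 1, 0, 0]

lr_clu, pv_clu = christoffersen_lr(clustered)
lr_bal, pv_bal = christoffersen_lr(balanced)
print(lr_clu, pv_clu)
print(lr_bal, pv_bal)
```

In the clustered sequence \(\pi_{11}\) is far larger than \(\pi_{01}\), so the statistic is large and independence is rejected. In the balanced sequence the two transition probabilities are equal and the statistic is zero. The notebook's own implementation below avoids the zero-count issue by adding a small `eps` instead of requiring both states.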

alpha = 0.05
lookback = 252
bt_methods = ["hist", "cf", "fhs"]

def chi2_sf(x, df):
    # upper-tail chi-square probability, used as the p-value of an LR statistic
    return float(chi2.sf(x, df))

def rolling_var_quantile(r, alpha=0.05, lookback=252, method="hist", cf_n_sim=15000, cf_seed=7, fhs_lambda=0.94):
    x = pd.Series(r).dropna().astype(float)
    if len(x) < lookback + 1:
        return pd.Series(dtype=float)
    m = str(method).strip().lower()
    q = pd.Series(np.nan, index=x.index, dtype=float)
    for i in range(lookback, len(x)):
        # the forecast for day i uses only the previous `lookback` days
        w = x.iloc[i - lookback:i]
        if m == "hist":
            v, _ = hist_var_es(w, alpha=alpha)
        elif m == "cf":
            v, _ = cf_var_es(w, alpha=alpha, n_sim=cf_n_sim, seed=cf_seed)
        elif m == "fhs":
            v, _ = fhs_var_es(w, alpha=alpha, lam=fhs_lambda)
        else:
            raise ValueError("method must be one of {'hist', 'cf', 'fhs'}")
        # store the threshold as a return quantile (negative of the positive vaR)
        q.iloc[i] = -float(v) if np.isfinite(v) else np.nan
    return q

def longest_true_streak(mask):
    m = np.asarray(mask, dtype=bool)
    best = 0
    cur = 0
    for v in m:
        if v:
            cur += 1
            best = max(best, cur)
        else:
            cur = 0
    return int(best)

def kupiec_test(breach, alpha=0.05):
    b = np.asarray(breach, dtype=bool)
    n = int(b.size)
    x = int(b.sum())
    if n <= 0:
        return np.nan, np.nan
    p = float(alpha)
    eps = 1e-12
    ph = x / n
    # clamp the observed rate away from 0 and 1 so the logs stay finite
    ph = min(max(ph, eps), 1.0 - eps)
    ll0 = (n - x) * np.log1p(-p) + x * np.log(p)
    ll1 = (n - x) * np.log1p(-ph) + x * np.log(ph)
    lr = float(-2.0 * (ll0 - ll1))
    pv = chi2_sf(lr, df=1)
    return lr, pv

def christoffersen_independence(breach):
    b = np.asarray(breach, dtype=int)
    if b.size < 3:
        return np.nan, np.nan
    # pair each day with the previous day and count the four transitions
    b0 = b[:-1]
    b1 = b[1:]
    n00 = int(((b0 == 0) & (b1 == 0)).sum())
    n01 = int(((b0 == 0) & (b1 == 1)).sum())
    n10 = int(((b0 == 1) & (b1 == 0)).sum())
    n11 = int(((b0 == 1) & (b1 == 1)).sum())
    eps = 1e-12
    pi01 = n01 / (n00 + n01 + eps)
    pi11 = n11 / (n10 + n11 + eps)
    pi = (n01 + n11) / (n00 + n01 + n10 + n11 + eps)
    pi01 = min(max(pi01, eps), 1.0 - eps)
    pi11 = min(max(pi11, eps), 1.0 - eps)
    pi = min(max(pi, eps), 1.0 - eps)
    ll0 = (n00 + n10) * np.log1p(-pi) + (n01 + n11) * np.log(pi)
    ll1 = (
        n00 * np.log1p(-pi01) + n01 * np.log(pi01)
        + n10 * np.log1p(-pi11) + n11 * np.log(pi11)
    )
    lr = float(-2.0 * (ll0 - ll1))
    pv = chi2_sf(lr, df=1)
    return lr, pv

def quantile_loss(ret, q, alpha=0.05):
    # average quantile (pinball) loss of the vaR quantile forecast; lower is better
    z = pd.concat([pd.Series(ret).rename("ret"), pd.Series(q).rename("q")], axis=1).dropna()
    if len(z) == 0:
        return np.nan
    e = z["ret"] - z["q"]
    loss = e * (alpha - (e < 0).astype(float))
    return float(loss.mean())

def breach_stats(r, alpha=0.05, lookback=252, method="hist"):
    x = pd.Series(r).dropna().astype(float)
    q = rolling_var_quantile(x, alpha=alpha, lookback=lookback, method=method)
    z = pd.concat([x.rename("ret"), q.rename("var_q")], axis=1).dropna()
    # breach: realized return below the rolling vaR quantile
    br = z["ret"] < z["var_q"]
    lr_uc, pv_uc = kupiec_test(br, alpha=alpha)
    lr_ind, pv_ind = christoffersen_independence(br)
    # gaps (in observations) between consecutive breaches
    idx = np.flatnonzero(br.to_numpy())
    gaps = np.diff(idx) if idx.size >= 2 else np.array([])
    rate = float(br.mean())
    return {
        "series": z,
        "breach": br,
        "count": int(br.sum()),
        "rate": rate,
        "coverage_error": float(rate - alpha),
        "abs_coverage_error": float(abs(rate - alpha)),
        "longest_streak": longest_true_streak(br.to_numpy()),
        "avg_gap": float(np.mean(gaps)) if gaps.size else np.nan,
        "med_gap": float(np.median(gaps)) if gaps.size else np.nan,
        "kupiec_lr": lr_uc,
        "kupiec_p": pv_uc,
        "christ_lr": lr_ind,
        "christ_p": pv_ind,
        "quantile_loss": quantile_loss(z["ret"], z["var_q"], alpha=alpha),
    }

rows = []
stats_map = {}

for name, r in obj.items():
    stats_map[name] = {}
    for m in bt_methods:
        st = breach_stats(r, alpha=alpha, lookback=lookback, method=m)
        stats_map[name][m] = st
        rows.append({
            "object": name,
            "method": m,
            "breach_count": st["count"],
            "breach_rate": st["rate"],
            "coverage_error": st["coverage_error"],
            "abs_coverage_error": st["abs_coverage_error"],
            "longest_breach_streak": st["longest_streak"],
            "avg_gap_days": st["avg_gap"],
            "kupiec_p": st["kupiec_p"],
            "christoffersen_p": st["christ_p"],
            "quantile_loss": st["quantile_loss"],
        })

var_bt_tbl = pd.DataFrame(rows).set_index(["object", "method"]).sort_index()
var_bt_tbl["accuracy_rank"] = np.nan
var_bt_tbl["accuracy_score"] = np.nan
var_bt_tbl["is_best"] = False

# rank the methods within each object: smaller coverage error and quantile loss are better,
# larger Kupiec and Christoffersen p-values are better
for name, g in var_bt_tbl.groupby(level=0, sort=False):
    abs_cov = g["abs_coverage_error"]
    qloss = g["quantile_loss"]
    kup = g["kupiec_p"].fillna(-np.inf)
    chrp = g["christoffersen_p"].fillna(-np.inf)
    r_abs = abs_cov.rank(ascending=True, method="min", na_option="bottom")
    r_ql = qloss.rank(ascending=True, method="min", na_option="bottom")
    r_k = kup.rank(ascending=False, method="min")
    r_c = chrp.rank(ascending=False, method="min")
    rank_sum = (r_abs + r_ql + r_k + r_c).astype(float)
    acc_rank = rank_sum.rank(ascending=True, method="min")
    acc_score = 1.0 / (1.0 + rank_sum)
    var_bt_tbl.loc[g.index, "accuracy_rank"] = acc_rank.to_numpy(dtype=float)
    var_bt_tbl.loc[g.index, "accuracy_score"] = acc_score.to_numpy(dtype=float)
    # ties on rank_sum are broken by the individual criteria, then by method name
    best_idx = pd.DataFrame(
        {
            "rank_sum": rank_sum,
            "abs_cov": abs_cov,
            "qloss": qloss,
            "kupiec": kup,
            "christ": chrp,
            "method_name": [idx[1] for idx in g.index],
        },
        index=g.index,
    ).sort_values(
        by=["rank_sum", "abs_cov", "qloss", "kupiec", "christ", "method_name"],
        ascending=[True, True, True, False, False, True],
    ).index[0]
    var_bt_tbl.loc[best_idx, "is_best"] = True

display(var_bt_tbl.round(4))

best_method_map = {obj_name: method for obj_name, method in var_bt_tbl[var_bt_tbl["is_best"]].index}

breach_map = {}
for name, r in obj.items():
    m = best_method_map.get(name, "hist")
    st = stats_map[name][m]
    st["method"] = m
    breach_map[name] = st

var_bt_tbl_pdf = var_bt_tbl.rename(columns={
    "breach_count": "breaches",
    "breach_rate": "rate",
    "longest_breach_streak": "max_streak",
    "avg_gap_days": "avg_gap_d",
    "kupiec_p": "kupiec_p",
    "christoffersen_p": "christoffersen_p",
})

| object | method | breach_count | breach_rate | coverage_error | abs_coverage_error | longest_breach_streak | avg_gap_days | kupiec_p | christoffersen_p | quantile_loss | accuracy_rank | accuracy_score | is_best |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| aapl | cf | 135 | 0.0672 | 0.0172 | 0.0172 | 4 | 14.9254 | 0.0008 | 0.0002 | 0.0023 | 2.0 | 0.0909 | False |
| aapl | fhs | 105 | 0.0523 | 0.0023 | 0.0023 | 3 | 19.2308 | 0.6437 | 0.0011 | 0.0022 | 1.0 | 0.2000 | True |
| aapl | hist | 124 | 0.0617 | 0.0117 | 0.0117 | 4 | 16.2602 | 0.0198 | 0.0002 | 0.0023 | 2.0 | 0.0909 | False |
| maxsharpe_frontier | cf | 119 | 0.0592 | 0.0092 | 0.0092 | 3 | 16.7712 | 0.0647 | 0.0017 | 0.0023 | 3.0 | 0.0909 | False |
| maxsharpe_frontier | fhs | 107 | 0.0533 | 0.0033 | 0.0033 | 2 | 18.6415 | 0.5068 | 0.0016 | 0.0021 | 1.0 | 0.1667 | True |
| maxsharpe_frontier | hist | 117 | 0.0582 | 0.0082 | 0.0082 | 3 | 17.0603 | 0.0983 | 0.0004 | 0.0023 | 2.0 | 0.1000 | False |
| mv_ewma | cf | 103 | 0.0513 | 0.0013 | 0.0013 | 2 | 19.1961 | 0.7949 | 0.0023 | 0.0014 | 1.0 | 0.1429 | True |
| mv_ewma | fhs | 112 | 0.0557 | 0.0057 | 0.0057 | 2 | 17.6396 | 0.2453 | 0.0001 | 0.0013 | 3.0 | 0.0909 | False |
| mv_ewma | hist | 109 | 0.0543 | 0.0043 | 0.0043 | 3 | 17.4537 | 0.3877 | 0.0002 | 0.0013 | 2.0 | 0.1111 | False |
| nvda | cf | 170 | 0.0846 | 0.0346 | 0.0346 | 3 | 11.8343 | 0.0000 | 0.0009 | 0.0039 | 3.0 | 0.0769 | False |
| nvda | fhs | 116 | 0.0577 | 0.0077 | 0.0077 | 2 | 17.3913 | 0.1199 | 0.2037 | 0.0035 | 1.0 | 0.2000 | True |
| nvda | hist | 126 | 0.0627 | 0.0127 | 0.0127 | 3 | 15.9920 | 0.0117 | 0.0344 | 0.0037 | 2.0 | 0.1111 | False |

fig, axes = plt.subplots(2, 2, figsize=(10, 6), sharex=True, sharey=False)
axes = axes.ravel()

for i, name in enumerate(obj.keys()):
    ax = axes[i]
    z = breach_map[name]["series"]
    br = breach_map[name]["breach"]
    m = breach_map[name].get("method", "hist")
    ax.plot(z.index, z["ret"].values, lw=0.9, alpha=0.85, label="return")
    ax.plot(z.index, z["var_q"].values, lw=2.0, label=f"rolling vaR q(5%) [{m}]")
    ax.scatter(z.index[br], z.loc[br, "ret"].values, s=12, marker="x", label="breach")
    ax.set_title(f"rolling vaR + breaches (best model) - {name}")
    ax.set_ylabel("daily return")
    ax.legend()

plt.tight_layout()
plt.show()

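The historical model is never ranked best. FHS ranks first for three of the four objects (aapl, maxsharpe_frontier, nvda), and CF ranks first for MV_ewma. CF is not uniformly better than historical VaR, though: it ranks last for maxsharpe_frontier and nvda. Note also that the Christoffersen p-value is below 5% for every combination except nvda with FHS, so some breach clustering remains even for the best-ranked models.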

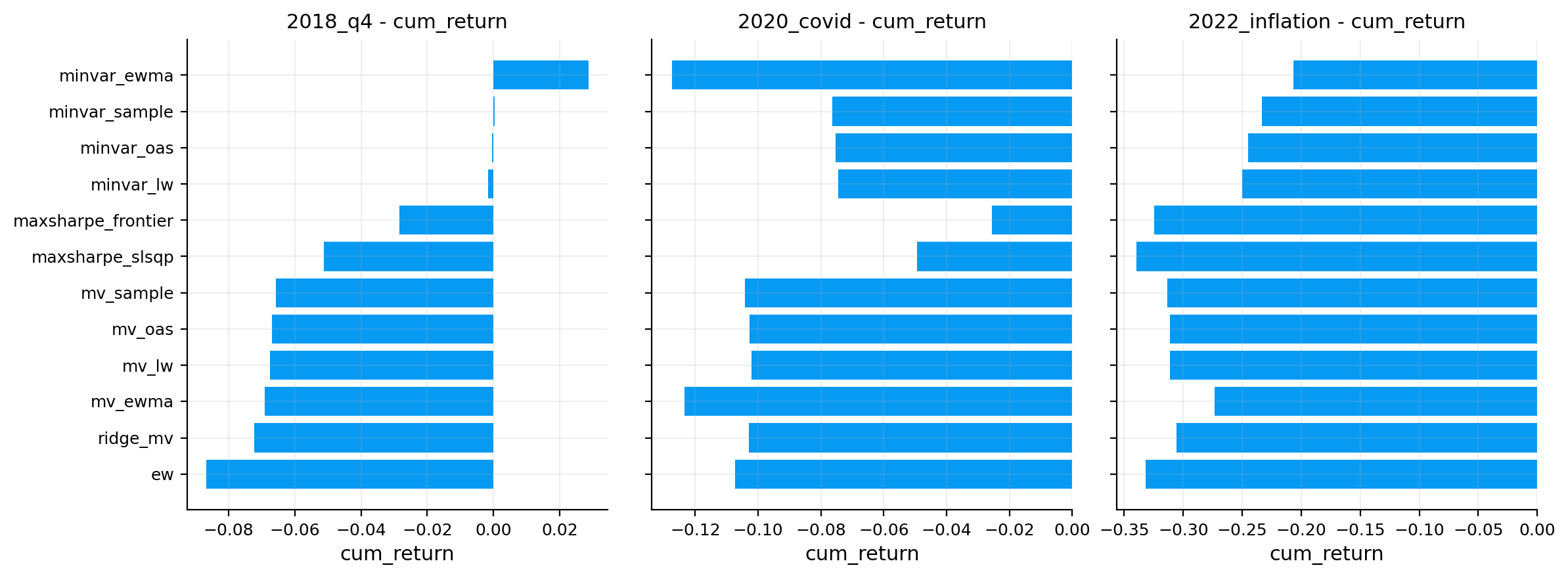

8) historical stress windows (scenario slices)

Instead of hypothetical shocks, we can look at real historical periods such as the Covid crash and ask how each object behaved in that exact window: what the maximum drawdown inside the window was, and how much we would have lost by holding the object through it.

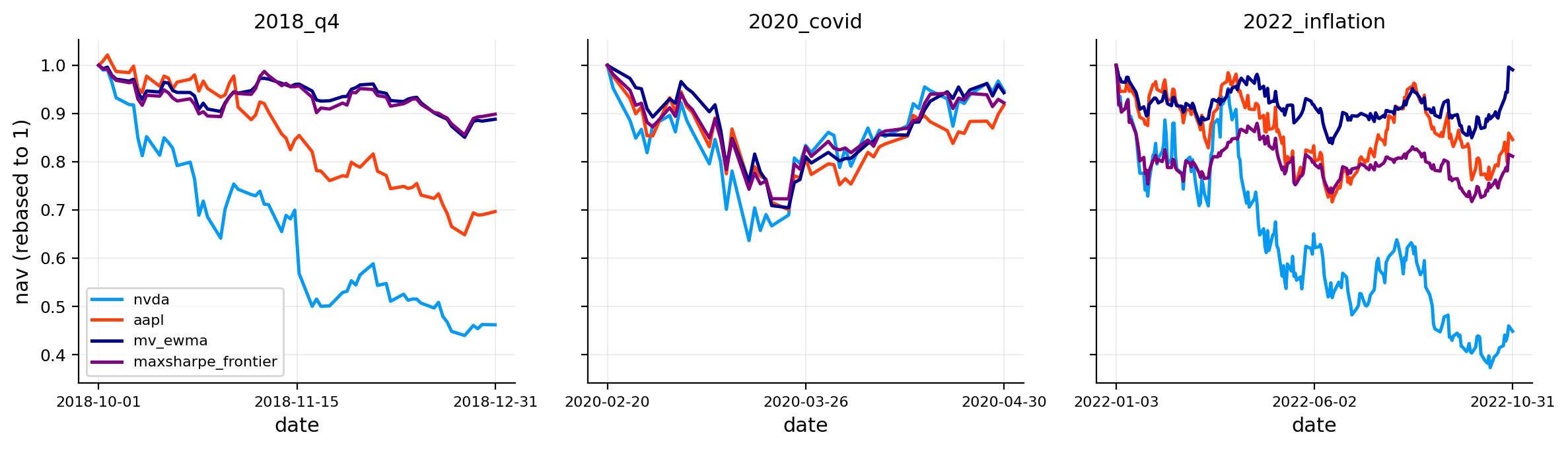

For this project we use three periods:

- the fourth quarter of 2018
- the Covid crash in the first months of 2020
- the 2022 inflation period
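The window slicing can be sketched on a toy series before applying it to the real objects. The ten returns below are made up, and NAV is built here as a cumulative product of \(1 + r_t\); this assumes the notebook's `nav_series` helper compounds returns in the same way, since its definition is not shown in this section.

```python
import pandas as pd

# Hypothetical daily returns over ten business days (illustrative numbers only).
idx = pd.bdate_range("2020-02-20", periods=10)
r = pd.Series([-0.01, -0.03, 0.02, -0.04, -0.05, 0.03, 0.01, -0.02, 0.04, 0.02], index=idx)

# Slice one stress window (label-based slicing on dates includes both end points).
window = r.loc["2020-02-24":"2020-03-02"]

nav = (1 + window).cumprod()               # NAV inside the window
cum_return = nav.iloc[-1] - 1              # total return over the window
max_dd = (nav / nav.cummax() - 1).min()    # deepest drop from a running peak
worst_day = window.min()

print(len(window), round(cum_return, 4), round(max_dd, 4), worst_day)
```

The maximum drawdown can be worse than the cumulative return of the window, because the window may recover part of the loss before it ends.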

stress_windows = {
    "2018_q4": ("2018-10-01", "2018-12-31"),
    "2020_covid": ("2020-02-20", "2020-04-30"),
    "2022_inflation": ("2022-01-03", "2022-10-31"),
}

stress_rows = []
for wname, (s, e) in stress_windows.items():
    s = pd.Timestamp(s)
    e = pd.Timestamp(e)
    for name, r in obj.items():
        x = pd.Series(r).loc[(pd.Series(r).index >= s) & (pd.Series(r).index <= e)].dropna()
        if len(x) == 0:
            continue
        nav = nav_series(x)
        dd = nav / nav.cummax() - 1
        # worst week: weekly sums of daily returns (an approximation to compounding)
        worst_week = x.resample("W-FRI").sum().min() if len(x) > 5 else np.nan
        stress_rows.append({
            "window": wname,
            "object": name,
            "cum_return": float(nav.iloc[-1] - 1),
            "max_dd": float(dd.min()),
            "worst_day": float(x.min()),
            "worst_week": float(worst_week) if np.isfinite(worst_week) else np.nan,
        })

stress_tbl = pd.DataFrame(stress_rows).sort_values(["window", "object"]).reset_index(drop=True)
display(stress_tbl.round(4))

| | window | object | cum_return | max_dd | worst_day | worst_week |
|---|---|---|---|---|---|---|
| 0 | 2018_q4 | aapl | -0.2988 | -0.3651 | -0.0663 | -0.1140 |
| 1 | 2018_q4 | maxsharpe_frontier | -0.1036 | -0.1442 | -0.0375 | -0.0506 |
| 2 | 2018_q4 | mv_ewma | -0.1150 | -0.1495 | -0.0296 | -0.0535 |
| 3 | 2018_q4 | nvda | -0.5245 | -0.5604 | -0.1875 | -0.1988 |
| 4 | 2020_covid | aapl | -0.0921 | -0.2995 | -0.1286 | -0.1803 |
| 5 | 2020_covid | maxsharpe_frontier | -0.0848 | -0.2770 | -0.1208 | -0.1448 |
| 6 | 2020_covid | mv_ewma | -0.0461 | -0.2954 | -0.1050 | -0.1666 |
| 7 | 2020_covid | nvda | -0.0707 | -0.3634 | -0.1846 | -0.1286 |
| 8 | 2022_inflation | aapl | -0.1329 | -0.2834 | -0.0587 | -0.0830 |
| 9 | 2022_inflation | maxsharpe_frontier | -0.1842 | -0.2827 | -0.0649 | -0.0999 |
| 10 | 2022_inflation | mv_ewma | -0.0138 | -0.1623 | -0.0396 | -0.0566 |
| 11 | 2022_inflation | nvda | -0.5408 | -0.6270 | -0.0947 | -0.1709 |

import matplotlib.dates as mdates

fig, axes = plt.subplots(1, len(stress_windows), figsize=(12, 3.5), sharey=True)
if len(stress_windows) == 1:
    axes = [axes]

for ax, (wname, (s, e)) in zip(axes, stress_windows.items()):
    s = pd.Timestamp(s)
    e = pd.Timestamp(e)
    mid = s + (e - s) / 2
    for name, r in obj.items():
        x = pd.Series(r).loc[(pd.Series(r).index >= s) & (pd.Series(r).index <= e)].dropna()
        if len(x) == 0:
            continue
        nav = nav_series(x)
        nav = nav / nav.iloc[0]
        ax.plot(nav.index, nav.values, lw=1.8, color=obj_colors[name], label=name)
    ax.set_title(wname)
    ax.set_xlabel("date")
    ax.grid(True, alpha=0.2)
    # Force exactly 3 x-ticks to avoid overlap: start, mid, end.
    ticks = [s, mid, e]
    ax.set_xticks(ticks)
    ax.xaxis.set_major_formatter(mdates.DateFormatter("%Y-%m-%d"))
    ax.tick_params(axis="x", labelrotation=0, labelsize=8)

axes[0].set_ylabel("nav (rebased to 1)")
axes[0].legend(ncol=1, fontsize=8)
plt.tight_layout()
plt.show()

In these stress periods individual companies are hit directly, so single stocks tend to show deeper drops and more negative returns, and diversification looks like the safer choice. This is also one of the few measures on which MaxSharpe does better than Apple, at least in the 2018 Q4 and 2020 Covid windows; in the 2022 window Apple lost less.

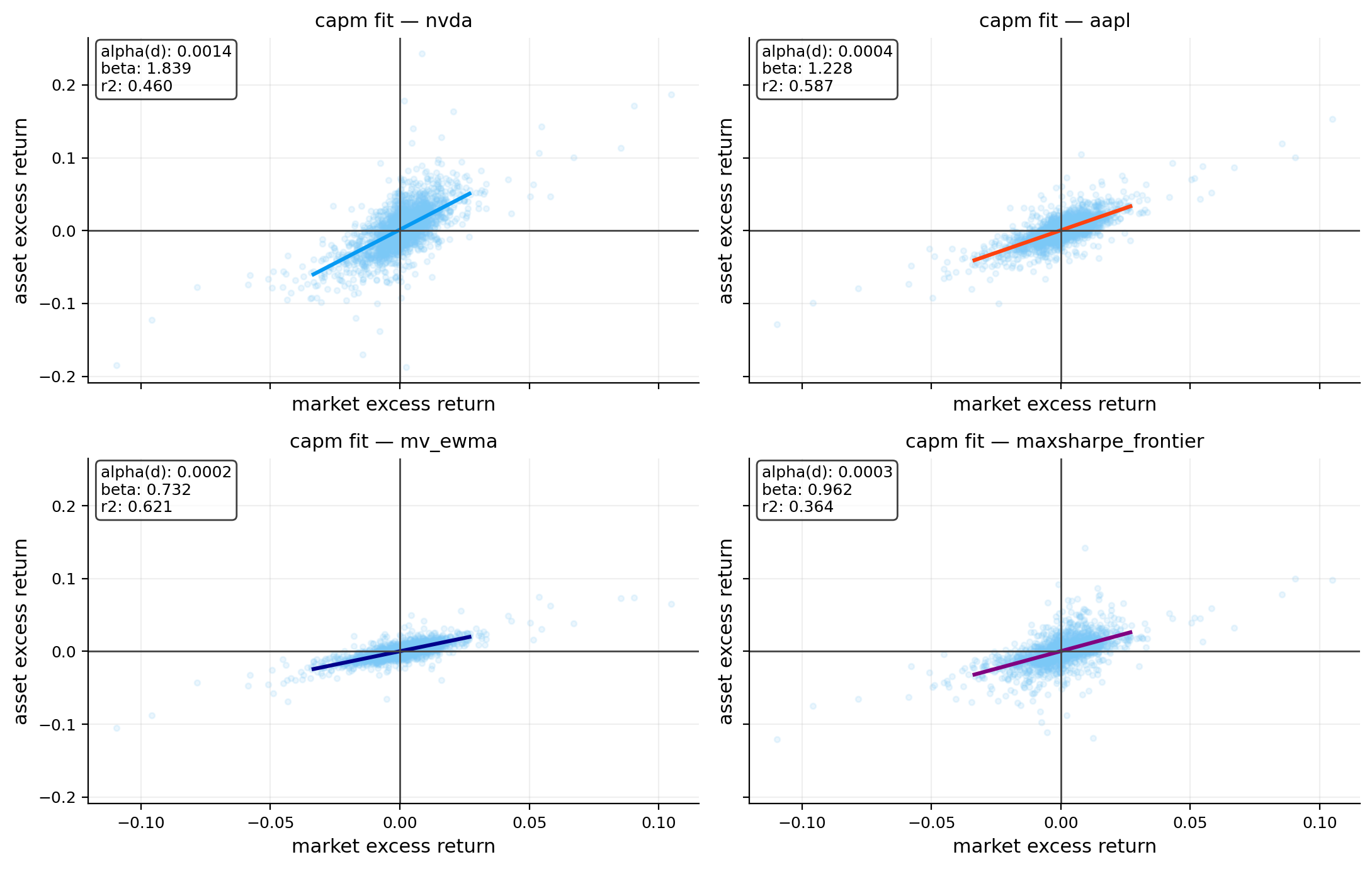

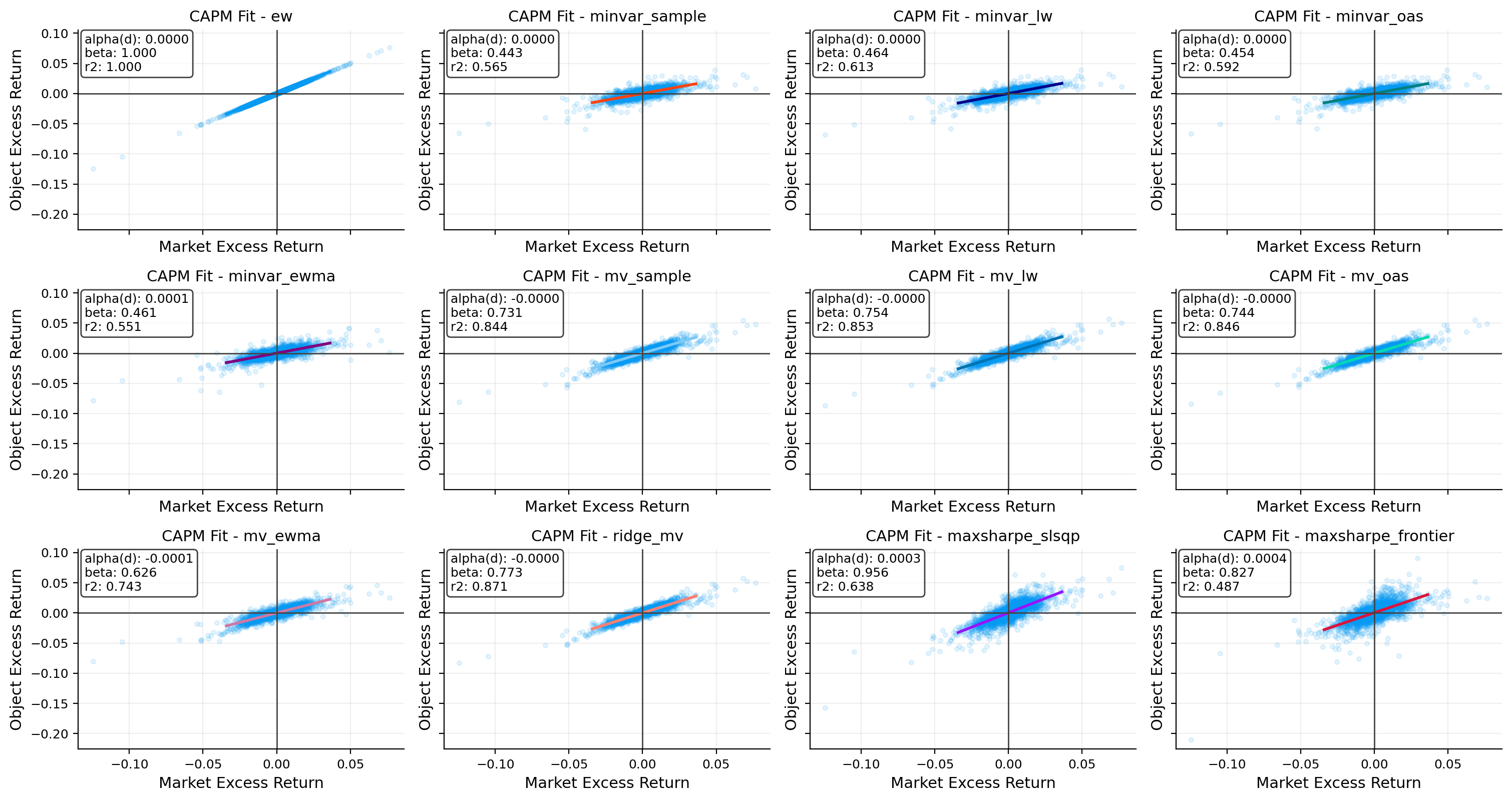

9) CAPM / market factor decomposition

CAPM explains returns using a single market factor. Here we use the SPY data we imported (an S&P 500 ETF) as the market proxy.

The model assumes that an object's return in excess of the risk-free rate is explained by the market's excess return and the object's exposure to market risk.

From this relationship we can read off how much of each object's risk depends on market risk, and whether the object earns any return beyond what its market exposure explains.

9.1 capm model (daily)

using excess returns:

\[ r^{ex}_{j,t} = \alpha_j + \beta_j\, r^{ex}_{m,t} + \varepsilon_{j,t} \]

where:

- \(r^{ex}_{j,t} = r_{j,t} - r_{f,t}\) (excess return of the object)
- \(r^{ex}_{m,t} = r_{m,t} - r_{f,t}\) (excess return of the market, here spy)
- \(\alpha_j\) is "alpha" (average unexplained excess return)
- \(\beta_j\) is the market sensitivity
- \(\varepsilon_{j,t}\) is the residual

estimation (ols via sklearn)

OLS (ordinary least squares) fits the linear regression by choosing the coefficients that minimize the sum of squared residuals between the fitted and the observed values:

\[ \min_{\alpha,\beta} \sum_t \left(r^{ex}_{j,t} - \alpha - \beta r^{ex}_{m,t}\right)^2 \]

the fitted values are:

\[ \hat r^{ex}_{j,t} = \hat\alpha + \hat\beta r^{ex}_{m,t} \]

and \(R^2\) (the `r2` column in the table) is:

\[ R^2 = 1 - \frac{\sum_t (r^{ex}_{j,t} - \hat r^{ex}_{j,t})^2}{\sum_t (r^{ex}_{j,t} - \bar r^{ex}_j)^2} \]
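The regression can be sketched on simulated data where the true coefficients are known. The values of `alpha_true`, `beta_true` and the noise levels are assumptions made only for this illustration, and the fit uses `numpy.linalg.lstsq` rather than sklearn to keep the example small.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 2000
alpha_true, beta_true = 0.0002, 1.2   # assumed values used to simulate the data

m_ex = rng.normal(0.0004, 0.01, n)                                  # market excess returns
y_ex = alpha_true + beta_true * m_ex + rng.normal(0.0, 0.01, n)     # object excess returns

# OLS with an intercept: regress y_ex on [1, m_ex].
X = np.column_stack([np.ones(n), m_ex])
coef = np.linalg.lstsq(X, y_ex, rcond=None)[0]
alpha_hat, beta_hat = float(coef[0]), float(coef[1])

# R^2 from the residual and total sums of squares.
fitted = X @ coef
ss_res = float(((y_ex - fitted) ** 2).sum())
ss_tot = float(((y_ex - y_ex.mean()) ** 2).sum())
r2 = 1.0 - ss_res / ss_tot

# Share of variance explained by the market: beta^2 * var(market) / var(object).
sys_share = beta_hat ** 2 * m_ex.var(ddof=1) / y_ex.var(ddof=1)

print(alpha_hat, beta_hat, r2, sys_share)
```

With a single regressor and an intercept, \(R^2\) equals \(\beta^2\,\sigma_m^2/\sigma_j^2\), which is why a systematic variance share computed this way coincides with `r2`.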

9.2 Active risk vs benchmark (tracking error and information ratio)

We define the active return as performance relative to the benchmark, where \(r_t\) is the object's return and \(m_t\) is the benchmark (market) return:

\[ a_t = r_t - m_t \]

Tracking error (annualized):

\[ TE = \sqrt{ann}\;\sigma(a_t) \]

Information ratio (annualized):

\[ IR = \sqrt{ann}\;\frac{E[a_t]}{\sigma(a_t)} \]

- high TE: we deviate a lot from the benchmark (high active risk)

- high IR: we’re rewarded well per unit of active risk
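A minimal numeric sketch of both formulas, using four made-up daily returns for the object and the benchmark (far too few for a real estimate, but enough to follow the arithmetic):

```python
import numpy as np

ann = 252

# Hypothetical daily returns of the object (r) and the benchmark (m).
r = np.array([0.010, -0.005, 0.007, 0.002])
m = np.array([0.008, -0.004, 0.005, 0.003])

a = r - m                                   # active return a_t
te = np.sqrt(ann) * a.std(ddof=1)           # tracking error (annualized)
ir = np.sqrt(ann) * a.mean() / a.std(ddof=1)  # information ratio (annualized)

print(a, te, ir)
```

Since \(IR \times TE = ann \cdot E[a_t]\), the two numbers together recover the annualized average active return.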

9.3 Up capture and down capture

These measure how the object behaves when the market is up vs down.

Let:

- \(\mathcal{U}=\{t: m_t>0\}\) (up-market days)
- \(\mathcal{D}=\{t: m_t<0\}\) (down-market days)

Then:

\[ \text{UpCapture}=\frac{E[r_t\mid t\in\mathcal{U}]}{E[m_t\mid t\in\mathcal{U}]}, \qquad \text{DownCapture}=\frac{E[r_t\mid t\in\mathcal{D}]}{E[m_t\mid t\in\mathcal{D}]} \]

- UpCapture > 1: stronger upside participation than the benchmark

- DownCapture > 1: worse downside participation than the benchmark

- a capture below 1 means the object moves less than the benchmark in that regime
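The capture ratios can be checked by hand on a toy sample. The four benchmark and object returns below are hypothetical.

```python
import numpy as np

# Hypothetical daily returns: benchmark (m) and object (r).
m = np.array([0.010, -0.020, 0.015, -0.005])
r = np.array([0.012, -0.015, 0.020, -0.004])

up = m > 0      # up-market days
down = m < 0    # down-market days

up_capture = r[up].mean() / m[up].mean()
down_capture = r[down].mean() / m[down].mean()

print(up_capture, down_capture)
```

Here the object gains more than the benchmark on up days (capture above 1) and loses less on down days (capture below 1), which is the favorable combination.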

the regression scatter plot

- each dot is a day (\(x\) = market excess return, \(y\) = object excess return)

- the fitted line slope is \(\beta\)

- the intercept is \(\alpha\)

capm_rows = []

roll_store = {}

m_ex = pd.Series(market_ret, index=base_idx) - rf_daily

def capm_ols(y, x):

xy = pd.concat([pd.Series(y), pd.Series(x)], axis=1).dropna()

yv = xy.iloc[:, 0].to_numpy(float)

xv = xy.iloc[:, 1].to_numpy(float)

xmat = np.column_stack([np.ones(len(xv)), xv])

coef = np.linalg.lstsq(xmat, yv, rcond=None)[0]

alpha = float(coef[0])

beta = float(coef[1])

yhat = xmat @ coef

ssr = float(((yv - yhat) ** 2).sum())

sst = float(((yv - yv.mean()) ** 2).sum())

r2 = 1 - ssr / sst if sst > 1e-12 else np.nan

return alpha, beta, r2

def rolling_beta_corr(r, m, w):

x = pd.concat([pd.Series(r), pd.Series(m)], axis=1).dropna()

rp = x.iloc[:, 0]

rm = x.iloc[:, 1]

beta = rp.rolling(w).cov(rm) / rm.rolling(w).var()

corr = rp.rolling(w).corr(rm)

beta.name = f"beta_{w}"

corr.name = f"corr_{w}"

return beta, corr

for name, r in obj.items():

y_ex = pd.Series(r, index=base_idx) - rf_daily

xy_ex = pd.concat([m_ex, y_ex], axis=1).dropna()

if len(xy_ex) >= 30:

x_reg = xy_ex.iloc[:, 0].to_numpy(float).reshape(-1, 1)

y_reg = xy_ex.iloc[:, 1].to_numpy(float)

reg = LinearRegression().fit(x_reg, y_reg)

alpha = float(reg.intercept_)

beta = float(reg.coef_[0])

r2 = float(reg.score(x_reg, y_reg))

else:

alpha, beta, r2 = np.nan, np.nan, np.nan

alpha_ann = (1 + alpha) ** ann - 1 if alpha > -0.999 else np.nan

active = (pd.Series(r, index=base_idx) - pd.Series(market_ret, index=base_idx)).dropna()

te = float(active.std(ddof=1) * np.sqrt(ann))

ir = float(active.mean() / active.std(ddof=1) * np.sqrt(ann))

m = pd.Series(market_ret, index=base_idx).dropna()

y = pd.Series(r, index=base_idx).dropna()

xy = pd.concat([y, m], axis=1).dropna()

y_aligned = xy.iloc[:, 0].to_numpy(float)

m_aligned = xy.iloc[:, 1].to_numpy(float)

up_m = m_aligned > 0

dn_m = m_aligned < 0

up_cap = (np.mean(y_aligned[up_m]) / np.mean(m_aligned[up_m]))

dn_cap = (np.mean(y_aligned[dn_m]) / np.mean(m_aligned[dn_m]))

var_m = float(np.var(m_ex.dropna().to_numpy(float), ddof=1))

var_y = float(np.var(y_ex.dropna().to_numpy(float), ddof=1))

sys_share = (beta ** 2) * var_m / var_y

capm_rows.append({

"object": name,

"alpha_daily": alpha,

"alpha_ann": alpha_ann,

"beta": beta,

"r2": r2,

"tracking_error": te,

"information_ratio": ir,

"up_capture": up_cap,

"down_capture": dn_cap,

"systematic_var_share": sys_share,

})

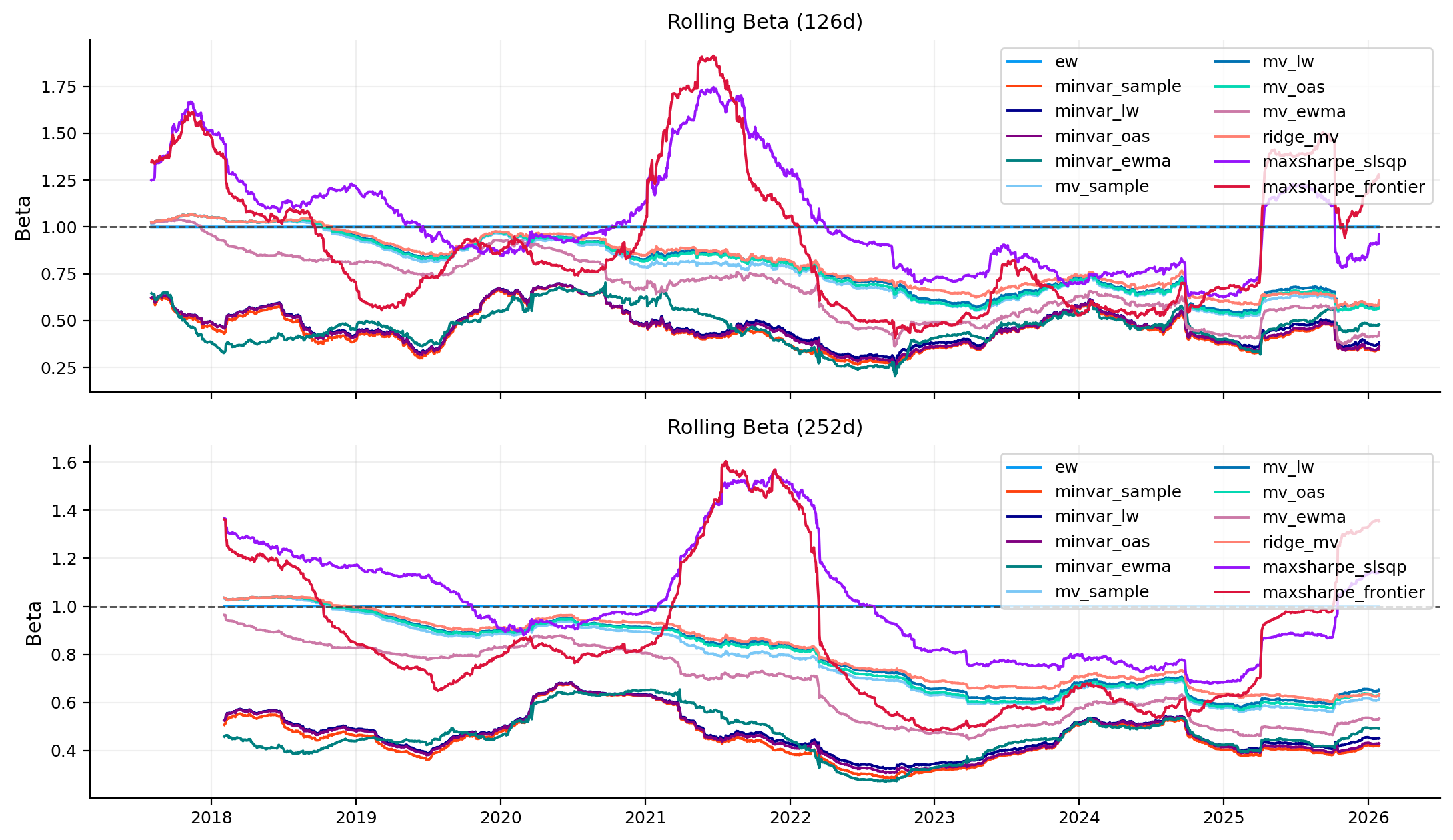

b126, c126 = rolling_beta_corr(y_ex, m_ex, 126)

b252, c252 = rolling_beta_corr(y_ex, m_ex, 252)

roll_store[name] = {"beta_126": b126, "corr_126": c126, "beta_252": b252, "corr_252": c252}

capm_tbl = pd.DataFrame(capm_rows).set_index("object").sort_index()display(capm_tbl.round(4))| alpha_daily | alpha_ann | beta | r2 | tracking_error | information_ratio | up_capture | down_capture | systematic_var_share | |

|---|---|---|---|---|---|---|---|---|---|

| object | |||||||||

| aapl | 0.0004 | 0.1117 | 1.2284 | 0.5866 | 0.1954 | 0.6806 | 1.2905 | 1.1891 | 0.5866 |

| maxsharpe_frontier | 0.0003 | 0.0829 | 0.9622 | 0.3637 | 0.2355 | 0.3193 | 1.0845 | 1.0116 | 0.3637 |

| mv_ewma | 0.0002 | 0.0458 | 0.7316 | 0.6213 | 0.1167 | 0.1114 | 0.7570 | 0.6969 | 0.6213 |

| nvda | 0.0014 | 0.4093 | 1.8388 | 0.4598 | 0.4000 | 1.1068 | 2.1876 | 1.8877 | 0.4598 |

fig, axes = plt.subplots(2, 2, figsize=(11, 7), sharex=True, sharey=True)
axes = axes.ravel()

for i, name in enumerate(obj.keys()):
    ax = axes[i]
    y_ex = (pd.Series(obj[name], index=base_idx) - rf_daily).dropna()
    m_ex = (pd.Series(market_ret, index=base_idx) - rf_daily).dropna()
    xy = pd.concat([m_ex, y_ex], axis=1).dropna()
    x = xy.iloc[:, 0].to_numpy(float)
    y = xy.iloc[:, 1].to_numpy(float)
    alpha = capm_tbl.loc[name, "alpha_daily"]
    beta = capm_tbl.loc[name, "beta"]
    r2 = capm_tbl.loc[name, "r2"]
    ax.scatter(x, y, s=10, alpha=0.15, color=palette[5])
    # draw the fitted line between the 1st and 99th percentile of market returns
    xs = np.linspace(np.percentile(x, 1), np.percentile(x, 99), 200)
    ys = alpha + beta * xs
    ax.plot(xs, ys, lw=2.2, color=obj_colors[name])
    ax.axhline(0.0, color="#444", lw=1)
    ax.axvline(0.0, color="#444", lw=1)
    ax.set_title(f"capm fit - {name}")
    ax.set_xlabel("market excess return")
    ax.set_ylabel("asset excess return")
    ax.text(
        0.02, 0.98,
        f"alpha(d): {alpha:.4f}\n"
        f"beta: {beta:.3f}\n"
        f"r2: {r2:.3f}",
        transform=ax.transAxes,
        va="top",
        fontsize=9,
        bbox=dict(boxstyle="round", facecolor="white", alpha=0.75),
    )

plt.tight_layout()
plt.show()

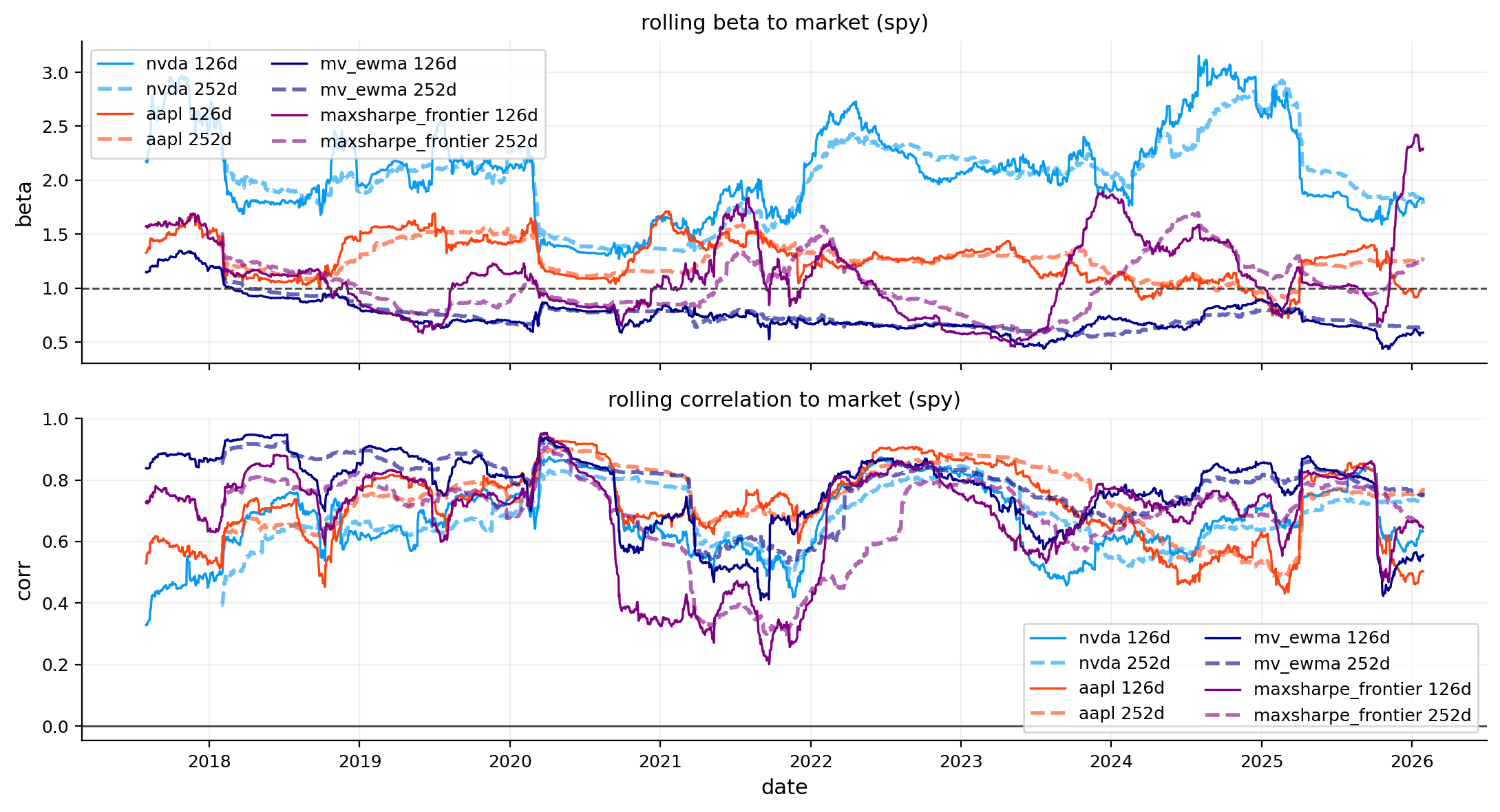

fig, axes = plt.subplots(2, 1, figsize=(11, 6), sharex=True)

for name in obj.keys():
    axes[0].plot(roll_store[name]["beta_126"], lw=1.2, color=obj_colors[name], label=f"{name} 126d")
    axes[0].plot(roll_store[name]["beta_252"], lw=2.0, color=obj_colors[name], alpha=0.6, ls="--", label=f"{name} 252d")
axes[0].axhline(1.0, color="#444", lw=1, ls="--")
axes[0].set_title("rolling beta to market (spy)")
axes[0].set_ylabel("beta")
axes[0].legend(ncol=2)

for name in obj.keys():
    axes[1].plot(roll_store[name]["corr_126"], lw=1.2, color=obj_colors[name], label=f"{name} 126d")
    axes[1].plot(roll_store[name]["corr_252"], lw=2.0, color=obj_colors[name], alpha=0.6, ls="--", label=f"{name} 252d")
axes[1].axhline(0.0, color="#444", lw=1)
axes[1].set_title("rolling correlation to market (spy)")
axes[1].set_ylabel("corr")
axes[1].set_xlabel("date")
axes[1].legend(ncol=2)

plt.tight_layout()
plt.show()

Now we can see the difference between the portfolio strategies and the individual stocks. Both strategies have a beta below 1 and both stocks have a beta above 1, so the stocks are more sensitive to market moves than the diversified portfolios. All four objects have a positive alpha, meaning each earned some return that its market exposure does not explain; this can be read as outperformance relative to the S&P 500 over the sample. The rolling correlations with SPY stay high over time. MaxSharpe's rolling beta rises above 1 in some periods, while MV_ewma's stays below 1 almost all the time, and MV_ewma is the only object with both up and down capture below 1. Even so, MV_ewma still moves closely with the market: it has the highest \(R^2\) (0.62), which is also its systematic variance share.

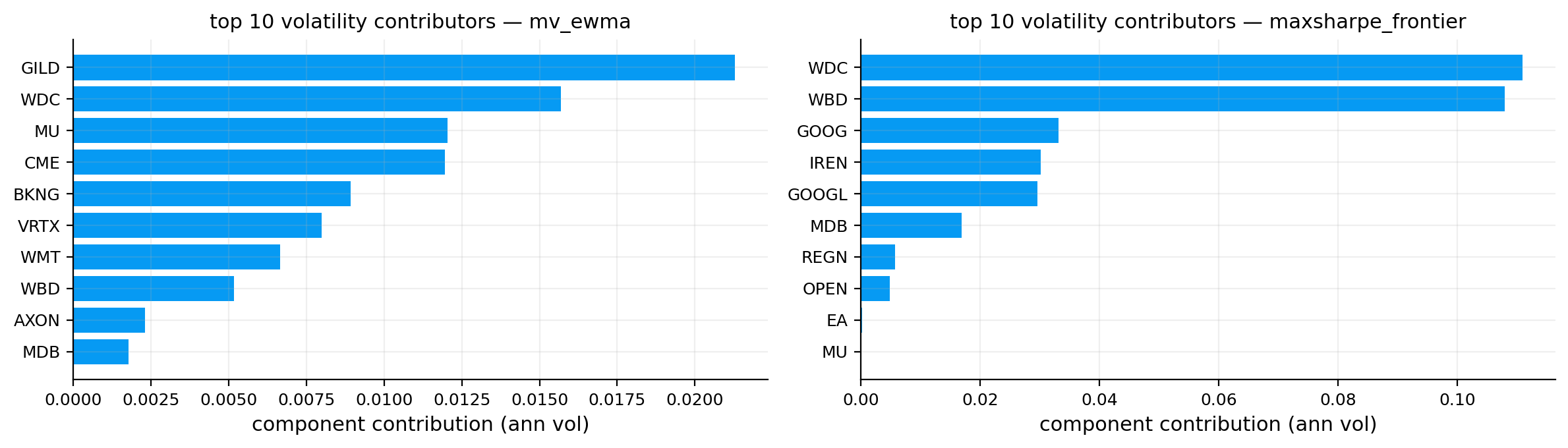

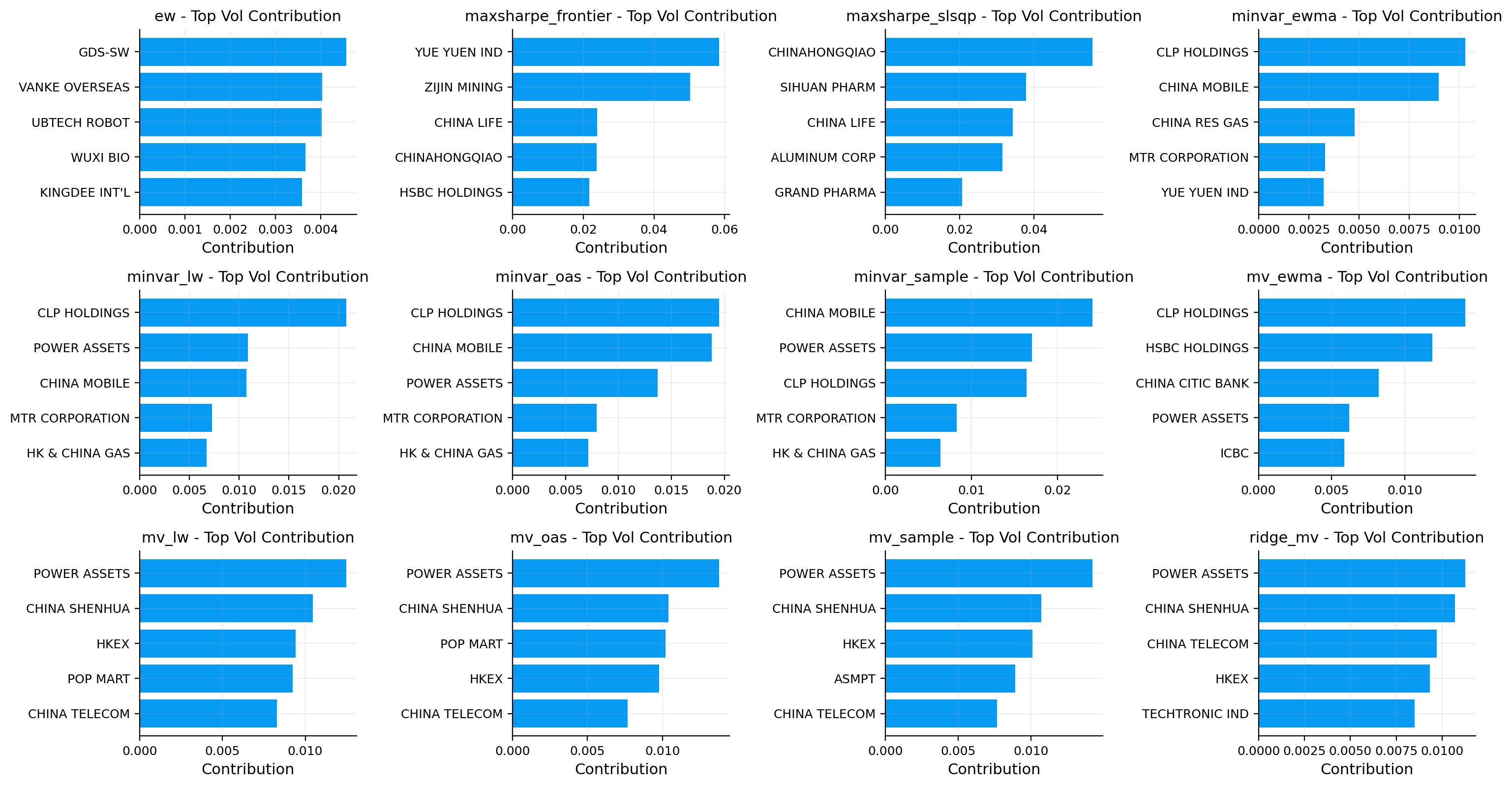

10) risk attribution

We covered this in the previous notebook; here we repeat it and add one more part. For each portfolio, we look for the assets that account for the largest share of portfolio risk.

10.1 volatility attribution (covariance-based)

If the portfolio weights are \(w\) and the covariance matrix is \(\Sigma\), portfolio volatility is:

\[ \sigma_p = \sqrt{w^\top \Sigma w} \]

the marginal contribution to risk (mrc) of asset \(i\) is:

\[ \text{mrc}_i = \frac{(\Sigma w)_i}{\sigma_p} \]

the component contribution is:

\[ \text{rc}_i = w_i\,\text{mrc}_i \]

and the contributions sum to total volatility:

\[ \sum_i \text{rc}_i = \sigma_p \]

this tells us which names drive most of the volatility.
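The decomposition can be verified numerically. The weights and covariance matrix below are hypothetical numbers for a three-asset portfolio, not values from the notebook.

```python
import numpy as np

# Hypothetical three-asset portfolio: weights and annualized covariance matrix.
w = np.array([0.5, 0.3, 0.2])
cov = np.array([
    [0.0400, 0.0060, 0.0040],
    [0.0060, 0.0225, 0.0030],
    [0.0040, 0.0030, 0.0100],
])

sigma_p = np.sqrt(w @ cov @ w)   # portfolio volatility
mrc = (cov @ w) / sigma_p        # marginal contribution to risk
rc = w * mrc                     # component contribution
share = rc / sigma_p             # fraction of total volatility per asset

print(sigma_p, rc, share)
```

The component contributions add up to \(\sigma_p\), so the shares add up to 1. In this toy case the first asset dominates because it has both the largest weight and the largest variance.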

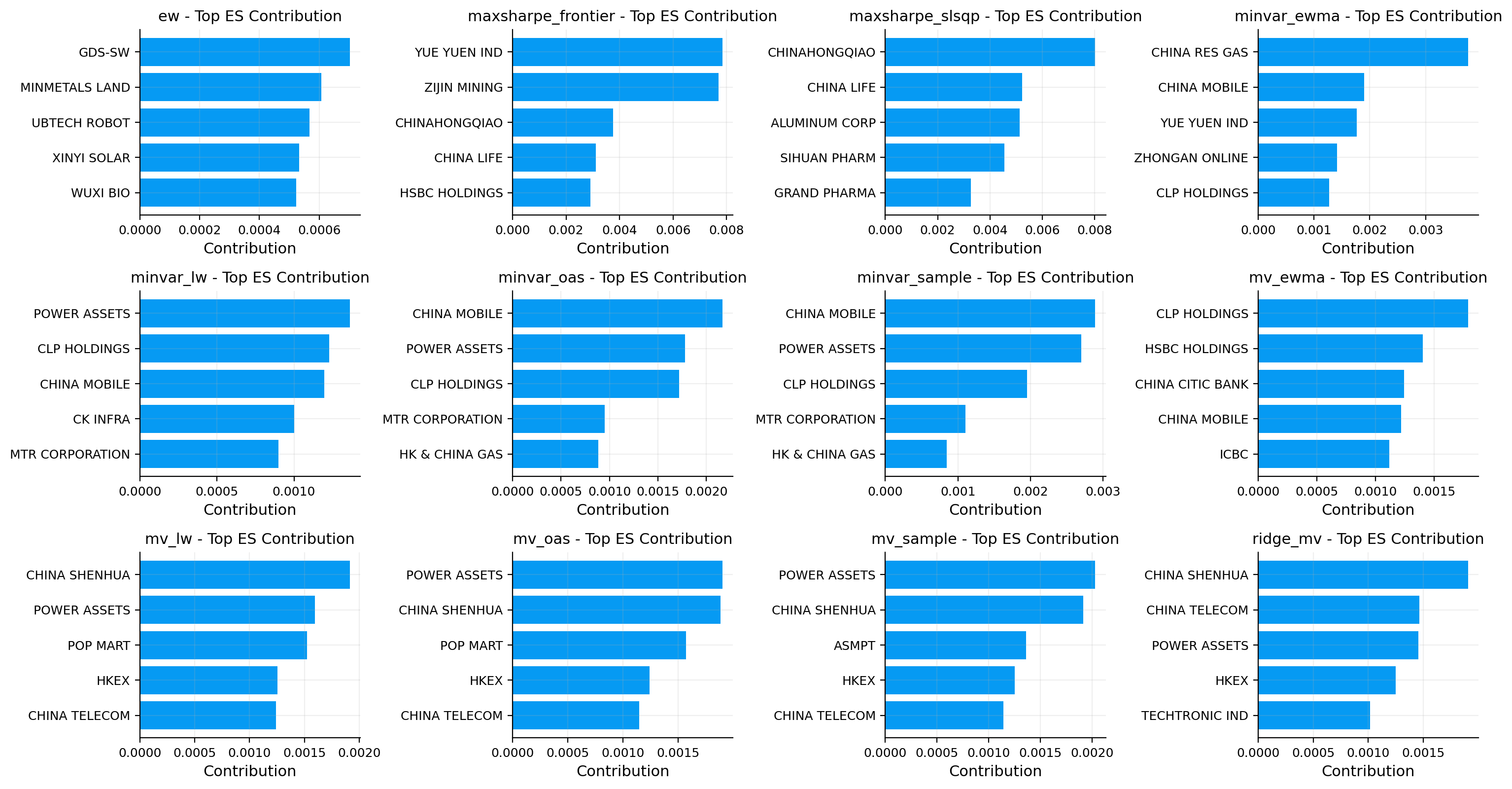

10.2 es attribution

for expected shortfall at level \(\alpha\), identify the set of tail days:

\[ \mathcal{T}_\alpha = \{t : r_{p,t} \le q_\alpha(r_p)\} \]

a simple scenario-based contribution approximation is:

\[ \text{es contrib}_i \approx -\frac{1}{|\mathcal{T}_\alpha|}\sum_{t \in \mathcal{T}_\alpha} w_i r_{i,t} \]

so the largest contributors are the positions that lose the most on the worst portfolio days.
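A short sketch of the scenario-based attribution on simulated returns. The return matrix is randomly generated and the weights are hypothetical; only the mechanics match the formula above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical daily returns for three assets over 1000 days (simulated),
# scaled so that the assets have different volatilities.
x = rng.normal(0.0005, 0.01, size=(1000, 3)) * np.array([1.0, 1.5, 2.0])
w = np.array([0.5, 0.3, 0.2])

rp = x @ w                     # portfolio return per day
q = np.quantile(rp, 0.05)      # 5% quantile of portfolio returns
tail = rp <= q                 # tail days

es_port = -rp[tail].mean()                    # portfolio expected shortfall
es_contrib = -(x[tail] * w).mean(axis=0)      # contribution of each asset

print(es_port, es_contrib, es_contrib.sum())
```

Because the portfolio return on each tail day is the weighted sum of the asset returns, the contributions add up exactly to the portfolio's historical ES.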

port_info = {
    "mv_ewma": (res_mv, "ewma"),
    "maxsharpe_frontier": (res_mx, "ledoitwolf"),
}

vol_contrib = {}
es_contrib = {}
overlap_rows = []

for pname, (res, cov_key) in port_info.items():
    # use the weights and state of the last rebalance date
    dt = res.weights.index[-1]
    st = cache[dt]
    tickers = st["tickers"]
    w = res.weights.loc[dt].reindex(tickers).fillna(0.0).to_numpy(float)
    w = w / w.sum()

    # volatility attribution: rc_i = w_i * (cov @ w)_i / sigma_p
    cov = st["cov_ann_map"][cov_key]
    port_vol = np.sqrt(float(w @ cov @ w))
    m = cov @ w
    rc = pd.Series(w * m / port_vol, index=tickers).sort_values(ascending=False)
    vol_contrib[pname] = rc

    # es attribution: average weighted loss of each asset on the worst 5% portfolio days
    x = st["window"][tickers].to_numpy(float)
    rp = x @ w
    q = np.quantile(rp, 0.05)
    mask = rp <= q
    esc = pd.Series(-(x[mask] * w).mean(axis=0), index=tickers).sort_values(ascending=False)
    es_contrib[pname] = esc

    ov = len(set(rc.head(10).index).intersection(set(esc.head(10).index)))
    overlap_rows.append({"portfolio": pname, "top10_overlap_count": ov})

overlap_tbl = pd.DataFrame(overlap_rows).set_index("portfolio")
display(overlap_tbl)

| portfolio | top10_overlap_count |
|---|---|
| mv_ewma | 9 |
| maxsharpe_frontier | 9 |

For both strategies, 9 of the top 10 contributors are the same under the volatility and ES attributions, although their order may differ.

fig, axes = plt.subplots(1, 2, figsize=(12, 3.5))

for ax, pname in zip(axes, port_info.keys()):

top = vol_contrib[pname].head(10).sort_values()

ax.barh(top.index, top.values)

ax.set_title(f"top 10 volatility contributors — {pname}")

ax.set_xlabel("component contribution (ann vol)")

plt.tight_layout()

plt.show()

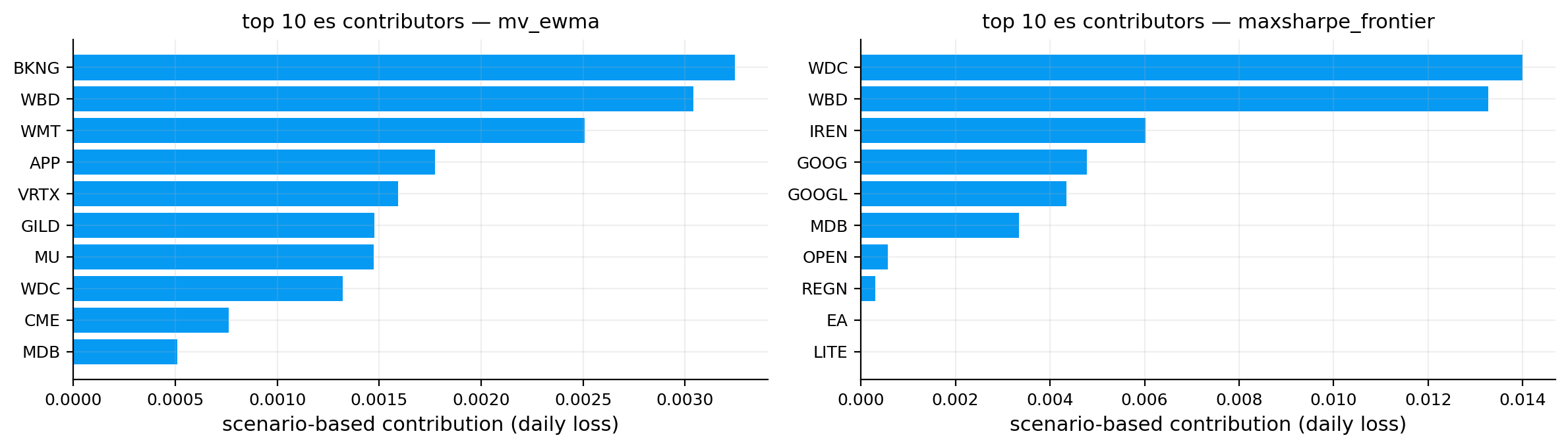

fig, axes = plt.subplots(1, 2, figsize=(12, 3.5))

for ax, pname in zip(axes, port_info.keys()):

top = es_contrib[pname].head(10).sort_values()

ax.barh(top.index, top.values)

ax.set_title(f"top 10 es contributors — {pname}")

ax.set_xlabel("scenario-based contribution (daily loss)")

plt.tight_layout()

plt.show()

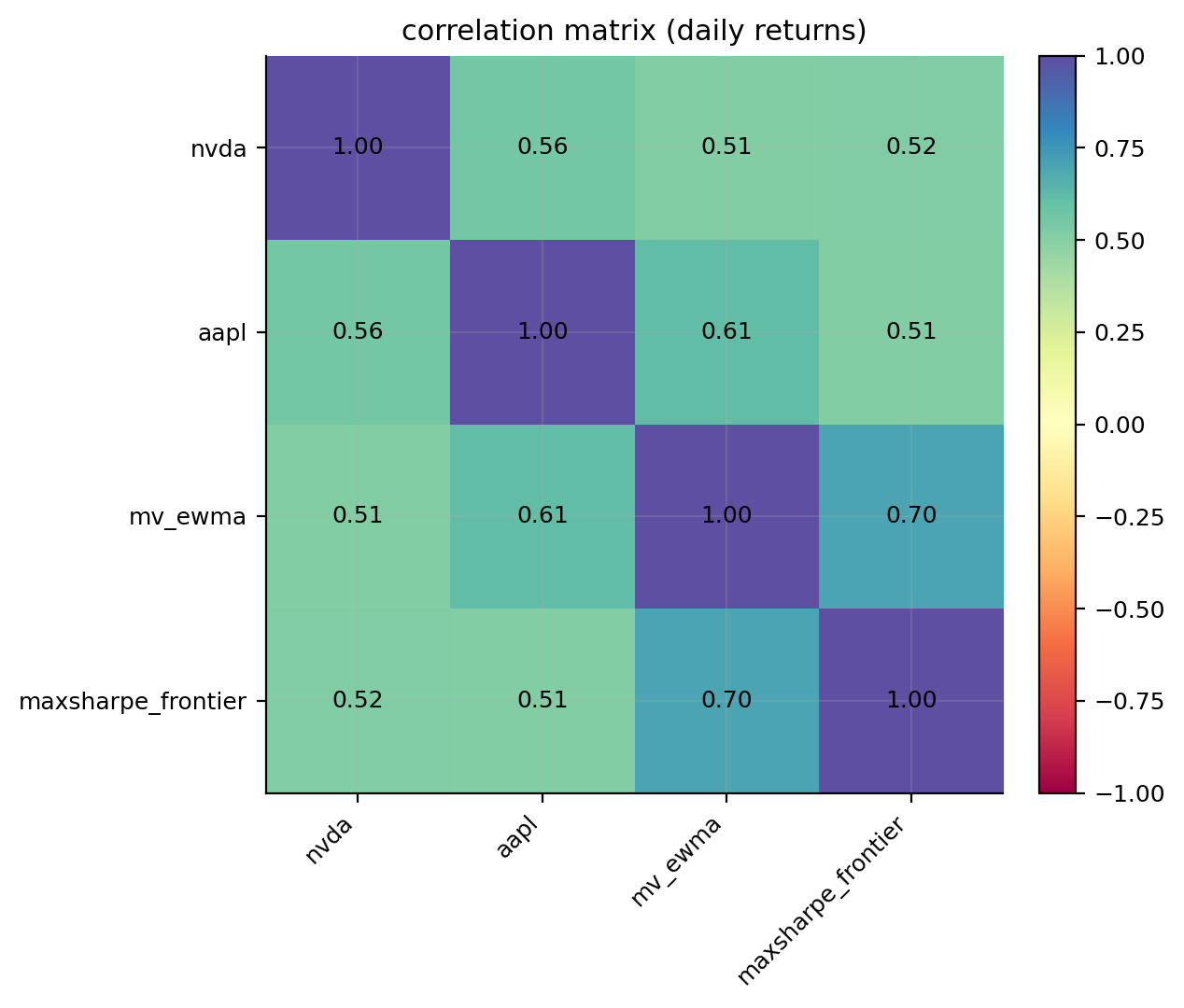

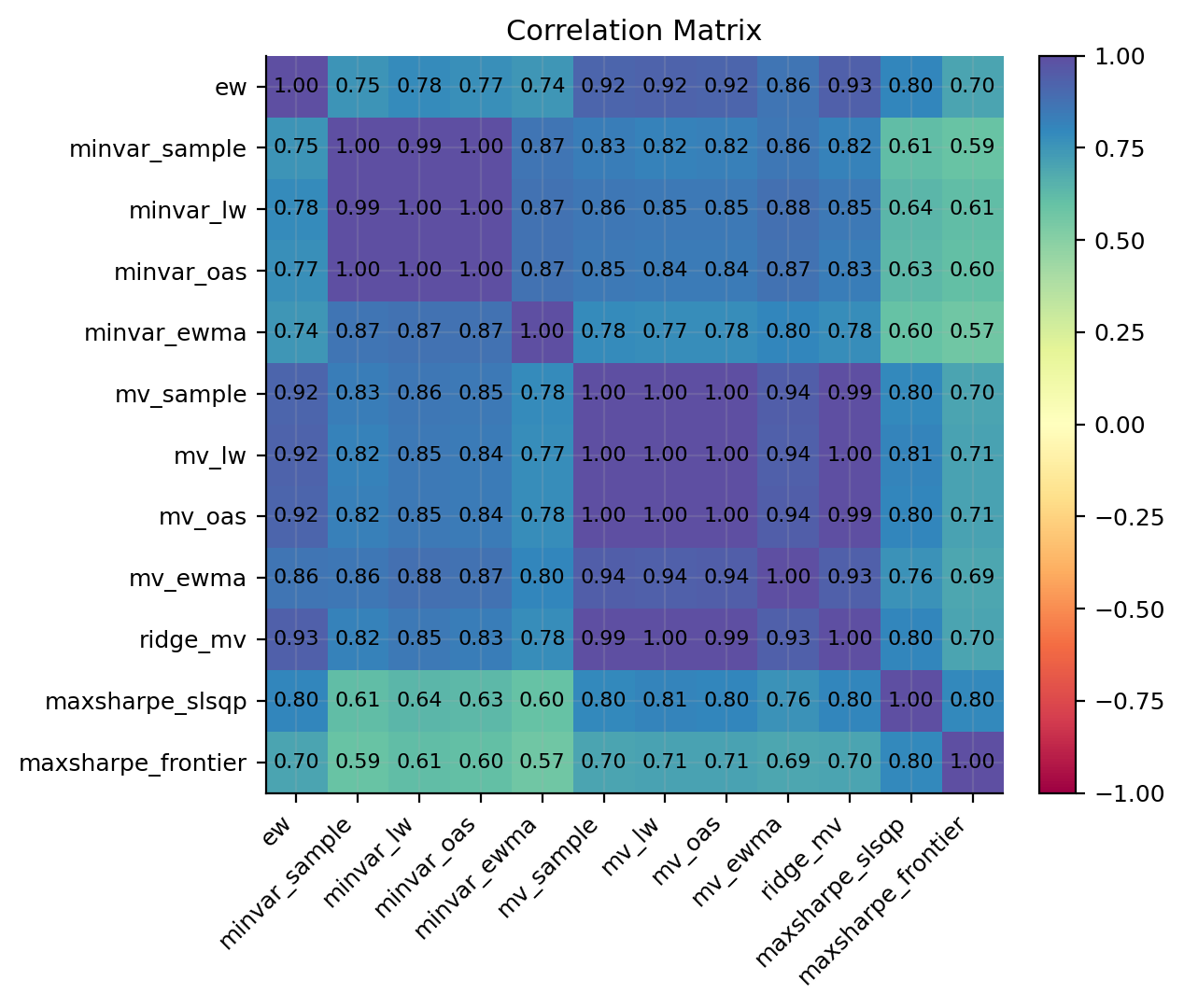

13) correlation and diversification (object-to-object)

correlation is a core ingredient of diversification. for two return series \(x_t\) and \(y_t\):

\[ \rho_{x,y} = \frac{\text{cov}(x,y)}{\sigma_x\sigma_y} \]

we compute the correlation matrix across objects and visualize it as a heatmap.

- \(\rho \approx 1\): objects move together (low diversification benefit)

- \(\rho \approx 0\): movements are mostly independent

- \(\rho < 0\): objects move in opposite directions, so one can hedge the other in some regimes (rare among stocks)
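To see why correlation drives the diversification benefit, the sketch below uses the standard two-asset variance formula \(\sigma_p^2 = w_1^2\sigma_1^2 + w_2^2\sigma_2^2 + 2 w_1 w_2 \rho\,\sigma_1\sigma_2\). The volatilities of 20% and 30% and the equal weights are hypothetical, and `two_asset_vol` is a helper written only for this example.

```python
import math

def two_asset_vol(w1, s1, s2, rho):
    """Volatility of a two-asset portfolio with weights w1 and 1 - w1."""
    w2 = 1.0 - w1
    var = (w1 * s1) ** 2 + (w2 * s2) ** 2 + 2.0 * w1 * w2 * rho * s1 * s2
    return math.sqrt(var)

# Equal weights, volatilities 20% and 30%, for several correlation levels.
for rho in (1.0, 0.5, 0.0, -0.5):
    print(rho, round(two_asset_vol(0.5, 0.20, 0.30, rho), 4))
```

At \(\rho = 1\) the portfolio volatility is just the weighted average of the two volatilities (no benefit), and it falls as the correlation decreases.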

corr = pd.DataFrame({k: pd.Series(v) for k, v in obj.items()}).dropna().corr()

fig, ax = plt.subplots(1, 1, figsize=(6.5, 5.5))
im = ax.imshow(corr.values, vmin=-1, vmax=1, cmap="Spectral")
ax.set_xticks(range(len(corr.columns)))
ax.set_yticks(range(len(corr.index)))
ax.set_xticklabels(corr.columns, rotation=45, ha="right")
ax.set_yticklabels(corr.index)
ax.set_title("correlation matrix (daily returns)")

# annotate every cell with its correlation value
for i in range(corr.shape[0]):
    for j in range(corr.shape[1]):
        ax.text(j, i, f"{corr.values[i, j]:.2f}", ha="center", va="center", fontsize=9)

fig.colorbar(im, ax=ax, fraction=0.046, pad=0.04)
plt.tight_layout()
plt.show()

Implementation with Quantfinlab

We can implement this whole project with our library, and there are two ways to do it.

In this notebook we show both implementations, on the US market and on the Hong Kong market.

We now compare all the portfolios from the last project on these risk measures.

1) Using manual functions for risk measures and plots (US data)

import warnings
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import quantfinlab.portfolio as pf
import quantfinlab.risk as rk
import quantfinlab.plots as pl

warnings.filterwarnings("ignore")

rf_annual = 0.04
rf_daily = (1.0 + rf_annual) ** (1.0 / 252.0) - 1.0

df = pd.read_parquet("../data/nasdaq_all_close_volume.parquet")
df["date"] = pd.to_datetime(df["Date"], errors="coerce")
df = df.dropna(subset=["date"]).sort_values("date")

# map each ticker to its "<ticker>__close" and "<ticker>__volume" columns
close_map, vol_map = {}, {}
for c in df.columns:
    c_str = str(c)
    if c_str.lower() == "date" or "__" not in c_str:
        continue
    t, f = c_str.rsplit("__", 1)
    fl = f.lower()
    if fl == "close":
        close_map[t] = c
    elif fl == "volume":
        vol_map[t] = c

tickers_all = sorted(set(close_map).intersection(vol_map))
if len(tickers_all) < 2:
    raise ValueError("Not enough ticker__close/ticker__volume pairs in NASDAQ parquet.")

close_prices = df[[close_map[t] for t in tickers_all]].copy()
volumes = df[[vol_map[t] for t in tickers_all]].copy()
close_prices.columns = tickers_all
volumes.columns = tickers_all
close_prices.index = pd.to_datetime(df["date"].values)
volumes.index = pd.to_datetime(df["date"].values)

stack = pf.build_all_portfolio_strategies(
    close_prices,
    volumes,
    start="2016-01-01",
    rf_annual=rf_annual,
    rf_daily=rf_daily,
)
results = dict(stack.results)
cache = dict(stack.cache)
cov_key_for_rc = dict(stack.cov_key_for_rc)

# keep only the dates shared by all strategies
common_idx = None
for res in results.values():
    idx_res = pd.DatetimeIndex(res.net_returns.index)
    common_idx = idx_res if common_idx is None else common_idx.intersection(idx_res)
if common_idx is None or len(common_idx) == 0:
    raise ValueError("No overlapping index across strategy returns.")

objects = {name: res.net_returns.reindex(common_idx).fillna(0.0) for name, res in results.items()}
obj_colors = pl.make_color_map(objects.keys(), pl.LAB_COLORS)

spy = pd.read_csv("../data/spy_yfinance.csv")
spy["date"] = pd.to_datetime(spy["Date"], errors="coerce")
spy = spy.dropna(subset=["date"]).sort_values("date").set_index("date")
if "Adj Close" in spy.columns:
    spy_px = pd.to_numeric(spy["Adj Close"], errors="coerce")
elif "Close" in spy.columns:
    spy_px = pd.to_numeric(spy["Close"], errors="coerce")
else:
    raise ValueError("spy_yfinance.csv missing close/adj close column")
market_ret = spy_px.pct_change(fill_method=None).reindex(common_idx).fillna(0.0)

portfolios = {
    name: {
        "backtest": results[name],
        "state_cache": cache,
        "cov_key": cov_key_for_rc[name],
    }
    for name in results.keys()
}

perf_tbl = rk.performance_table(objects, rf_daily=rf_daily, annualization=252)
shape_tbl = rk.tail_shape_table(objects)
dd_summary_tbl = rk.drawdown_summary_table(objects)
dd_episodes_tbl = rk.drawdown_episodes_table(objects, top_n=1)
var_es_tbl = rk.var_es_table(objects, alpha=0.05, methods=["hist", "cf", "fhs"])

var_bt_methods = ["hist", "cf", "fhs"]
var_bt_tbl = rk.var_backtest_table(objects, alpha=0.05, methods=var_bt_methods, lookback=252)

stress_windows = {
    "2018_q4": ("2018-10-01", "2018-12-31"),
    "2020_covid": ("2020-02-20", "2020-04-30"),
    "2022_inflation": ("2022-01-03", "2022-10-31"),
}
stress_tbl = rk.stress_table(objects, windows=stress_windows, worst_only=True)
stress_tbl_full = rk.stress_table(objects, windows=stress_windows, worst_only=False)

capm_tbl, capm_roll = rk.capm_table(objects, market_ret=market_ret, rf_daily=rf_daily, rolling=[126, 252])
corr = rk.corr_matrix(objects)
vol_rc_tbl, es_rc_tbl, overlap_tbl = rk.attribution_tables(portfolios, es_alpha=0.05, top_k=10)

display(perf_tbl.round(4))
display(shape_tbl.round(4))
display(dd_summary_tbl.round(4))
display(var_es_tbl.round(4))
display(var_bt_tbl.round(4))
display(capm_tbl.round(4))

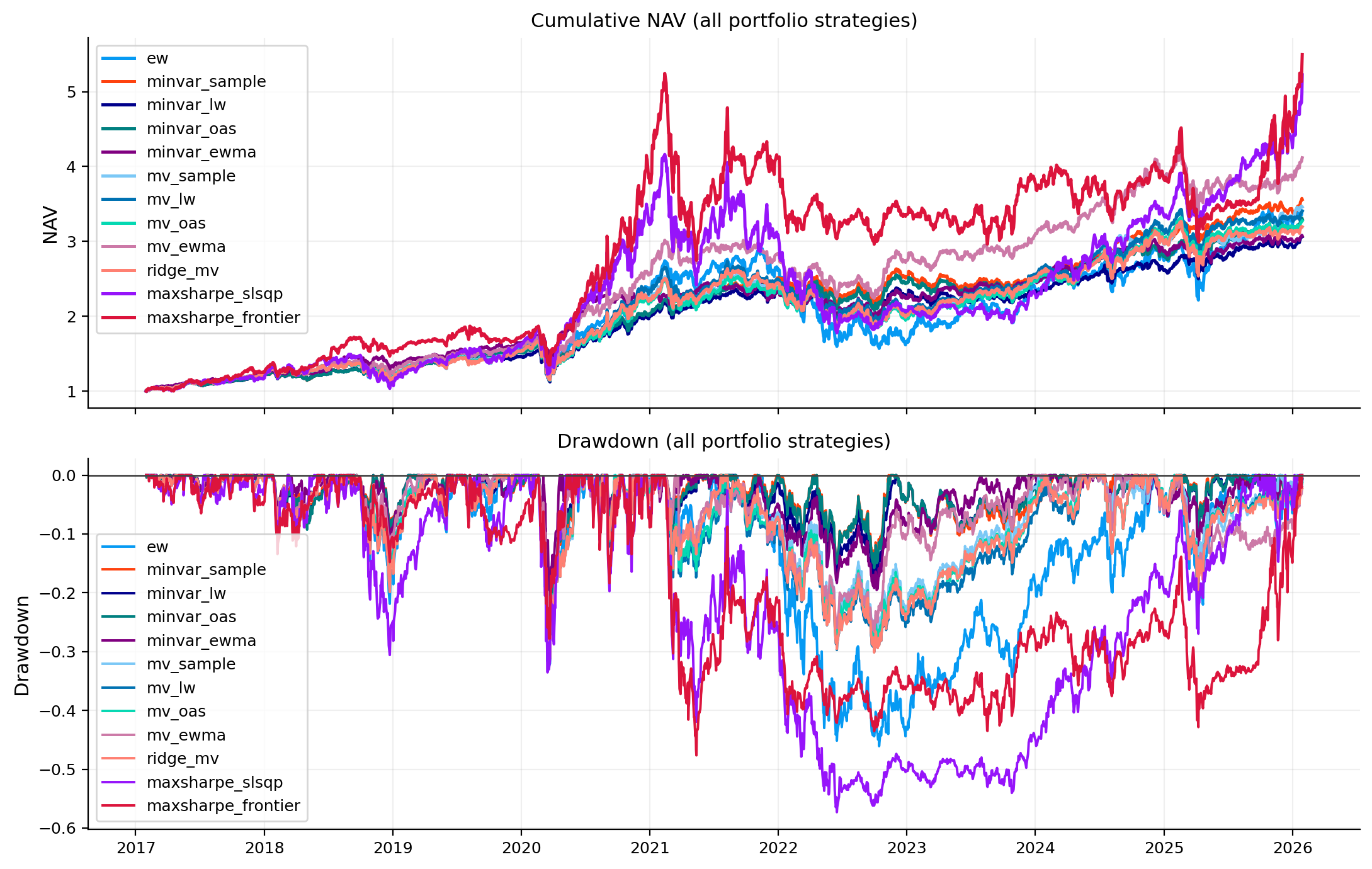

fig, ax = plt.subplots(2, 1, figsize=(11, 7), sharex=True)

pl.plot_nav_compare(ax[0], objects, colors=obj_colors, title="Cumulative NAV (all portfolio strategies)")

pl.plot_drawdown_compare_objects(ax[1], objects, colors=obj_colors, title="Drawdown (all portfolio strategies)")

plt.tight_layout()

plt.show()
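The NAV and drawdown curves plotted above can be reproduced from a return series in a few lines. The sketch below is an assumption about the underlying math, not the actual `rk`/`pl` implementation: NAV compounds from 1.0, drawdown is the distance from the running peak (negative, matching the sign of `max_dd` in the summary table), and the ulcer index is taken as the root mean square of the drawdown series.

```python
import numpy as np

def drawdown_stats(returns):
    """NAV path, drawdown path, max drawdown and ulcer index (hypothetical helper)."""
    r = np.asarray(returns, dtype=float)
    nav = np.cumprod(1.0 + r)
    # Running peak, including the starting NAV of 1.0 so a first-day loss counts.
    peak = np.maximum.accumulate(np.concatenate(([1.0], nav)))[1:]
    dd = nav / peak - 1.0  # <= 0 by construction
    return {
        "nav": nav,
        "drawdown": dd,
        "max_dd": float(dd.min()),
        "ulcer_index": float(np.sqrt(np.mean(dd ** 2))),
    }

stats = drawdown_stats([0.10, -0.20, 0.05, 0.30])
# Drawdown path is [0, -0.20, -0.16, 0], so max_dd is -0.20.
```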

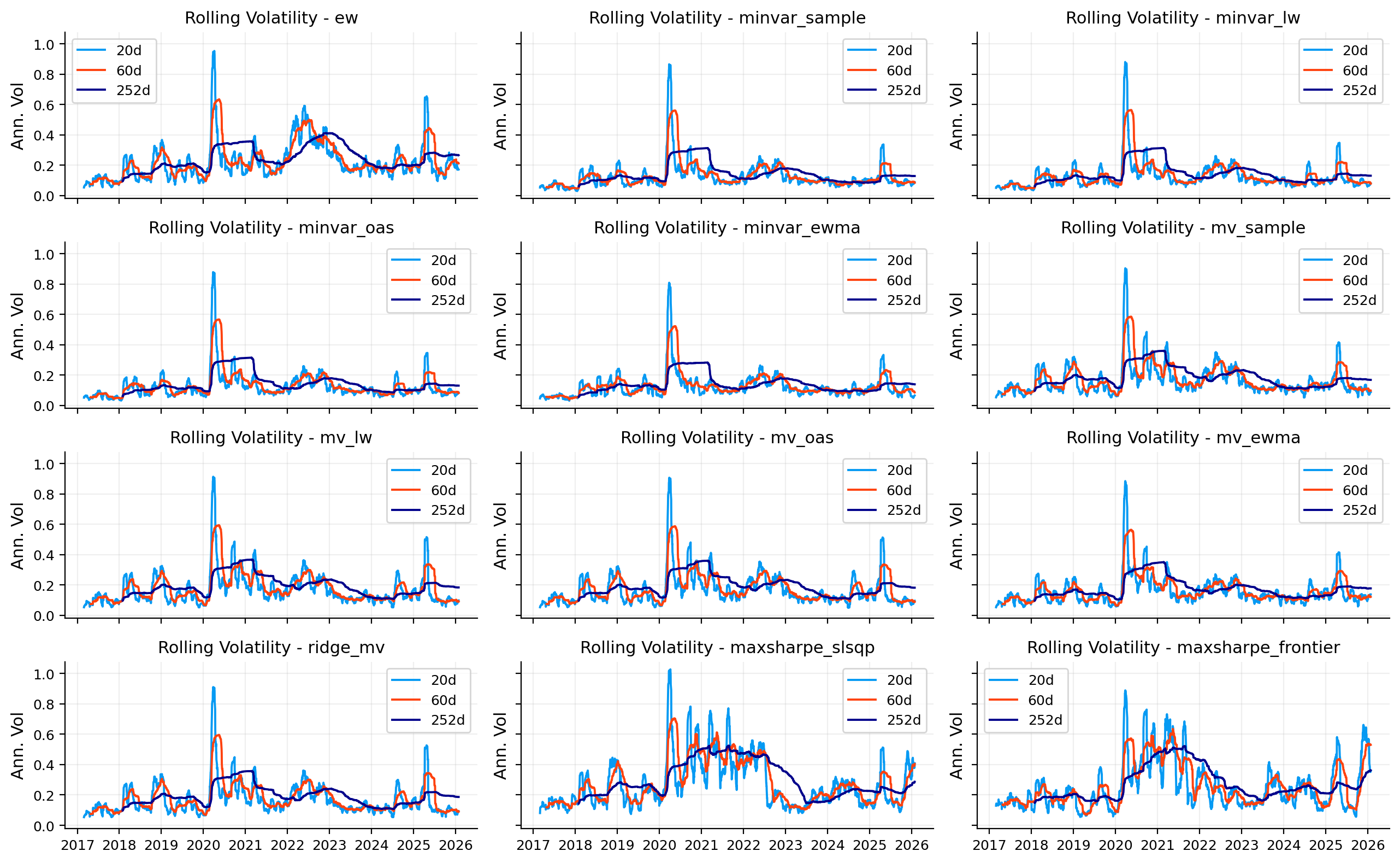

fig, axes = pl.auto_grid(len(objects), ncols=3, figsize=(13, 8), sharex=True, sharey=True)

for a, (name, r) in zip(axes, objects.items()):

    pl.plot_rolling_vol(a, r, windows=[20, 60, 252], name=name)

pl.turn_off_unused_axes(axes, used=len(objects))

plt.tight_layout()

plt.show()
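The rolling volatility panels above use 20, 60 and 252 day windows. A plausible version of the underlying calculation, assuming the plot shows the rolling sample standard deviation scaled by the square root of 252 (the helper name and the synthetic data are hypothetical), is:

```python
import numpy as np
import pandas as pd

def rolling_ann_vol(r, windows=(20, 60, 252), ann=252):
    """Annualized rolling volatility, one column per window length."""
    return pd.DataFrame(
        {f"vol_{w}d": r.rolling(w).std() * np.sqrt(ann) for w in windows}
    )

# Synthetic daily returns with 1% daily volatility (about 16% annualized).
rng = np.random.default_rng(0)
r = pd.Series(
    rng.normal(0.0, 0.01, 600),
    index=pd.bdate_range("2020-01-01", periods=600),
)
vol = rolling_ann_vol(r)
```

Short windows react quickly to volatility spikes but are noisy; the 252 day window is smoother and lags regime changes.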

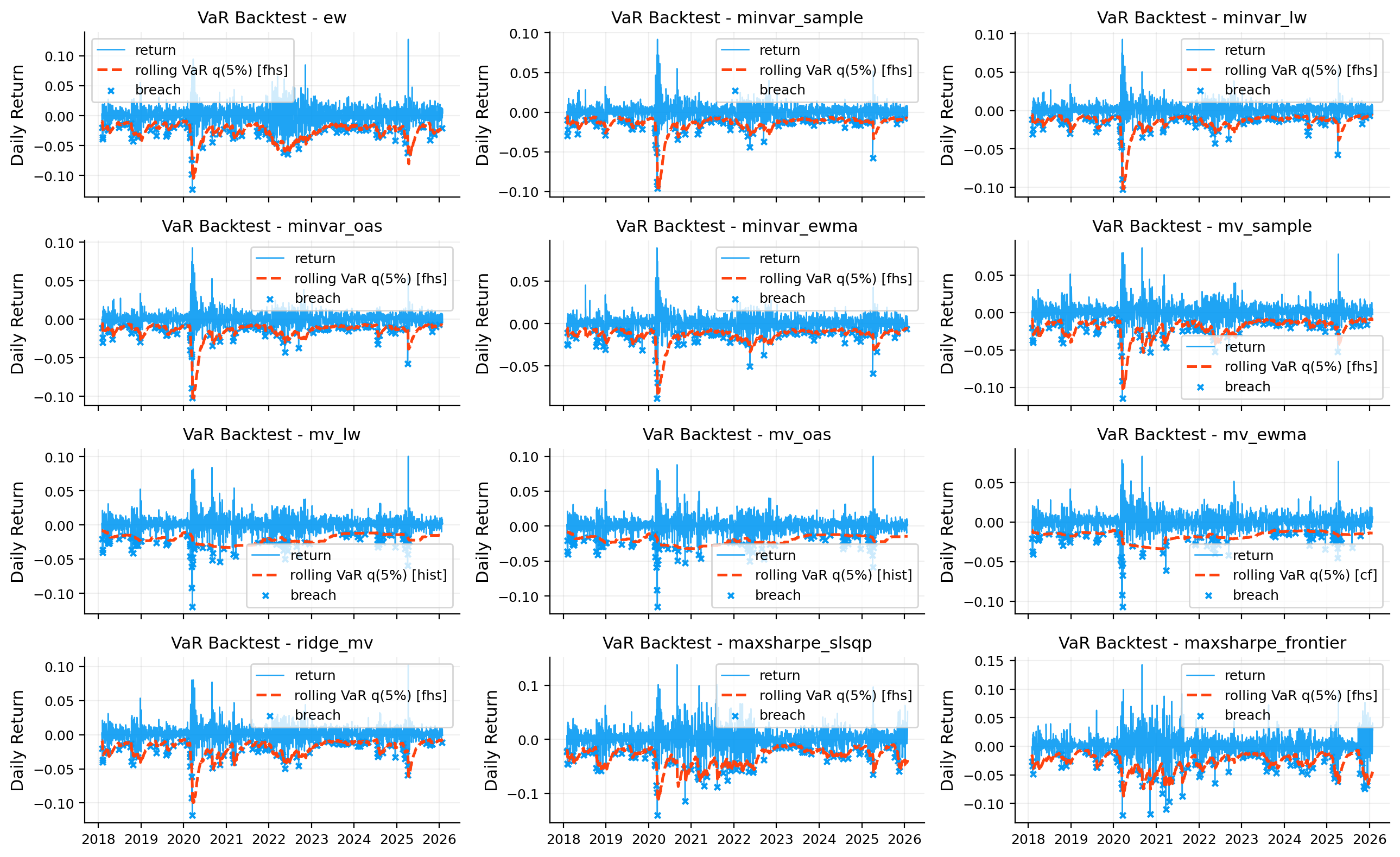

fig, axes = pl.auto_grid(len(objects), ncols=3, figsize=(13, 8), sharex=True, sharey=False)

for a, (name, r) in zip(axes, objects.items()):

    pl.plot_var_backtest(a, r, alpha=0.05, lookback=252, method="best", methods=var_bt_methods, name=name)

pl.turn_off_unused_axes(axes, used=len(objects))

plt.tight_layout()

plt.show()
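The `kupiec_p` column of the VaR backtest table tests whether the observed breach rate is compatible with the target 5%. A minimal sketch of the Kupiec proportion-of-failures test is given below, assuming the standard likelihood-ratio form; the function name is hypothetical, and the sample size of 2009 days is inferred from the reported breach counts and rates rather than stated in the notebook.

```python
import math

def kupiec_pof(breaches, n_obs, alpha=0.05):
    """Kupiec POF likelihood-ratio statistic and p-value (chi-square, 1 dof)."""
    x, n = breaches, n_obs
    pi_hat = x / n

    def loglik(p):
        # Binomial log-likelihood; terms with a zero count are skipped (0*log 0 = 0).
        ll = 0.0
        if x > 0:
            ll += x * math.log(p)
        if n - x > 0:
            ll += (n - x) * math.log(1.0 - p)
        return ll

    lr = max(-2.0 * (loglik(alpha) - loglik(pi_hat)), 0.0)
    # Survival function of chi-square with 1 dof; same as scipy's chi2.sf(lr, 1).
    p_value = math.erfc(math.sqrt(lr / 2.0))
    return lr, p_value

# 133 breaches (ew, cf method) over an assumed 2009 backtest days.
lr, p = kupiec_pof(133, 2009, alpha=0.05)
```

Under these assumptions the p-value comes out near 0.0015, in line with the value reported for `ew` / `cf`. A small p-value means the breach rate differs from 5% by more than sampling noise would explain.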

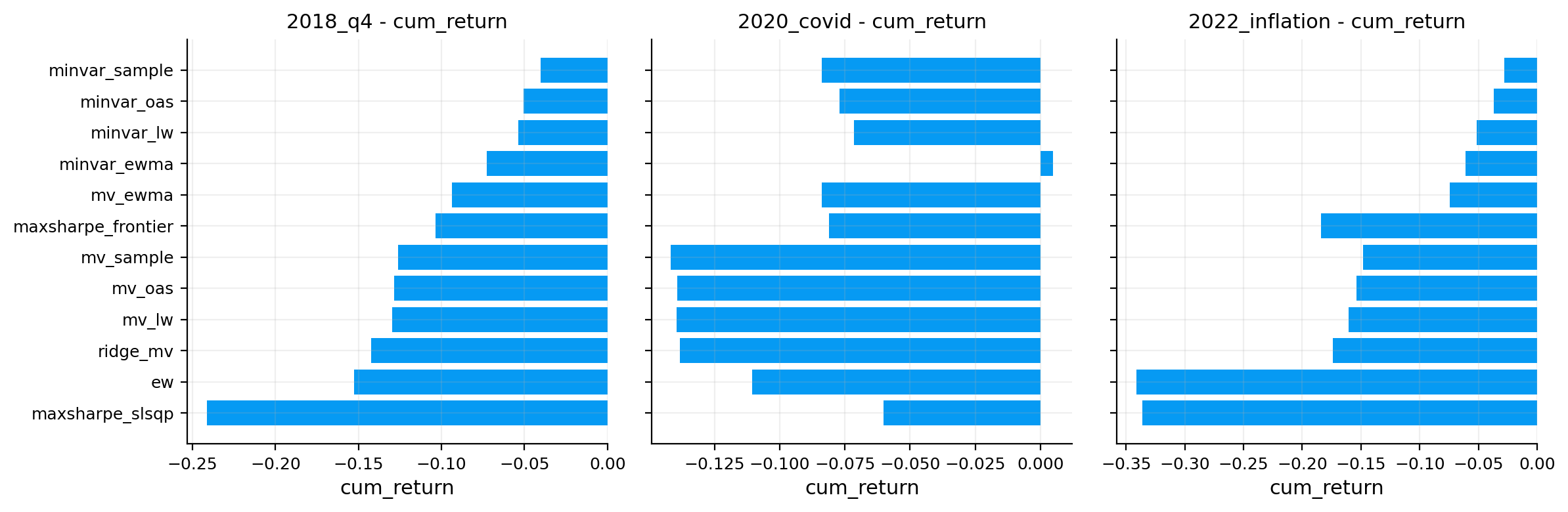

fig, axes = pl.auto_grid(stress_tbl_full.index.nunique(), ncols=3, figsize=(12, 4), sharey=True)

for a, w in zip(axes, stress_tbl_full.index.unique()):

    pl.plot_stress_bar(a, stress_tbl_full, window=w)

pl.turn_off_unused_axes(axes, used=stress_tbl_full.index.nunique())

plt.tight_layout()

plt.show()
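Each stress bar above summarizes how an object behaved inside one historical window. The exact statistic computed by `rk.stress_table` is not shown here, so the sketch below assumes the simplest candidate, the compounded return over the window; the helper name and the toy data are hypothetical.

```python
import pandas as pd

def window_return(r, start, end):
    """Compounded return of a daily return series over [start, end], inclusive."""
    w = r.loc[start:end]
    return float((1.0 + w).prod() - 1.0)

# Toy series: flat except for two losses inside the window and one gain outside it.
idx = pd.bdate_range("2020-02-03", "2020-05-29")
r = pd.Series(0.0, index=idx)
r.loc[pd.Timestamp("2020-03-09")] = -0.08
r.loc[pd.Timestamp("2020-03-12")] = -0.10
r.loc[pd.Timestamp("2020-05-15")] = 0.05  # outside the stress window

covid = window_return(r, "2020-02-20", "2020-04-30")
# 0.92 * 0.90 - 1 = -0.172
```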

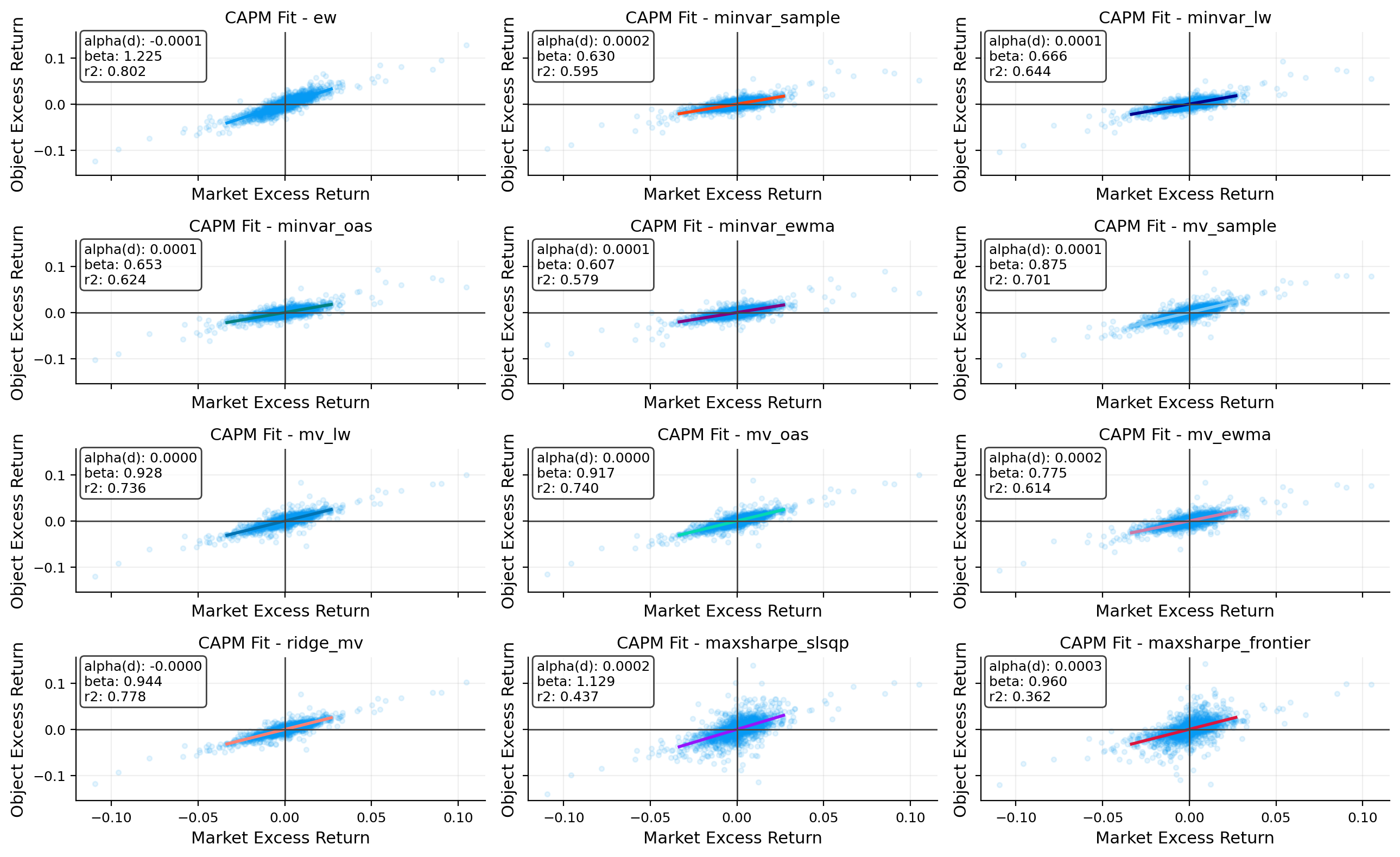

fig, axes = pl.auto_grid(len(objects), ncols=3, figsize=(13, 8), sharex=True, sharey=True)

for a, (name, r) in zip(axes, objects.items()):

    pl.plot_capm_scatter(a, r, market_ret, rf_daily=rf_daily, name=name, color=obj_colors[name])

pl.turn_off_unused_axes(axes, used=len(objects))

plt.tight_layout()

plt.show()
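The fitted line in each CAPM scatter above is a regression of excess object returns on excess market returns. A minimal closed-form sketch follows, assuming ordinary least squares with a single factor; `capm_fit` and the synthetic data are hypothetical and stand in for whatever `rk.capm_table` does internally.

```python
import numpy as np

def capm_fit(r, m, rf_daily=0.0, ann=252):
    """OLS of (r - rf) on (m - rf): annualized alpha, beta and R^2."""
    y = np.asarray(r, dtype=float) - rf_daily
    x = np.asarray(m, dtype=float) - rf_daily
    beta = np.cov(x, y, ddof=1)[0, 1] / np.var(x, ddof=1)
    alpha_daily = y.mean() - beta * x.mean()
    resid = y - (alpha_daily + beta * x)
    r2 = 1.0 - resid.var() / y.var()
    return {"alpha_ann": alpha_daily * ann, "beta": beta, "r2": r2}

# Synthetic data with a true beta of 0.8.
rng = np.random.default_rng(42)
m = rng.normal(0.0004, 0.01, 2000)
r = 0.0001 + 0.8 * m + rng.normal(0.0, 0.005, 2000)

rf_daily = (1 + 0.04) ** (1 / 252) - 1  # same convention as the notebook setup
fit = capm_fit(r, m, rf_daily=rf_daily)
```

Beta is the slope (market sensitivity), alpha is the intercept (return not explained by the market), and R^2 is the share of variance the market factor explains.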

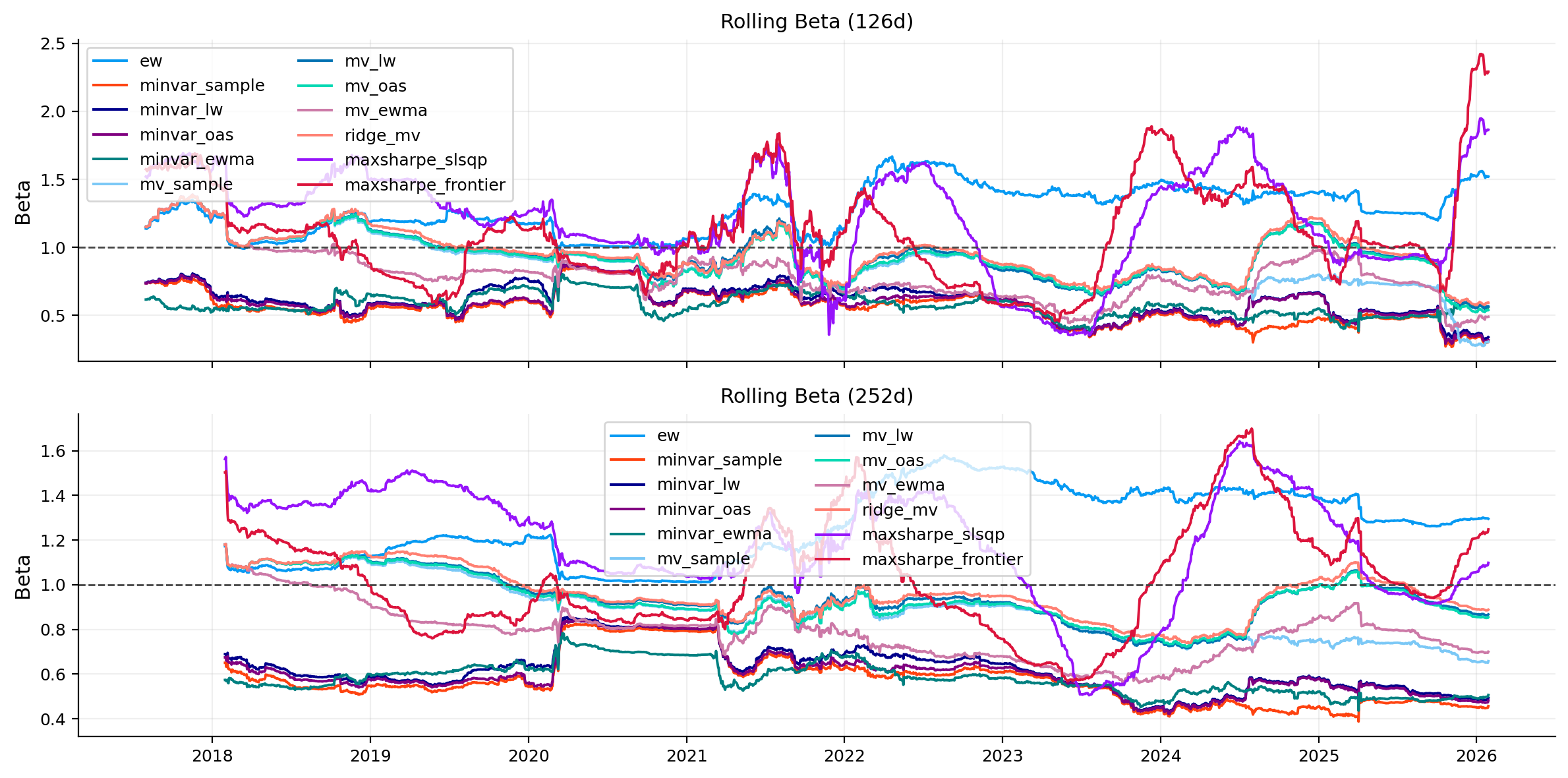

fig, ax = plt.subplots(2, 1, figsize=(12, 6), sharex=True)

pl.plot_rolling_beta_compare(ax[0], capm_roll, window=126)

pl.plot_rolling_beta_compare(ax[1], capm_roll, window=252)

plt.tight_layout()

plt.show()
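The rolling betas above use 126 and 252 day windows. One common way to obtain such a series, assumed here rather than taken from `rk`, is the ratio of rolling covariance to rolling market variance. With a constant daily risk-free rate, subtracting it from both series leaves this ratio unchanged, so raw returns are used in the sketch.

```python
import numpy as np
import pandas as pd

def rolling_beta(r, m, window=126):
    """Rolling beta of r on m as rolling cov(r, m) / rolling var(m)."""
    return r.rolling(window).cov(m) / m.rolling(window).var()

# Noise-free toy example: r is exactly 1.5 times the market.
rng = np.random.default_rng(1)
m = pd.Series(rng.normal(0.0, 0.01, 400))
r = 1.5 * m
beta = rolling_beta(r, m, window=126)
```

The first `window - 1` values are NaN because the window is not yet full.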

fig, ax = plt.subplots(1, 1, figsize=(8, 6))

pl.plot_corr_heatmap(ax, corr)

plt.tight_layout()

plt.show()
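The next cell plots the top volatility and ES risk contributions per portfolio. For the volatility case, the usual Euler decomposition can be sketched as follows; this assumes the standard formula and uses hypothetical weights and covariances, not the notebook's cached covariance states.

```python
import numpy as np

def vol_risk_contributions(w, cov):
    """Euler volatility contributions: rc_i = w_i * (cov @ w)_i / sigma_p."""
    w = np.asarray(w, dtype=float)
    cov = np.asarray(cov, dtype=float)
    port_vol = np.sqrt(w @ cov @ w)
    marginal = cov @ w / port_vol  # d sigma_p / d w_i
    rc = w * marginal
    return rc, rc / port_vol  # absolute contributions and shares

# Hypothetical three-asset portfolio.
w = np.array([0.5, 0.3, 0.2])
vols = np.array([0.20, 0.10, 0.30])
toy_corr = np.array([[1.0, 0.3, 0.1], [0.3, 1.0, 0.2], [0.1, 0.2, 1.0]])
cov = np.outer(vols, vols) * toy_corr

rc, share = vol_risk_contributions(w, cov)
port_vol = float(np.sqrt(w @ cov @ w))
```

The contributions add up to the portfolio volatility, so the shares sum to one. That additivity is what makes a "top contributors" bar chart meaningful.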

names = list(portfolios.keys())

fig, axes = plt.subplots(2, len(names), figsize=(3.8 * len(names), 7))

if len(names) == 1:

    axes = axes.reshape(2, 1)

for j, pname in enumerate(names):

    pl.plot_top_contrib(axes[0, j], vol_rc_tbl.loc[pname], title=f"{pname} - top vol rc", k=10)

    pl.plot_top_contrib(axes[1, j], es_rc_tbl.loc[pname], title=f"{pname} - top es rc", k=10)

plt.tight_layout()

plt.show()

| object | ann_return | ann_vol | sharpe | sortino |
|---|---|---|---|---|
| ew | 0.1464 | 0.2530 | 0.5119 | 0.7195 |
| maxsharpe_frontier | 0.2092 | 0.2949 | 0.6590 | 0.9431 |
| maxsharpe_slsqp | 0.2024 | 0.3161 | 0.6175 | 0.8760 |
| minvar_ewma | 0.1332 | 0.1477 | 0.6552 | 0.9331 |
| minvar_lw | 0.1329 | 0.1535 | 0.6344 | 0.9059 |
| minvar_oas | 0.1423 | 0.1530 | 0.6903 | 0.9910 |
| minvar_sample | 0.1520 | 0.1512 | 0.7524 | 1.0898 |
| mv_ewma | 0.1708 | 0.1830 | 0.7393 | 1.0615 |
| mv_lw | 0.1462 | 0.2001 | 0.5864 | 0.8235 |
| mv_oas | 0.1413 | 0.1971 | 0.5703 | 0.8035 |
| mv_sample | 0.1484 | 0.1935 | 0.6096 | 0.8608 |
| ridge_mv | 0.1382 | 0.1980 | 0.5553 | 0.7769 |
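The `sharpe` and `sortino` columns above differ only in the risk measure used in the denominator. The sketch below assumes arithmetic annualization of daily excess returns and a downside deviation computed over all observations with a target of zero excess return; the function name is hypothetical, and `rk.performance_table` may use different conventions.

```python
import numpy as np

def sharpe_sortino(r, rf_daily=0.0, ann=252):
    """Annualized Sharpe and Sortino ratios from daily returns."""
    ex = np.asarray(r, dtype=float) - rf_daily
    sharpe = ex.mean() / ex.std(ddof=1) * np.sqrt(ann)
    # Downside deviation: root mean square of negative excess returns only.
    downside = np.sqrt(np.mean(np.minimum(ex, 0.0) ** 2))
    sortino = ex.mean() / downside * np.sqrt(ann)
    return sharpe, sortino

sharpe, sortino = sharpe_sortino([0.01, -0.01, 0.02, -0.02, 0.03])
```

For a roughly symmetric return distribution this downside deviation is about the volatility divided by the square root of 2, so Sortino comes out near 1.41 times Sharpe. The ratios in the table are close to that.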

| object | skew | excess_kurtosis | tail_ratio_95_05 | worst_1d | worst_5d_avg | worst_10d_avg |
|---|---|---|---|---|---|---|
| ew | -0.1442 | 6.5229 | 0.8626 | -0.1239 | -0.0852 | -0.0729 |
| maxsharpe_frontier | -0.1042 | 6.9172 | 1.0044 | -0.1203 | -0.1067 | -0.0904 |
| maxsharpe_slsqp | -0.1347 | 5.6136 | 0.9442 | -0.1403 | -0.1056 | -0.0903 |
| minvar_ewma | -0.2237 | 15.7958 | 1.0361 | -0.0879 | -0.0686 | -0.0549 |
| minvar_lw | -0.2048 | 20.0543 | 1.0072 | -0.1031 | -0.0717 | -0.0569 |
| minvar_oas | -0.1550 | 20.2887 | 1.0248 | -0.1021 | -0.0717 | -0.0568 |
| minvar_sample | -0.0546 | 19.5492 | 1.0468 | -0.0962 | -0.0708 | -0.0561 |
| mv_ewma | -0.1893 | 11.7377 | 1.0380 | -0.1067 | -0.0762 | -0.0613 |
| mv_lw | -0.2516 | 11.2377 | 0.9091 | -0.1194 | -0.0776 | -0.0639 |
| mv_oas | -0.1621 | 11.4696 | 0.9020 | -0.1151 | -0.0757 | -0.0629 |
| mv_sample | -0.2380 | 10.9302 | 0.9371 | -0.1144 | -0.0742 | -0.0625 |
| ridge_mv | -0.2658 | 11.2937 | 0.8839 | -0.1177 | -0.0776 | -0.0626 |
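The shape statistics above can be approximated with plain NumPy. The definitions in this sketch are assumptions about what `rk.tail_shape_table` reports: moment-based skew and excess kurtosis, a tail ratio of |q95| over |q05|, and `worst_5d_avg` read as the mean of the five worst daily returns.

```python
import numpy as np

def tail_shape(r):
    """Distribution-shape summary of a daily return series (hypothetical helper)."""
    r = np.asarray(r, dtype=float)
    z = (r - r.mean()) / r.std(ddof=0)
    q05, q95 = np.quantile(r, [0.05, 0.95])
    return {
        "skew": float(np.mean(z ** 3)),
        "excess_kurtosis": float(np.mean(z ** 4) - 3.0),
        "tail_ratio_95_05": float(abs(q95) / abs(q05)),
        "worst_1d": float(r.min()),
        "worst_5d_avg": float(np.sort(r)[:5].mean()),
    }

# Symmetric toy sample: evenly spaced returns between -2% and +2%.
shape = tail_shape(np.linspace(-0.02, 0.02, 101))
```

A tail ratio below 1 means the left 5% tail is larger in magnitude than the right one. Large positive excess kurtosis, as in the min-variance rows, signals far more extreme days than a normal distribution would produce.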

| object | max_dd | longest_dd_days | avg_recovery_days | ulcer_index |
|---|---|---|---|---|
| ew | -0.4608 | 771 | 18.1759 | 0.1689 |
| maxsharpe_frontier | -0.4764 | 1238 | 30.5072 | 0.2400 |
| maxsharpe_slsqp | -0.5730 | 1153 | 28.5616 | 0.2750 |
| minvar_ewma | -0.2339 | 613 | 15.0547 | 0.0518 |
| minvar_lw | -0.2791 | 312 | 14.6493 | 0.0495 |
| minvar_oas | -0.2787 | 288 | 13.5208 | 0.0456 |
| minvar_sample | -0.2797 | 304 | 12.0562 | 0.0459 |
| mv_ewma | -0.3087 | 701 | 15.1034 | 0.0822 |
| mv_lw | -0.3128 | 695 | 16.5981 | 0.1080 |
| mv_oas | -0.3063 | 620 | 16.5981 | 0.0994 |
| mv_sample | -0.3098 | 609 | 17.9910 | 0.0937 |
| ridge_mv | -0.3116 | 621 | 16.8019 | 0.1024 |

| object | hist_var5 | hist_es5 | cf_var5 | cf_es5 | fhs_var5 | fhs_es5 |
|---|---|---|---|---|---|---|
| ew | 0.0267 | 0.0377 | 0.0241 | 0.0490 | 0.0197 | 0.0281 |
| maxsharpe_frontier | 0.0281 | 0.0446 | 0.0276 | 0.0577 | 0.0453 | 0.0679 |
| maxsharpe_slsqp | 0.0317 | 0.0480 | 0.0303 | 0.0583 | 0.0389 | 0.0537 |
| minvar_ewma | 0.0126 | 0.0213 | 0.0124 | 0.0417 | 0.0068 | 0.0109 |
| minvar_lw | 0.0129 | 0.0218 | 0.0120 | 0.0494 | 0.0083 | 0.0123 |
| minvar_oas | 0.0127 | 0.0215 | 0.0117 | 0.0493 | 0.0088 | 0.0128 |
| minvar_sample | 0.0124 | 0.0210 | 0.0114 | 0.0471 | 0.0088 | 0.0132 |
| mv_ewma | 0.0161 | 0.0262 | 0.0162 | 0.0444 | 0.0133 | 0.0200 |
| mv_lw | 0.0192 | 0.0306 | 0.0181 | 0.0481 | 0.0102 | 0.0147 |
| mv_oas | 0.0191 | 0.0300 | 0.0175 | 0.0474 | 0.0099 | 0.0144 |
| mv_sample | 0.0182 | 0.0289 | 0.0175 | 0.0459 | 0.0099 | 0.0143 |
| ridge_mv | 0.0190 | 0.0305 | 0.0180 | 0.0478 | 0.0097 | 0.0142 |
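The `hist_*` and `cf_*` columns above follow the notebook's sign convention: VaR and ES are reported as positive loss magnitudes. The sketch below assumes `hist` means the empirical quantile and `cf` means a Cornish-Fisher adjusted normal quantile; both function names are hypothetical, and the filtered historical simulation (`fhs`) variant is not covered.

```python
import numpy as np
from statistics import NormalDist

def hist_var_es(r, alpha=0.05):
    """Historical VaR and ES as positive losses: VaR = -q_alpha(r)."""
    r = np.asarray(r, dtype=float)
    q = np.quantile(r, alpha)
    var = -q
    es = -r[r <= q].mean()  # average loss beyond the VaR threshold
    return float(var), float(es)

def cornish_fisher_var(r, alpha=0.05):
    """VaR from a normal quantile adjusted for sample skew and excess kurtosis."""
    r = np.asarray(r, dtype=float)
    mu, sigma = r.mean(), r.std(ddof=1)
    z0 = (r - mu) / r.std(ddof=0)
    s = np.mean(z0 ** 3)        # skewness
    k = np.mean(z0 ** 4) - 3.0  # excess kurtosis
    z = NormalDist().inv_cdf(alpha)
    z_cf = (
        z
        + (z ** 2 - 1) * s / 6
        + (z ** 3 - 3 * z) * k / 24
        - (2 * z ** 3 - 5 * z) * s ** 2 / 36
    )
    return float(-(mu + sigma * z_cf))

# Gaussian toy sample: both estimates should sit near 1.645 * sigma.
rng = np.random.default_rng(7)
r = rng.normal(0.0, 0.01, 100_000)
var5, es5 = hist_var_es(r)
cf_var5 = cornish_fisher_var(r)
```

On Gaussian data the two VaR estimates nearly coincide. On heavy-tailed returns the skew and kurtosis terms pull the Cornish-Fisher quantile away from the normal one, which is one reason the `cf` and `hist` columns can disagree.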

| object | method | breach_count | breach_rate | coverage_error | abs_coverage_error | longest_breach_streak | avg_gap_days | kupiec_p | christoffersen_p | quantile_loss | accuracy_rank | accuracy_score | is_best |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ew | cf | 133 | 0.0662 | 0.0162 | 0.0162 | 3 | 15.1515 | 0.0015 | 0.0030 | 0.0020 | 3.0 | 0.0769 | False |
| | fhs | 118 | 0.0587 | 0.0087 | 0.0087 | 3 | 17.0940 | 0.0801 | 0.0268 | 0.0018 | 1.0 | 0.2000 | True |
| | hist | 128 | 0.0637 | 0.0137 | 0.0137 | 3 | 15.4409 | 0.0067 | 0.0033 | 0.0020 | 2.0 | 0.1111 | False |
| maxsharpe_frontier | cf | 120 | 0.0597 | 0.0097 | 0.0097 | 3 | 16.6303 | 0.0519 | 0.0021 | 0.0023 | 3.0 | 0.0909 | False |
| | fhs | 106 | 0.0528 | 0.0028 | 0.0028 | 2 | 18.8190 | 0.5732 | 0.0013 | 0.0021 | 1.0 | 0.1667 | True |
| | hist | 116 | 0.0577 | 0.0077 | 0.0077 | 3 | 17.2087 | 0.1199 | 0.0003 | 0.0023 | 2.0 | 0.1000 | False |
| maxsharpe_slsqp | cf | 138 | 0.0687 | 0.0187 | 0.0187 | 3 | 14.5547 | 0.0003 | 0.0029 | 0.0024 | 2.0 | 0.0909 | False |
| | fhs | 113 | 0.0562 | 0.0062 | 0.0062 | 3 | 17.5179 | 0.2074 | 0.0048 | 0.0023 | 1.0 | 0.2000 | True |
| | hist | 129 | 0.0642 | 0.0142 | 0.0142 | 3 | 15.5781 | 0.0050 | 0.0014 | 0.0024 | 2.0 | 0.0909 | False |
| minvar_ewma | cf | 109 | 0.0543 | 0.0043 | 0.0043 | 3 | 17.8889 | 0.3877 | 0.0002 | 0.0012 | 2.0 | 0.1250 | False |
| | fhs | 114 | 0.0567 | 0.0067 | 0.0067 | 3 | 17.6991 | 0.1741 | 0.0006 | 0.0011 | 1.0 | 0.1429 | True |
| | hist | 118 | 0.0587 | 0.0087 | 0.0087 | 3 | 16.5128 | 0.0801 | 0.0000 | 0.0012 | 3.0 | 0.0833 | False |
| minvar_lw | cf | 100 | 0.0498 | -0.0002 | 0.0002 | 2 | 19.6465 | 0.9632 | 0.0043 | 0.0012 | 2.0 | 0.1250 | False |
| | fhs | 111 | 0.0553 | 0.0053 | 0.0053 | 2 | 18.0727 | 0.2879 | 0.0583 | 0.0011 | 1.0 | 0.1429 | True |
| | hist | 114 | 0.0567 | 0.0067 | 0.0067 | 4 | 17.2124 | 0.1741 | 0.0000 | 0.0012 | 3.0 | 0.0833 | False |
| minvar_oas | cf | 101 | 0.0503 | 0.0003 | 0.0003 | 2 | 19.4500 | 0.9551 | 0.0016 | 0.0012 | 2.0 | 0.1250 | False |
| | fhs | 112 | 0.0557 | 0.0057 | 0.0057 | 2 | 17.9099 | 0.2453 | 0.0282 | 0.0011 | 1.0 | 0.1429 | True |
| | hist | 116 | 0.0577 | 0.0077 | 0.0077 | 3 | 16.9130 | 0.1199 | 0.0001 | 0.0012 | 3.0 | 0.0833 | False |
| minvar_sample | cf | 104 | 0.0518 | 0.0018 | 0.0018 | 3 | 18.8835 | 0.7178 | 0.0002 | 0.0011 | 2.0 | 0.1250 | False |
| | fhs | 108 | 0.0538 | 0.0038 | 0.0038 | 2 | 18.5794 | 0.4449 | 0.0159 | 0.0011 | 1.0 | 0.1429 | True |
| | hist | 111 | 0.0553 | 0.0053 | 0.0053 | 4 | 17.6818 | 0.2879 | 0.0001 | 0.0011 | 3.0 | 0.0833 | False |
| mv_ewma | cf | 107 | 0.0533 | 0.0033 | 0.0033 | 2 | 18.2642 | 0.5068 | 0.0001 | 0.0014 | 1.0 | 0.1429 | True |
| | fhs | 110 | 0.0548 | 0.0048 | 0.0048 | 2 | 17.9817 | 0.3353 | 0.0028 | 0.0013 | 1.0 | 0.1429 | False |
| | hist | 112 | 0.0557 | 0.0057 | 0.0057 | 3 | 16.9820 | 0.2453 | 0.0000 | 0.0014 | 3.0 | 0.0769 | False |
| mv_lw | cf | 115 | 0.0572 | 0.0072 | 0.0072 | 3 | 17.5439 | 0.1450 | 0.0067 | 0.0016 | 3.0 | 0.1000 | False |