```python
import re
import warnings
from pathlib import Path

import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
from IPython.display import display

import quantfinlab.fixed_income as fi
import quantfinlab.plots as pl

warnings.filterwarnings("ignore")

path = Path("../data/japan_yield_curve_all.csv")

# If the file starts with a title row beginning with "Interest Rate", skip it so the
# following row is read as the header.
first_line = path.read_text(encoding="utf-8", errors="ignore").splitlines()[0].strip().lower()
if first_line.startswith("interest rate"):
    raw = pd.read_csv(path, skiprows=1, na_values=["-"], keep_default_na=True)
else:
    raw = pd.read_csv(path, na_values=["-"], keep_default_na=True)

raw = raw.rename(columns={c: str(c).strip() for c in raw.columns})


def normalize_tenor_name(c):
    """Map labels such as '3 Months' or '10 YR' to the compact form '3M' / '10Y'."""
    s = str(c).strip().upper().replace(" ", "")
    s = s.replace("MONTHS", "M").replace("MONTH", "M").replace("MOS", "M").replace("MO", "M")
    s = s.replace("YEARS", "Y").replace("YEAR", "Y").replace("YRS", "Y").replace("YR", "Y")
    return s


# Use the column named "date" (case-insensitive) if present, otherwise the first column.
lower_cols = [c.lower() for c in raw.columns]
date_col = raw.columns[lower_cols.index("date")] if "date" in lower_cols else raw.columns[0]
raw = raw.rename(columns={date_col: "date"})
raw["date"] = pd.to_datetime(raw["date"], errors="coerce")
raw = raw.dropna(subset=["date"]).sort_values("date").set_index("date")

# Keep only tenor columns (e.g. "3M", "10Y"), ordered by maturity.
raw = raw.rename(columns={c: normalize_tenor_name(c) for c in raw.columns})
tenor_cols = [c for c in raw.columns if re.fullmatch(r"\d+(M|Y)", str(c))]
tenor_cols = sorted(tenor_cols, key=fi.tenor_to_years)

par = raw[tenor_cols].apply(pd.to_numeric, errors="coerce").dropna(how="all").sort_index()

# Heuristic: a median above 1.0 is taken to mean yields are quoted in percent, so convert
# to decimals. This can misfire on a low-rate sample quoted in percent whose median is
# below 1%, so check the units of the source file.
if np.nanmedian(par.to_numpy(dtype=float)) > 1.0:
    par = par / 100.0

methods = ["loglinear", "pchip", "nss", "qp"]
holdouts = ["2Y", "7Y", "20Y", "30Y"]
```
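Tenor columns are ordered with `fi.tenor_to_years`, whose implementation is not shown. A minimal stand-in, assuming labels are already in the `<number>M` / `<number>Y` form produced by `normalize_tenor_name`, might look like this (`tenor_to_years_sketch` is a hypothetical name, not part of quantfinlab):

```python
import re


def tenor_to_years_sketch(label: str) -> float:
    """Convert a normalized tenor label such as '3M' or '10Y' to years (illustrative only)."""
    m = re.fullmatch(r"(\d+)(M|Y)", label.strip().upper())
    if m is None:
        raise ValueError(f"unrecognized tenor label: {label!r}")
    n, unit = int(m.group(1)), m.group(2)
    return n / 12.0 if unit == "M" else float(n)


# A plain string sort would give ['10Y', '1M', '1Y', '2Y', '6M'];
# sorting on the numeric value puts months before years.
tenors = sorted(["10Y", "1Y", "6M", "2Y", "1M"], key=tenor_to_years_sketch)
```

The numeric key is what keeps `tenor_cols` in maturity order, which every later step relies on.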

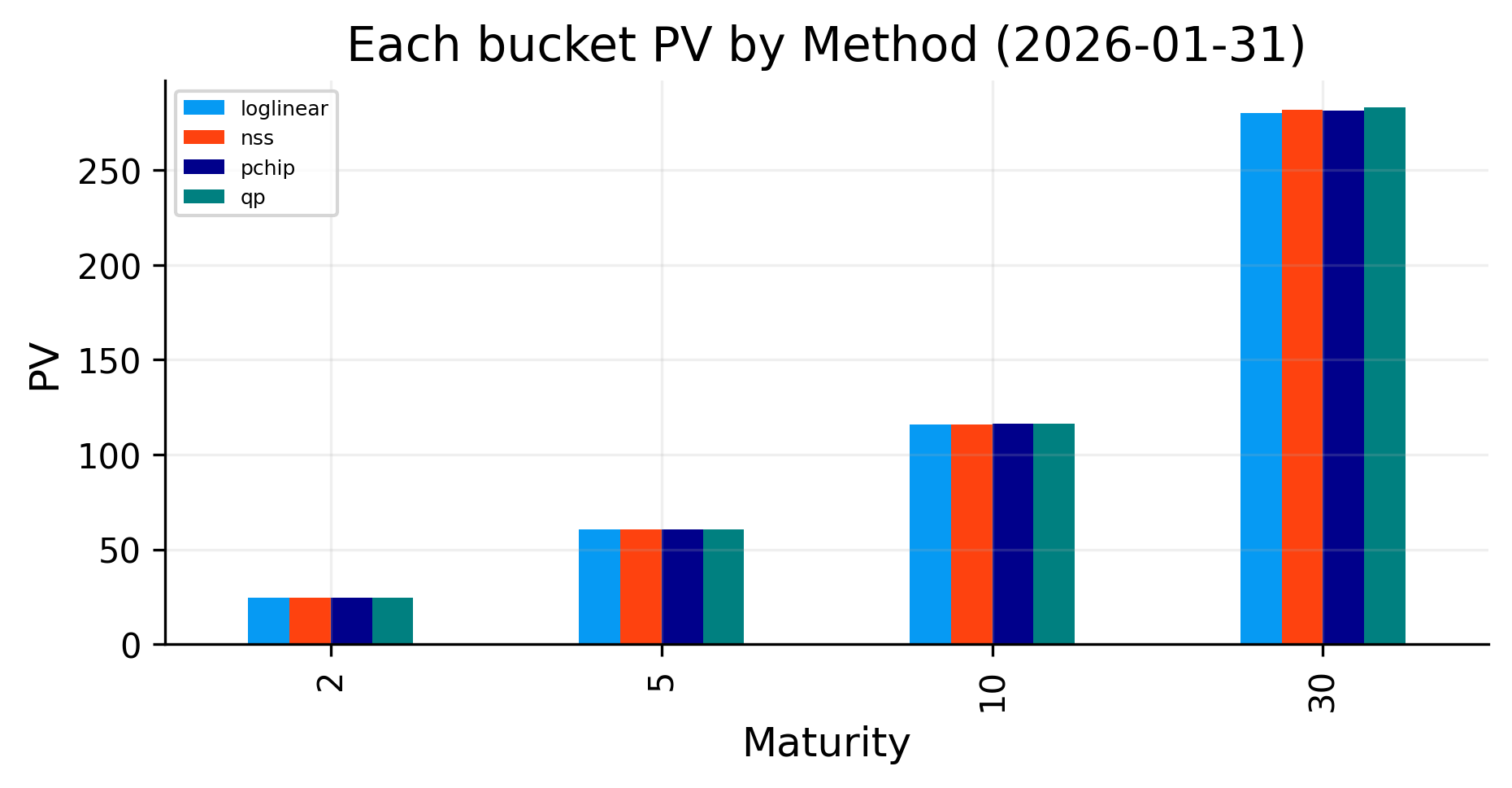

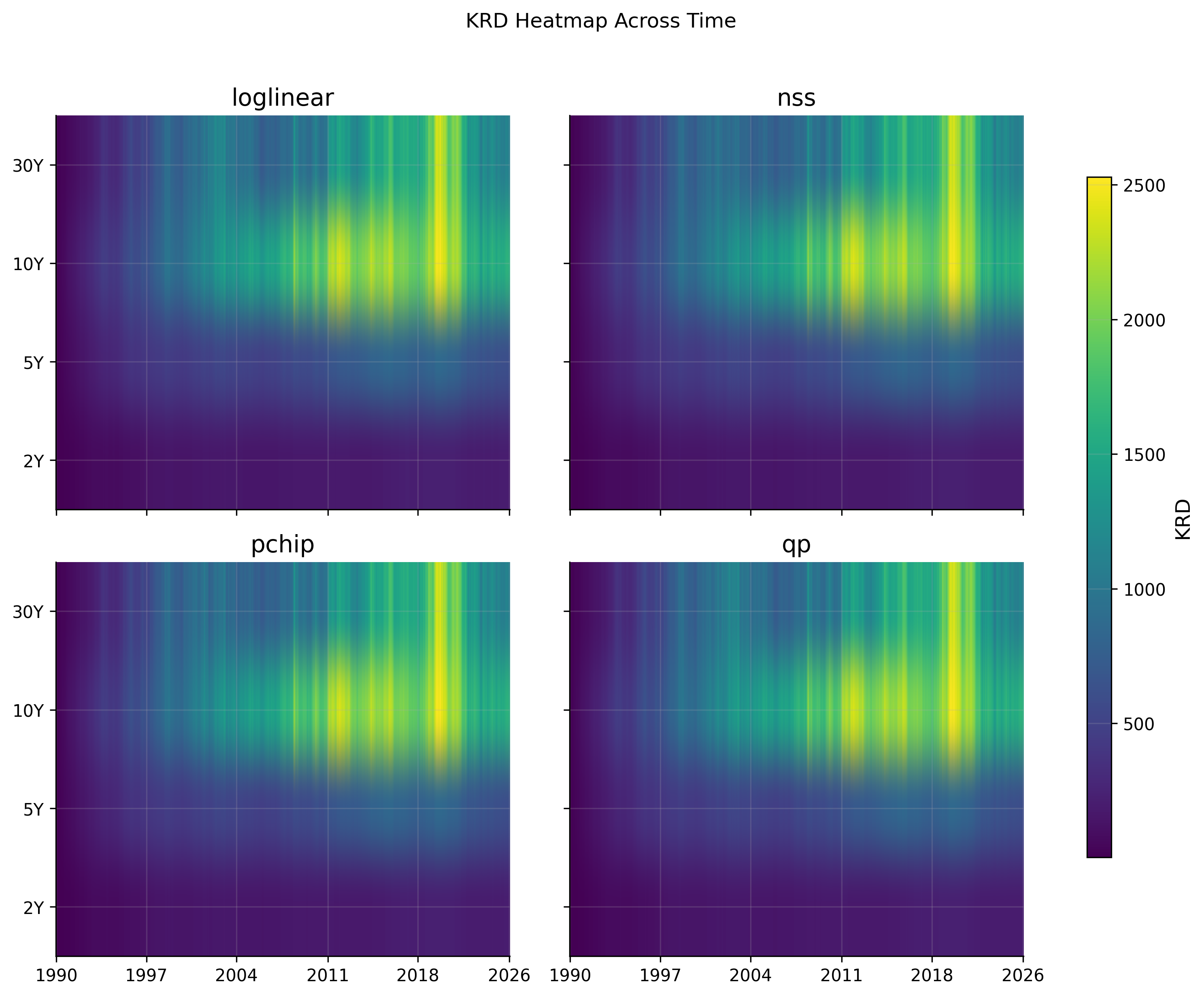

```python
keys = [2, 5, 10, 30]  # key maturities (years) for the synthetic book and the KRDs
freq = 2               # coupons per year (semiannual)
short_end = "continuous"

asof = fi.resolve_asof(par.index)
pillars = fi.bootstrap_pillars(par.loc[asof], asof=asof, tenor_cols=tenor_cols, freq=freq, short_end=short_end)
curves = fi.fit_curves(pillars, methods=methods, freq=freq)

# Zero/discount/forward grids extend to at least 30 years; the par-fit grid spans the pillars.
curve_t_max = max(30.0, float(pillars.T.max()))
plot_grid = np.linspace(max(1 / 12, float(pillars.T.min())), float(pillars.T.max()), 250)
par_fit_table = fi.par_curve_table(curves, grid=plot_grid, freq=freq)
```
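`fi.bootstrap_pillars` turns the as-of par curve into pillars, but its internals (including what `short_end="continuous"` does) are not shown. The sketch below illustrates the textbook par-yield bootstrap on a semiannual coupon grid under stated assumptions: every par bond prices at 1 and pays `c / freq` per period, par yields are linearly interpolated onto the coupon dates, and short-end handling is ignored. It is not quantfinlab's algorithm.

```python
import numpy as np


def bootstrap_par_to_discount(tenors, par_yields, freq=2):
    """Bootstrap discount factors on the coupon grid from par yields (illustrative sketch).

    Assumptions: par bonds price at 1 and pay par_yield / freq per period; par yields
    are linearly interpolated onto the coupon grid (flat before the first tenor).
    """
    tenors = np.asarray(tenors, dtype=float)
    par_yields = np.asarray(par_yields, dtype=float)
    n_periods = int(round(tenors.max() * freq))
    grid = np.arange(1, n_periods + 1) / freq       # coupon dates in years
    coupons = np.interp(grid, tenors, par_yields)   # par yield for the bond maturing at each date
    dfs = np.empty_like(grid)
    annuity = 0.0                                   # sum of earlier discount factors
    for i, c in enumerate(coupons):
        # Par condition: 1 = (c / freq) * (annuity + D_i) + D_i, solved for D_i
        dfs[i] = (1.0 - c / freq * annuity) / (1.0 + c / freq)
        annuity += dfs[i]
    zeros = -np.log(dfs) / grid                      # continuously compounded zero rates
    return grid, dfs, zeros
```

As a sanity check, a flat 2% semiannual par curve should give `D(t_n) = 1.01**-n`, i.e. a flat continuous zero rate of `2 * ln(1.01)`.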

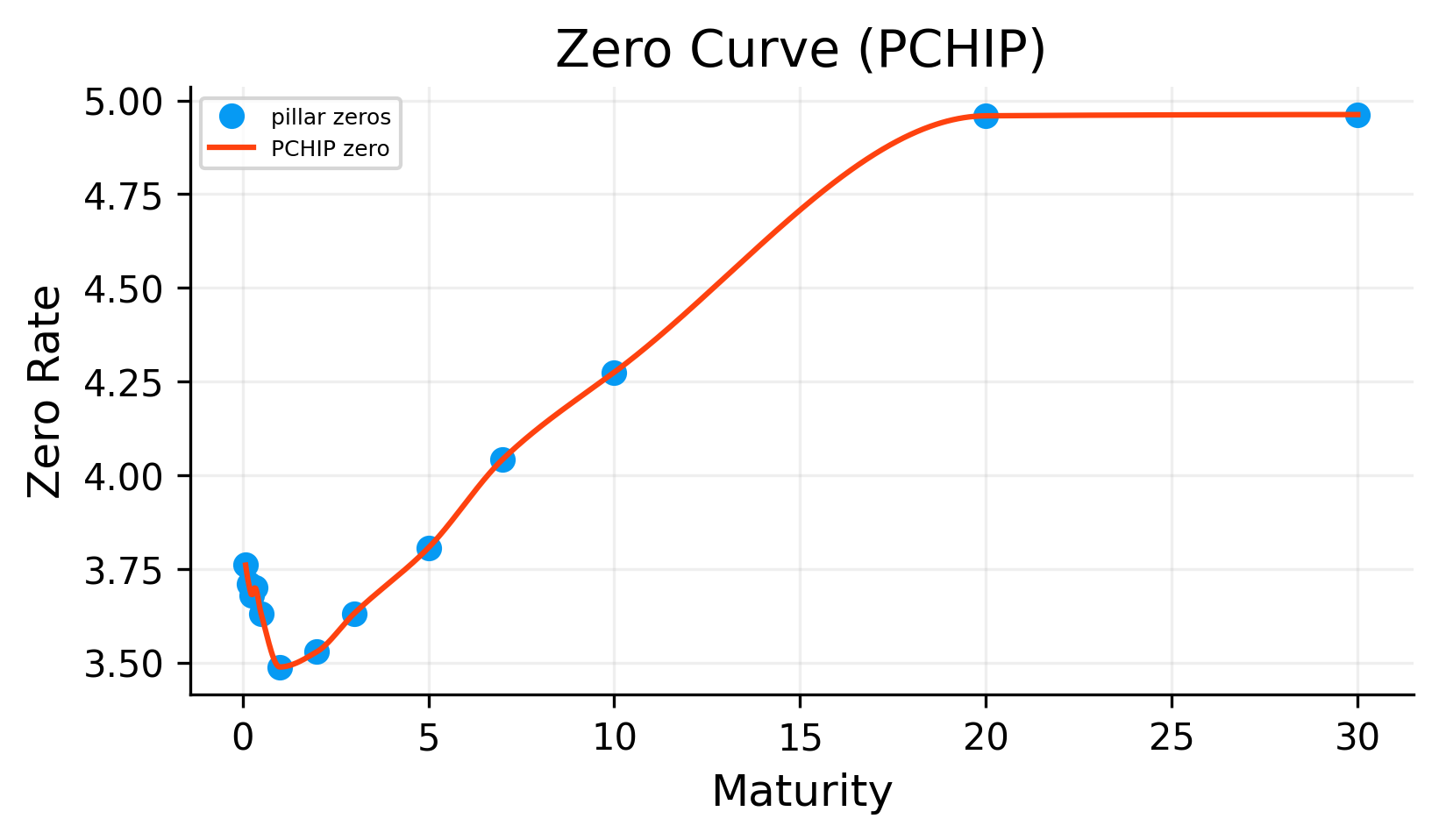

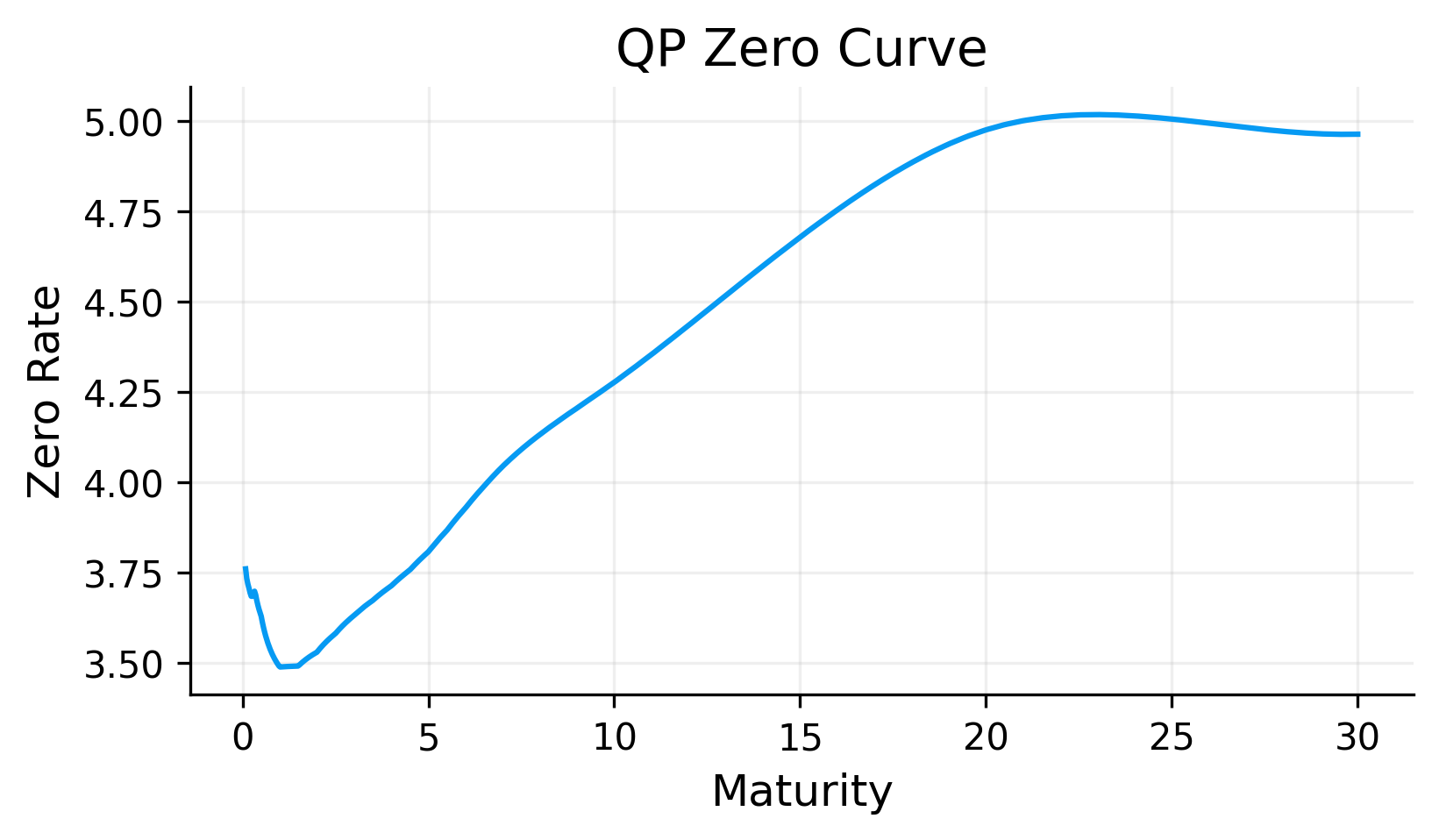

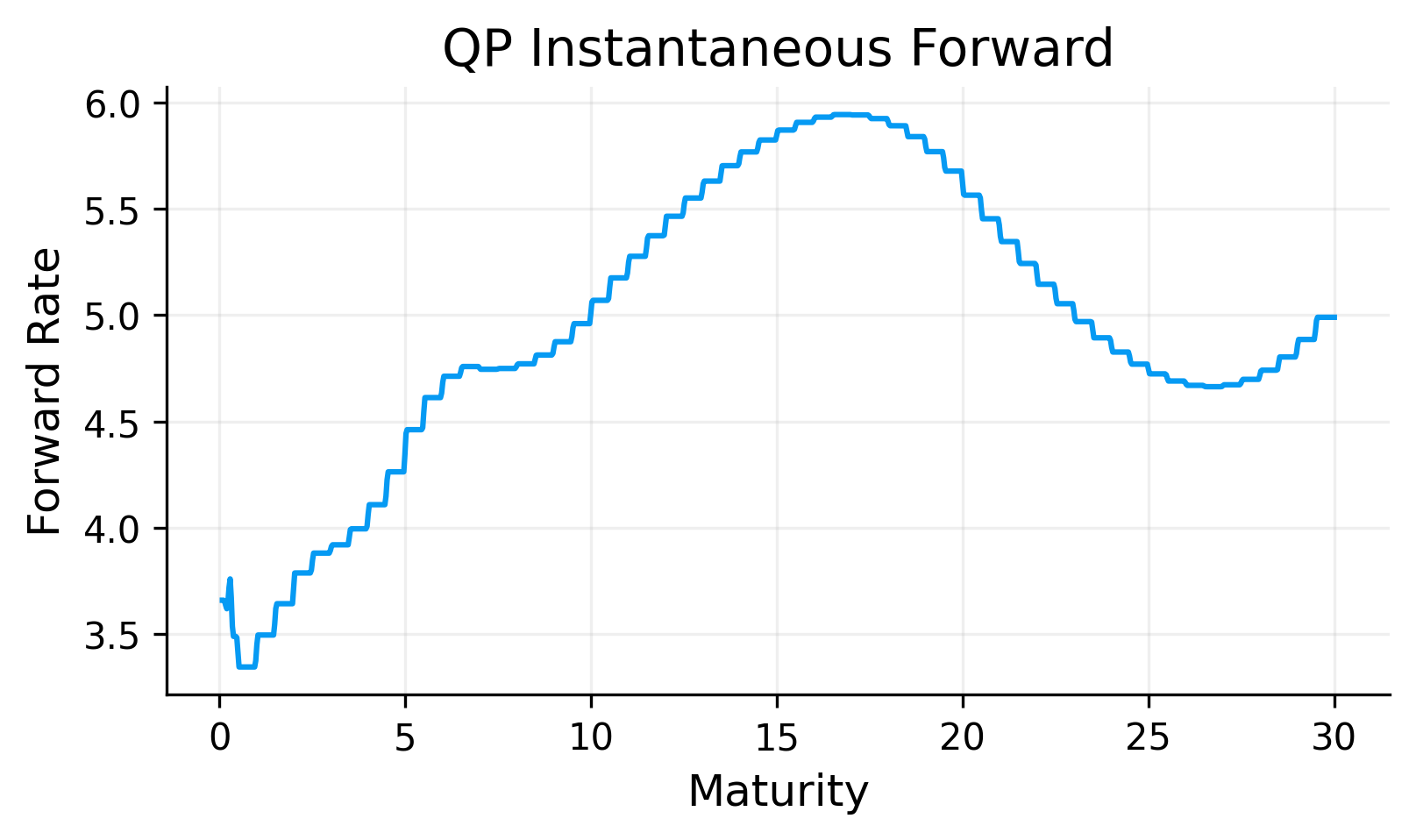

```python
zero_table = fi.zero_curve_table(curves, t_min=1 / 12, t_max=curve_t_max, points=400)
```
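Of the four methods, `nss` (Nelson-Siegel-Svensson) is the only parametric one. How quantfinlab fits it (target quantity, decay parameters) is not shown; the sketch below assumes the fit is on zero rates with both decay parameters `tau1`, `tau2` held fixed (the values 1.5 and 8.0 are arbitrary). With the taus fixed the betas enter linearly, so ordinary least squares suffices; a full NSS fit would also optimize the taus nonlinearly.

```python
import numpy as np


def nss_loadings(t, tau1, tau2):
    """Nelson-Siegel-Svensson factor loadings for maturities t > 0 (years)."""
    t = np.asarray(t, dtype=float)
    x1, x2 = t / tau1, t / tau2
    slope = -np.expm1(-x1) / x1                  # (1 - e^{-x1}) / x1, computed stably
    curv1 = slope - np.exp(-x1)
    curv2 = -np.expm1(-x2) / x2 - np.exp(-x2)
    return np.column_stack([np.ones_like(t), slope, curv1, curv2])


def nss_zero(t, betas, tau1, tau2):
    """Zero rate z(t) = X(t) @ betas; z -> beta0 + beta1 as t -> 0 and z -> beta0 as t -> inf."""
    return nss_loadings(t, tau1, tau2) @ np.asarray(betas, dtype=float)


def fit_nss_betas(t, zeros, tau1=1.5, tau2=8.0):
    """OLS fit of the four betas with tau1 and tau2 held fixed (assumed values)."""
    X = nss_loadings(t, tau1, tau2)
    betas, *_ = np.linalg.lstsq(X, np.asarray(zeros, dtype=float), rcond=None)
    return betas
```

Because the model has only four shape parameters, it smooths across pillars rather than passing through them, which is the main contrast with the interpolating methods.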

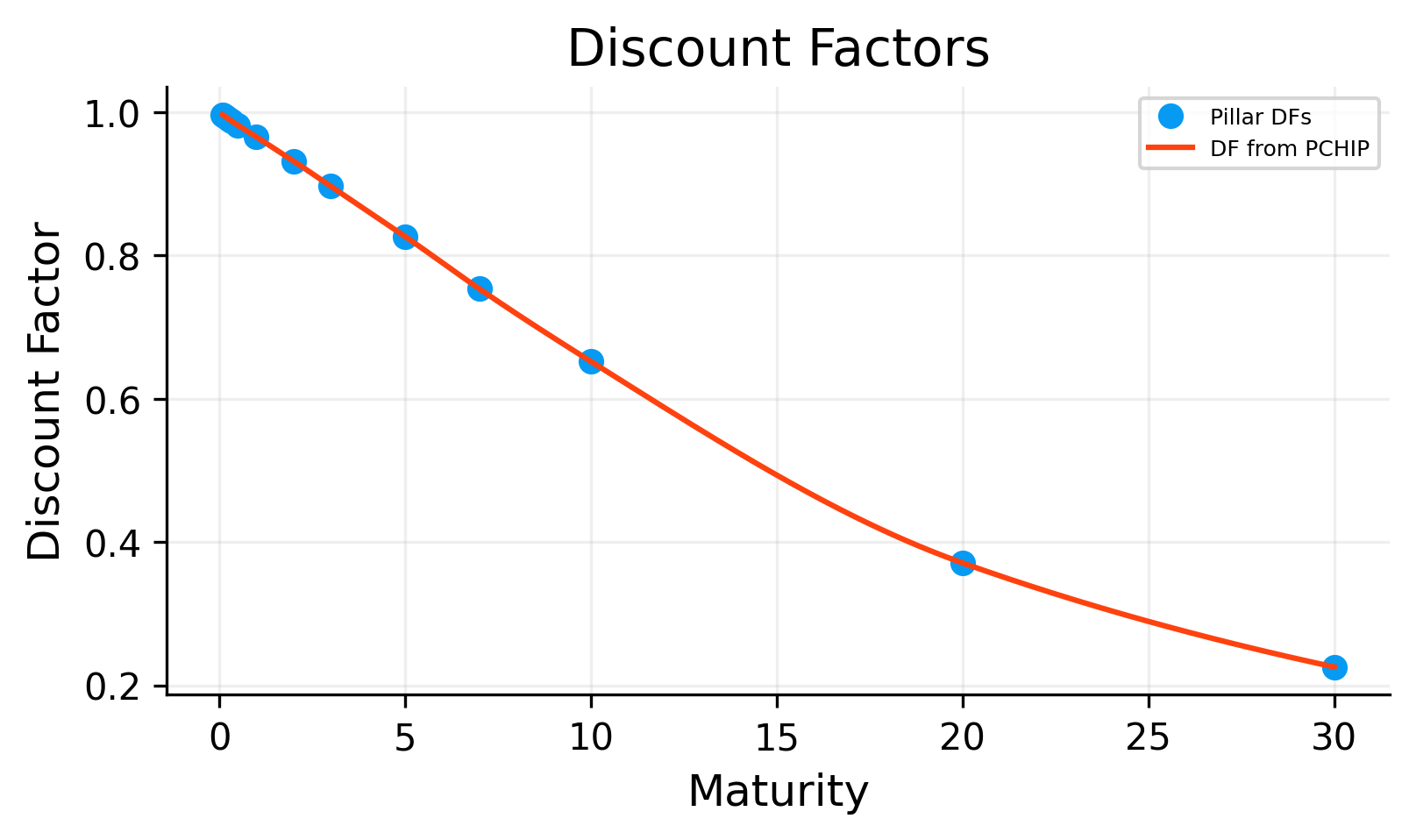

```python
df_table = fi.discount_curve_table(curves, t_min=1 / 12, t_max=curve_t_max, points=400)
```
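The `loglinear` method name suggests log-linear interpolation of discount factors; quantfinlab's exact treatment is not shown. A minimal sketch of that standard scheme, assuming pillars with known discount factors and an anchor `D(0) = 1`: interpolating `ln D(t)` linearly makes the instantaneous forward rate piecewise constant between pillars.

```python
import numpy as np


def loglinear_discount(t, pillar_t, pillar_df):
    """Log-linear interpolation of discount factors (illustrative sketch).

    ln D(t) is interpolated linearly between pillars, anchored at D(0) = 1.
    Beyond the last pillar np.interp holds ln D flat (a zero forward rate),
    so this sketch should only be evaluated inside the pillar range.
    """
    knots_t = np.concatenate(([0.0], np.asarray(pillar_t, dtype=float)))
    knots_log_df = np.concatenate(([0.0], np.log(np.asarray(pillar_df, dtype=float))))
    return np.exp(np.interp(t, knots_t, knots_log_df))
```

For example, with pillars `D(1) = e^{-0.01}` and `D(2) = e^{-0.03}`, the midpoint is `D(1.5) = e^{-0.02}` and the forward rate between 1 and 2 years is a constant 2%.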

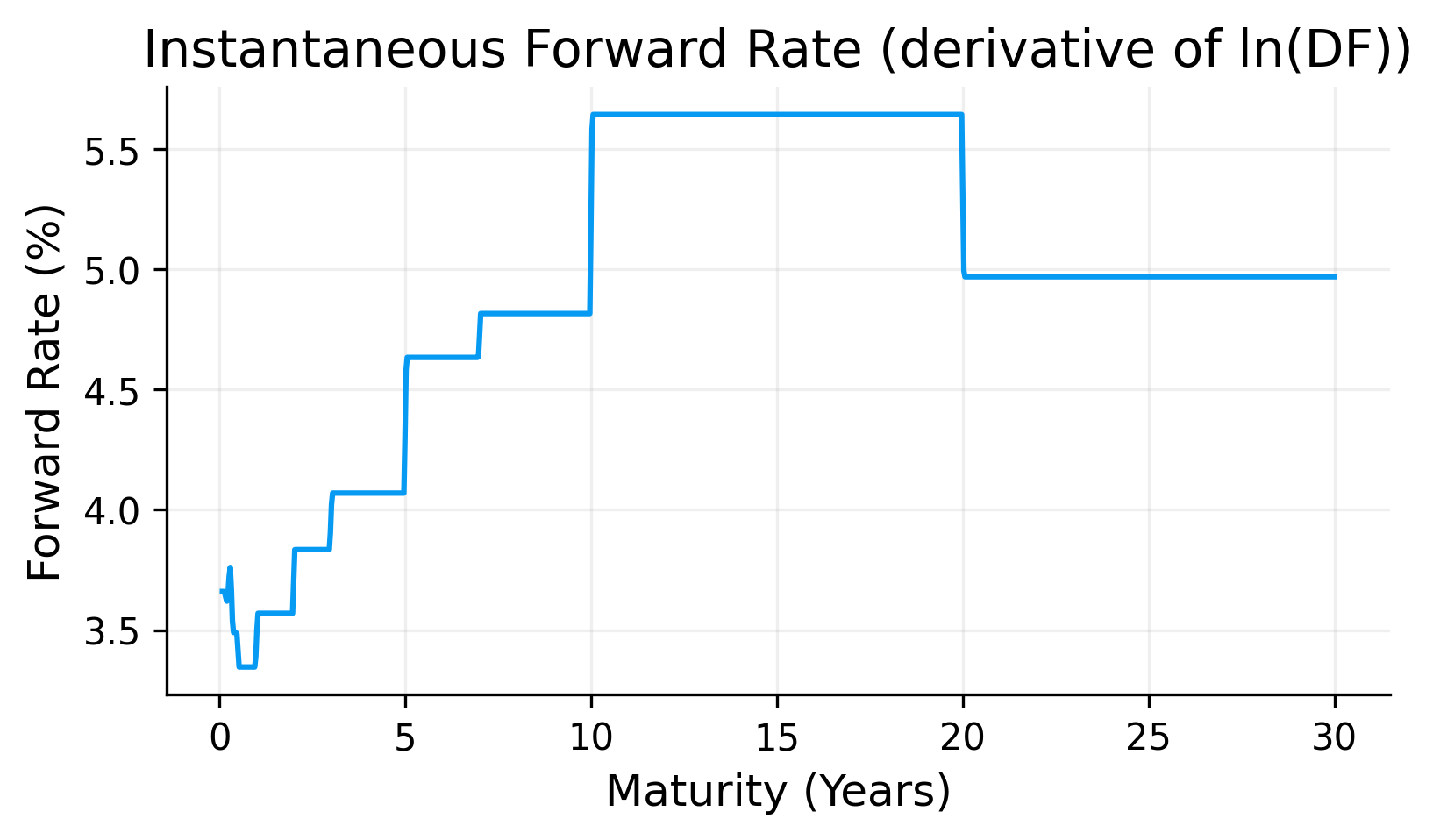

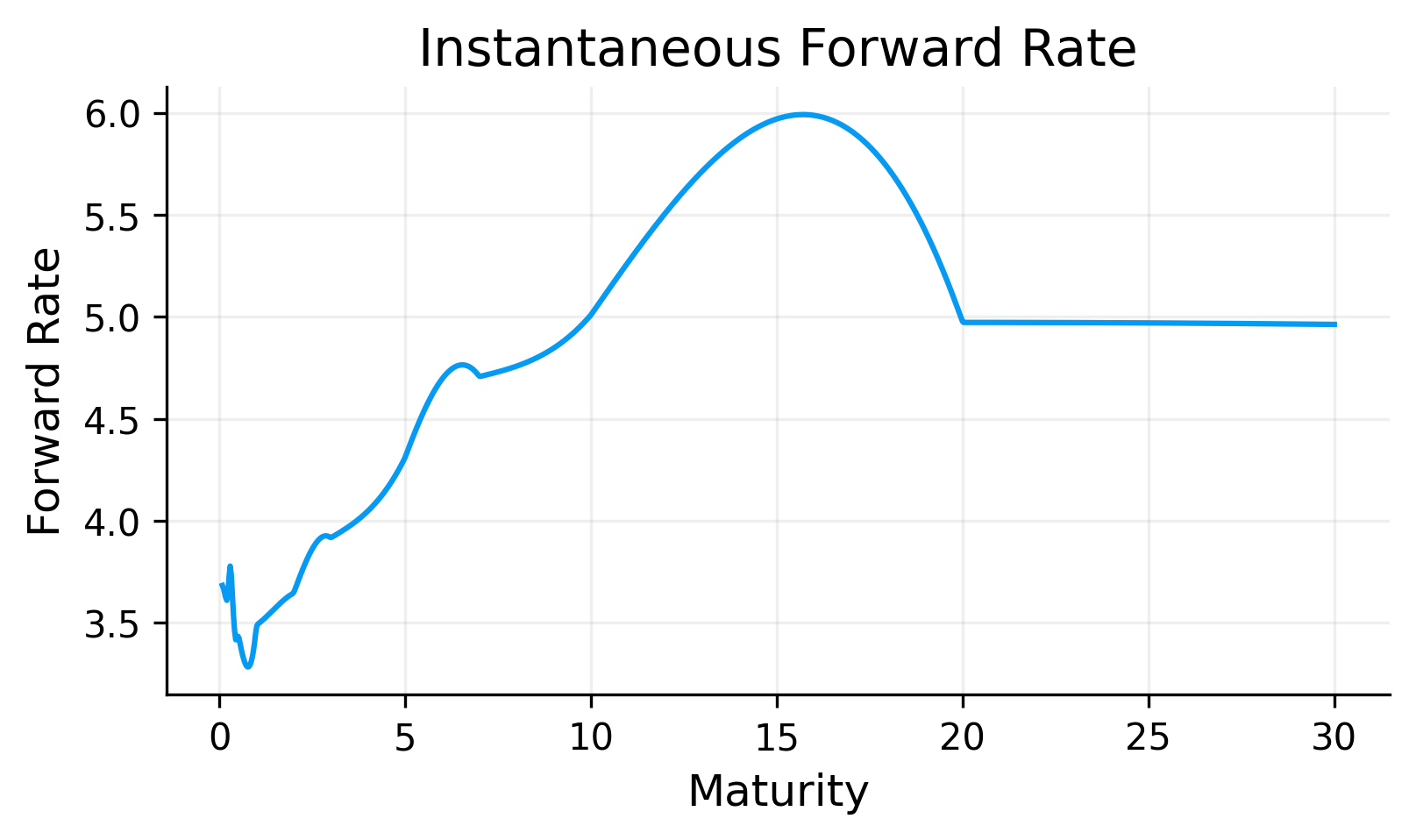

```python
fwd_table = fi.forward_curve_table(curves, t_min=1 / 12, t_max=curve_t_max, points=400)
```
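Forward rates follow from the slope of `ln D(t)`, so they expose interpolation artifacts that are hard to see in the zero or discount curves. A sketch of the relationship on a discrete grid, assuming continuous compounding (how `fi.forward_curve_table` computes its forwards is not shown):

```python
import numpy as np


def forward_rates(t, df):
    """Continuously compounded forwards between consecutive points of an increasing grid.

    f(t_i, t_{i+1}) = ln(D_i / D_{i+1}) / (t_{i+1} - t_i), which approaches the
    instantaneous forward -d ln D / dt as the grid is refined.
    """
    t = np.asarray(t, dtype=float)
    log_df = np.log(np.asarray(df, dtype=float))
    return -np.diff(log_df) / np.diff(t)
```

With a flat 2% zero curve every forward is 2%; with `z(t) = 0.01 + 0.001 t`, the 1y-to-2y forward is `0.01 + 0.001 * (1 + 2) = 0.013`.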

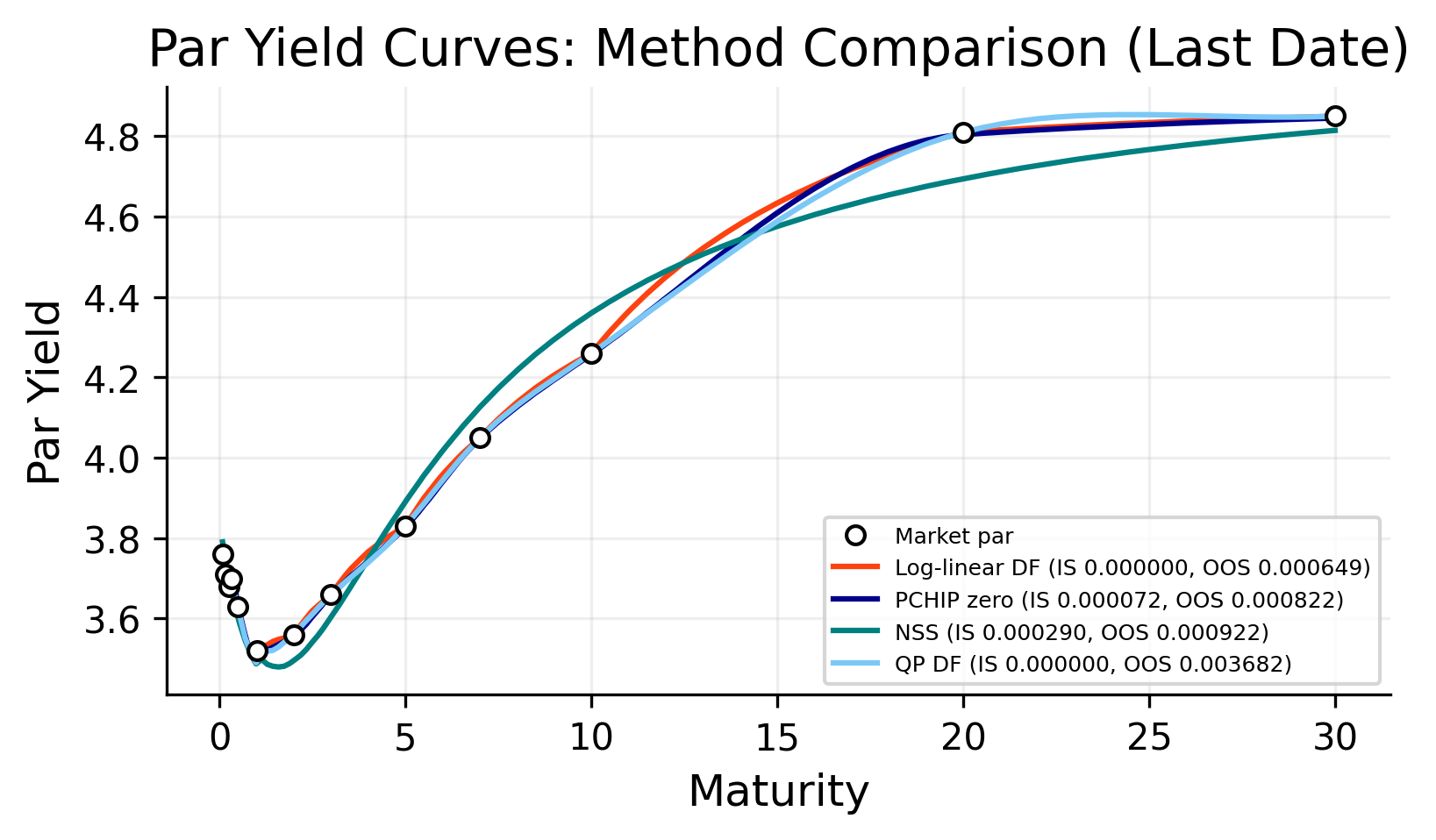

```python
# In-sample and out-of-sample (held-out tenors) RMSE over the last 100 observations.
rmse = fi.rmse_backtest(par.tail(100), methods=methods, holdouts=holdouts, freq=freq, short_end=short_end,
                        tenor_cols=tenor_cols)
rmse_sorted = fi.sort_rmse_table(rmse, methods=methods)
```
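The out-of-sample column measures how well each method predicts tenors it was not fitted to. A generic sketch of a holdout RMSE, assuming one yield curve per row and a pluggable fitting function (`holdout_rmse` is hypothetical; linear interpolation via `np.interp` is only a placeholder model, not one of the four methods):

```python
import numpy as np


def holdout_rmse(tenors, curves, holdout_idx, fit_and_predict=np.interp):
    """Out-of-sample RMSE from dropping holdout tenors and re-predicting them.

    tenors: 1-D increasing maturities in years; curves: 2-D array, one row per date.
    fit_and_predict(x_new, x_fit, y_fit) must return predictions at x_new.
    """
    tenors = np.asarray(tenors, dtype=float)
    keep = np.ones(len(tenors), dtype=bool)
    keep[list(holdout_idx)] = False
    errors = []
    for row in np.asarray(curves, dtype=float):
        pred = fit_and_predict(tenors[~keep], tenors[keep], row[keep])
        errors.append(pred - row[~keep])
    errors = np.concatenate(errors)
    return float(np.sqrt(np.mean(errors**2)))
```

A linear placeholder reproduces interior holdouts exactly on a linear curve and leaves a positive error on a curved one, which is the kind of gap the IS/OOS comparison is meant to reveal.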

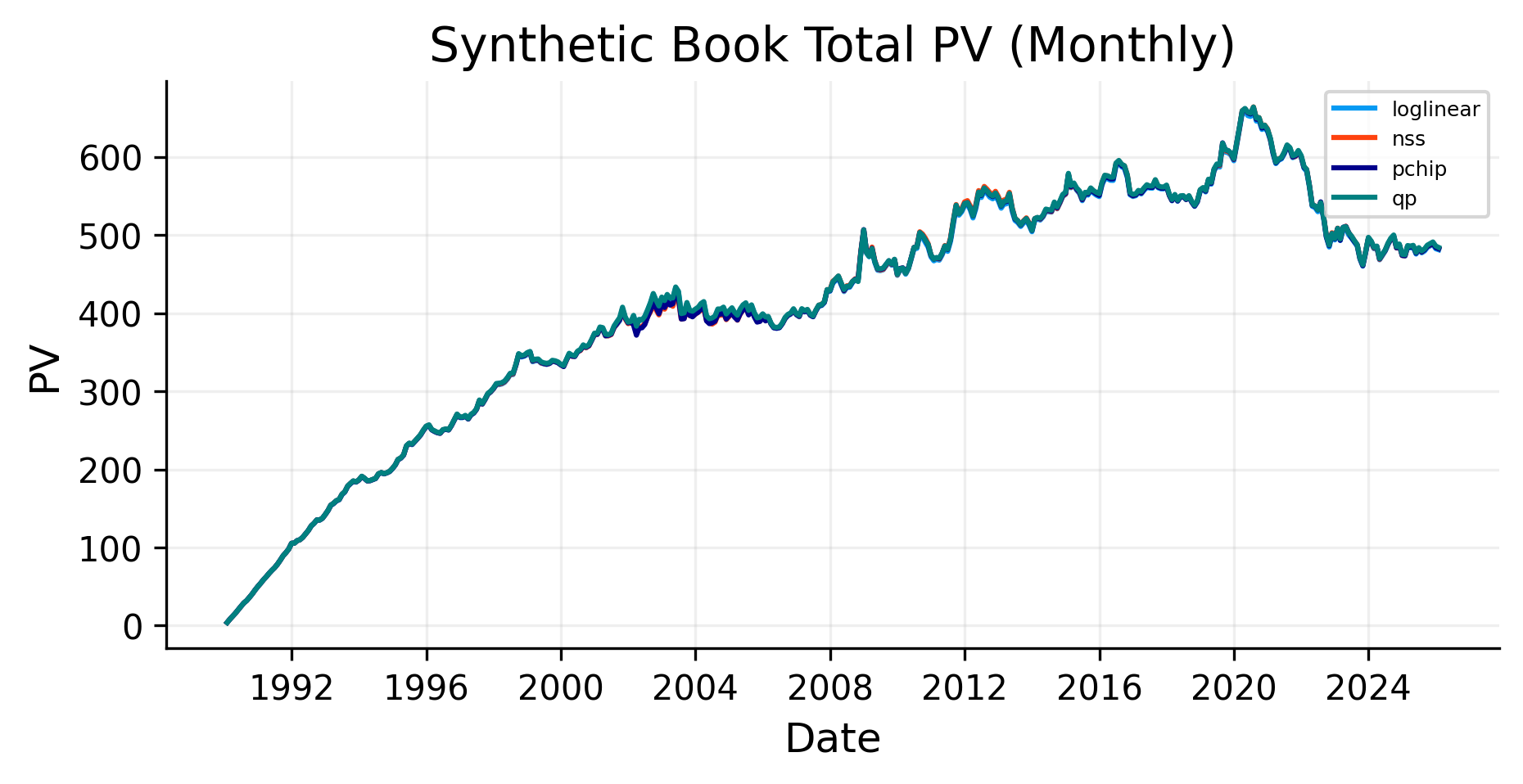

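```python
# Month-end snapshots ("ME" is the month-end alias in pandas >= 2.2) feed the synthetic
# issuance book at the key maturities.
month_end = par.resample("ME").last().dropna(how="all")
book = fi.synthetic_issuance_book(month_end, maturities=keys, freq=freq)
```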
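The structure of the book built by `fi.synthetic_issuance_book` is not shown. One plausible reading, sketched here purely as an assumption, is that each month end issues one unit-notional bond per key maturity with its coupon set at that day's par yield, so every bond prices at par on issue. The function name and the `notional` column are hypothetical.

```python
import pandas as pd


def issuance_book_sketch(month_end_par: pd.DataFrame, maturities) -> pd.DataFrame:
    """Issue one unit-notional par bond per maturity at every month end (hypothetical layout)."""
    rows = []
    for date, row in month_end_par.iterrows():
        for m in maturities:
            col = f"{m}Y"
            if col in row.index and pd.notna(row[col]):
                rows.append({
                    "issue_date": date,
                    "maturity_date": date + pd.DateOffset(years=m),
                    "coupon": float(row[col]),  # coupon = par yield, so issue price is 1
                    "notional": 1.0,
                })
    return pd.DataFrame(rows)
```

Dates with a missing par yield for a maturity simply skip that issue rather than inventing a coupon.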

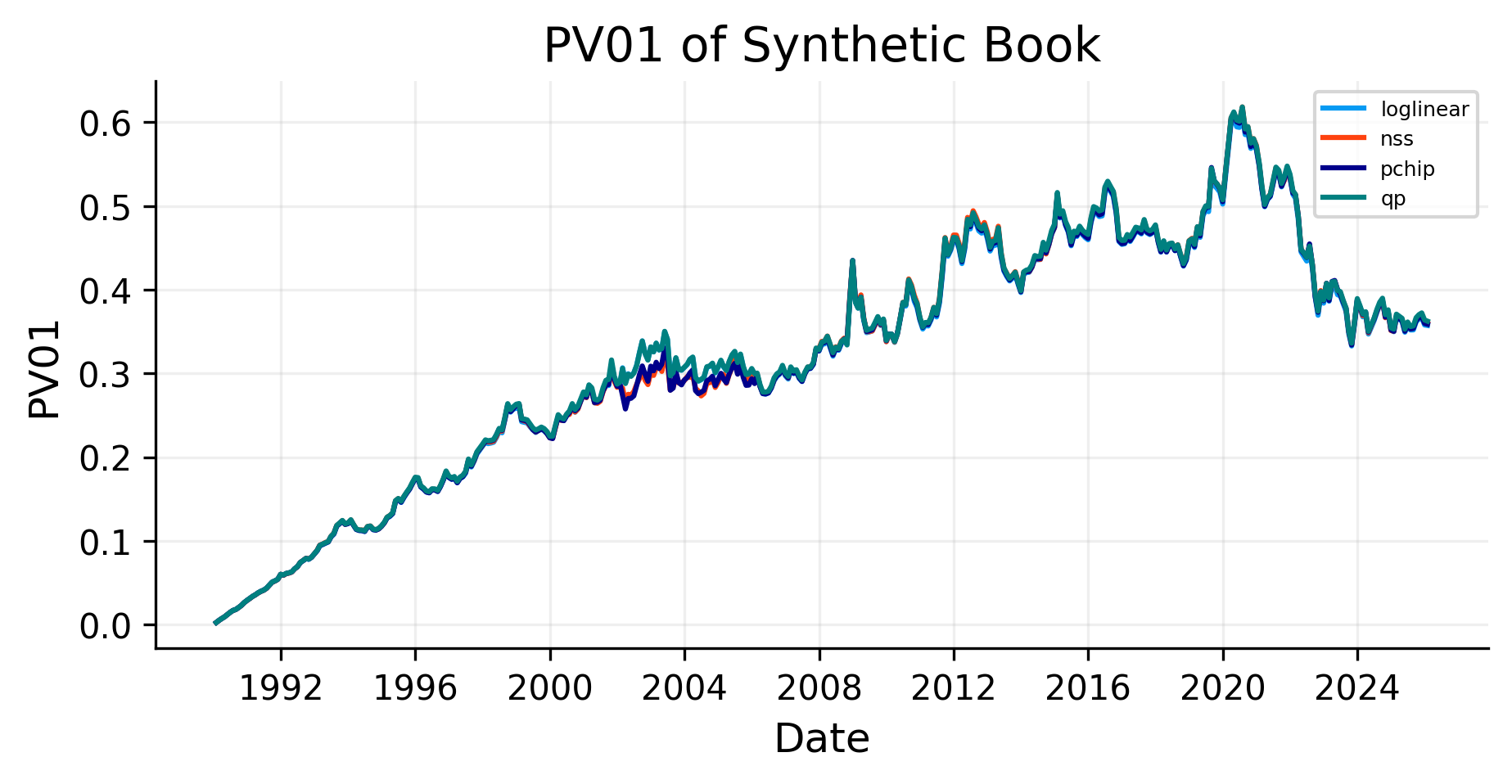

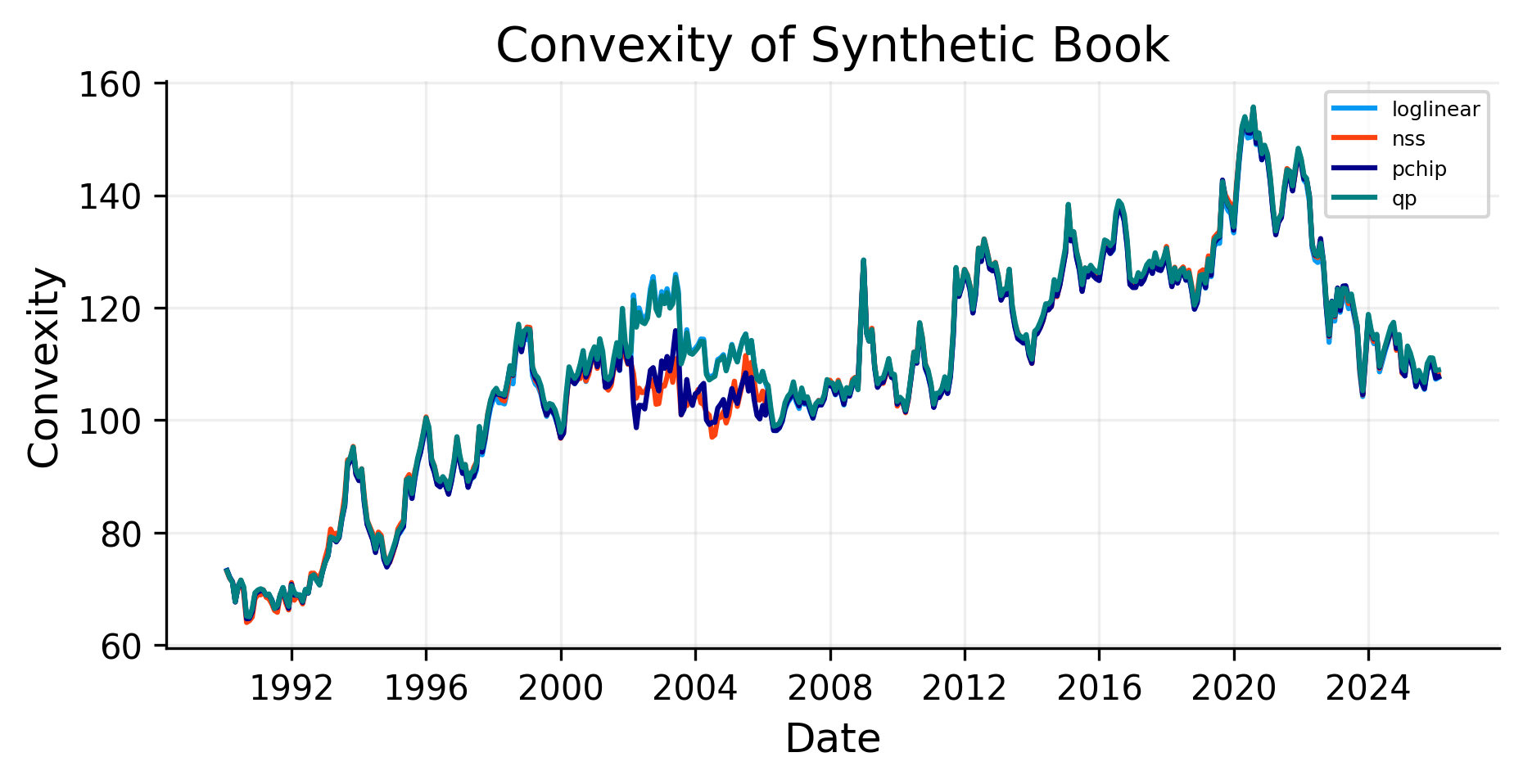

```python
# Curves for each month-end valuation date, used to value and risk the book (1bp bumps).
curves_for_dates = fi.curves_by_valuation_date(month_end.index, par, methods=methods, freq=freq,
                                               short_end=short_end, tenor_cols=tenor_cols)
pv_total, pv_buckets = fi.book_pv_timeseries(book, curves_for_dates)
risk_df = fi.book_parallel_risk_timeseries(book, curves_for_dates, bump_bp=1.0)
krd_df = fi.book_krd_timeseries(book, curves_for_dates, keys=keys, bump_bp=1.0)
metrics = fi.make_book_metrics(pv_total, pv_buckets, risk_df)
```
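Parallel risk and key-rate durations are computed by bumping the curve 1bp and repricing, but the exact bump shapes and sign conventions inside `fi` are not shown. The sketch below assumes a continuously compounded zero curve `zero_fn(t)`: PV01 and convexity come from central differences of a parallel shift, and key-rate PV01s from tent-shaped bumps centred on each key tenor (a common convention, assumed here). All function names are hypothetical.

```python
import numpy as np


def pv(cf_times, cfs, zero_fn):
    """Present value of cash flows using continuously compounded zero rates zero_fn(t)."""
    cf_times = np.asarray(cf_times, dtype=float)
    cfs = np.asarray(cfs, dtype=float)
    return float(np.sum(cfs * np.exp(-zero_fn(cf_times) * cf_times)))


def parallel_risk(cf_times, cfs, zero_fn, bump_bp=1.0):
    """Central-difference PV01 (price gain per 1bp fall in rates) and convexity."""
    dy = bump_bp * 1e-4
    p0 = pv(cf_times, cfs, zero_fn)
    p_up = pv(cf_times, cfs, lambda t: zero_fn(t) + dy)
    p_dn = pv(cf_times, cfs, lambda t: zero_fn(t) - dy)
    pv01 = (p_dn - p_up) / (2.0 * bump_bp)
    convexity = (p_up + p_dn - 2.0 * p0) / (p0 * dy**2)
    return p0, pv01, convexity


def key_rate_pv01(cf_times, cfs, zero_fn, keys, bump_bp=1.0):
    """One-sided key-rate PV01s from tent-shaped bumps centred on each key tenor."""
    dy = bump_bp * 1e-4
    keys = np.asarray(keys, dtype=float)
    p0 = pv(cf_times, cfs, zero_fn)
    out = {}
    for k in range(len(keys)):
        weights = np.zeros(len(keys))
        weights[k] = 1.0

        # np.interp turns the one-hot weights into a tent around keys[k] and holds the end
        # weights flat outside the key range, so the tents of all keys sum to a parallel shift.
        def bumped(t, w=weights):
            return zero_fn(t) + dy * np.interp(t, keys, w)

        out[float(keys[k])] = (p0 - pv(cf_times, cfs, bumped)) / bump_bp
    return out
```

Because the tents sum to one at every maturity, the key-rate PV01s add up to approximately the parallel PV01 (up to second-order terms), and a key beyond the last cash flow contributes nothing.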

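```python
# A 10Y bond whose coupon is taken from the as-of par curve, priced and risked under each method.
bond, used_tenor = fi.bond_from_par_curve_row(par.loc[asof], maturity_years=10, tenor_cols=tenor_cols, freq=freq)
bond_tbl = fi.bond_price_and_risk(bond, curves, bump_bp=1.0, key_tenors=keys).reindex(methods)

# Four evenly spaced dates (first, one third, two thirds, last) for the curve snapshots.
n = len(par.index)
sample_dates = [par.index[0], par.index[n // 3], par.index[(2 * n) // 3], par.index[-1]]
```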

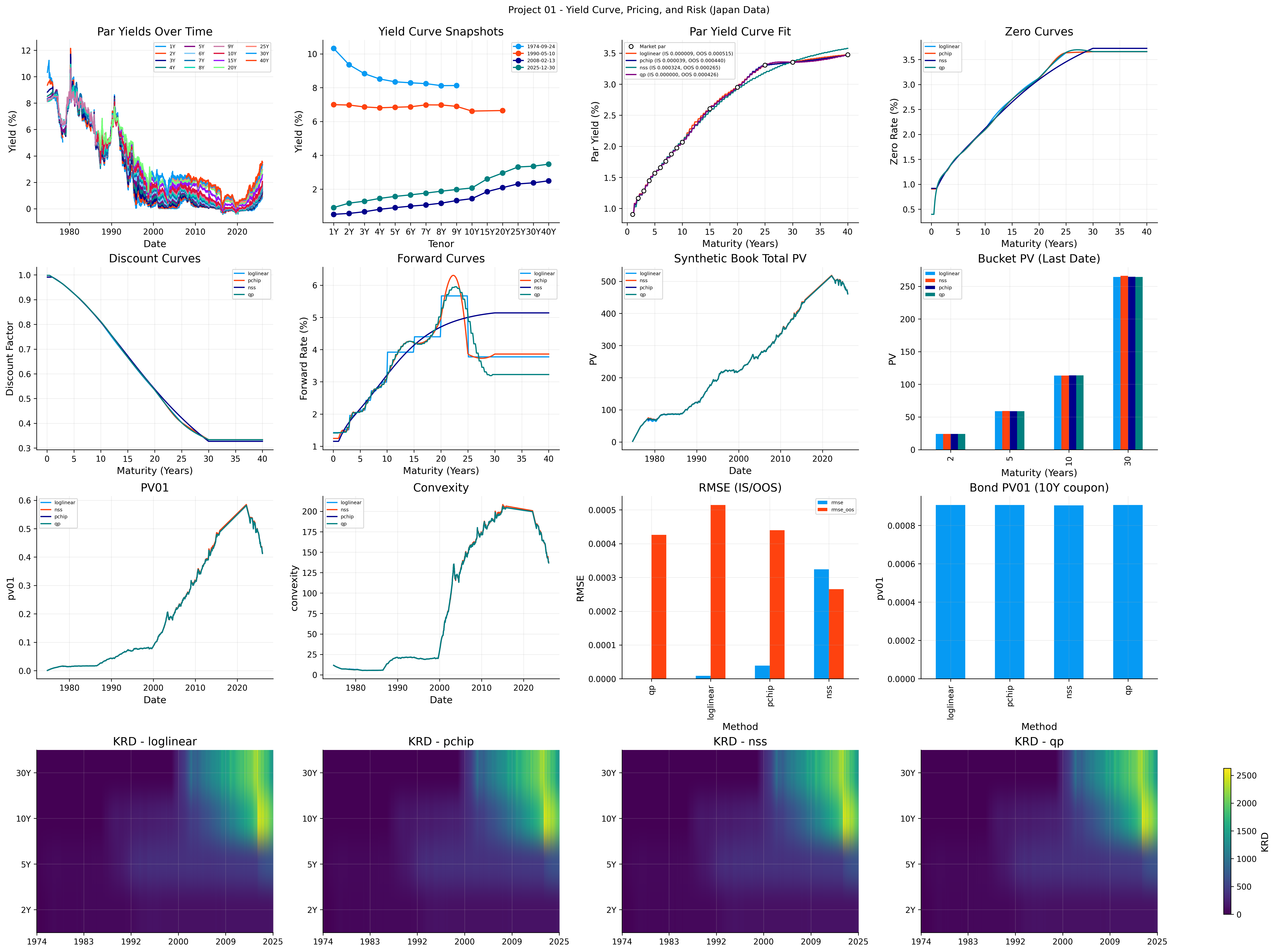

```python
# 4x4 grid of panels; the bottom row holds one KRD heatmap per method.
fig = plt.figure(figsize=(22, 16), constrained_layout=True)
gs = fig.add_gridspec(4, 4)
ax_all = fig.add_subplot(gs[0, 0])
```

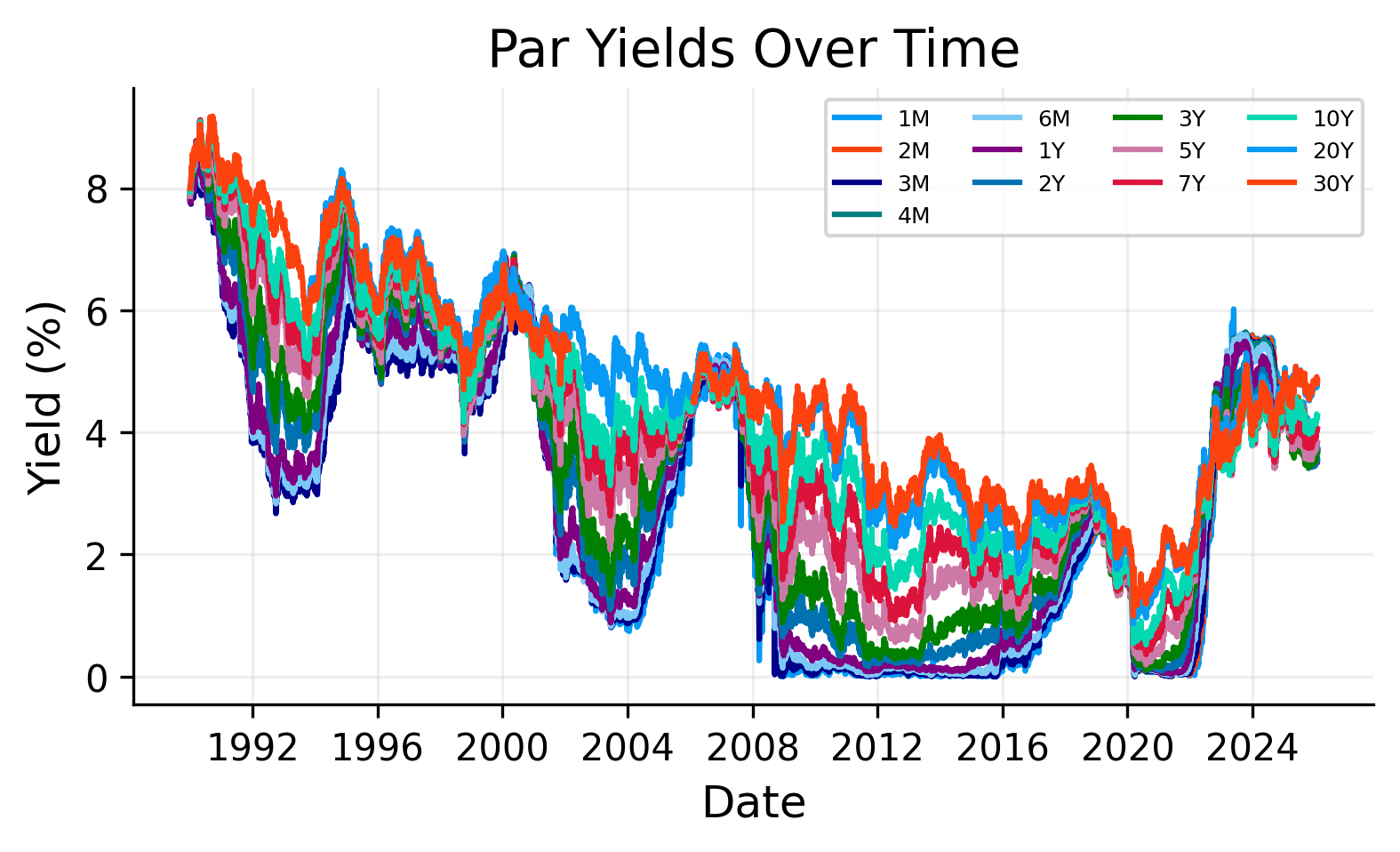

```python
pl.plot_par_yields_history(ax_all, par, title="Par Yields Over Time")
ax_snap = fig.add_subplot(gs[0, 1])
```

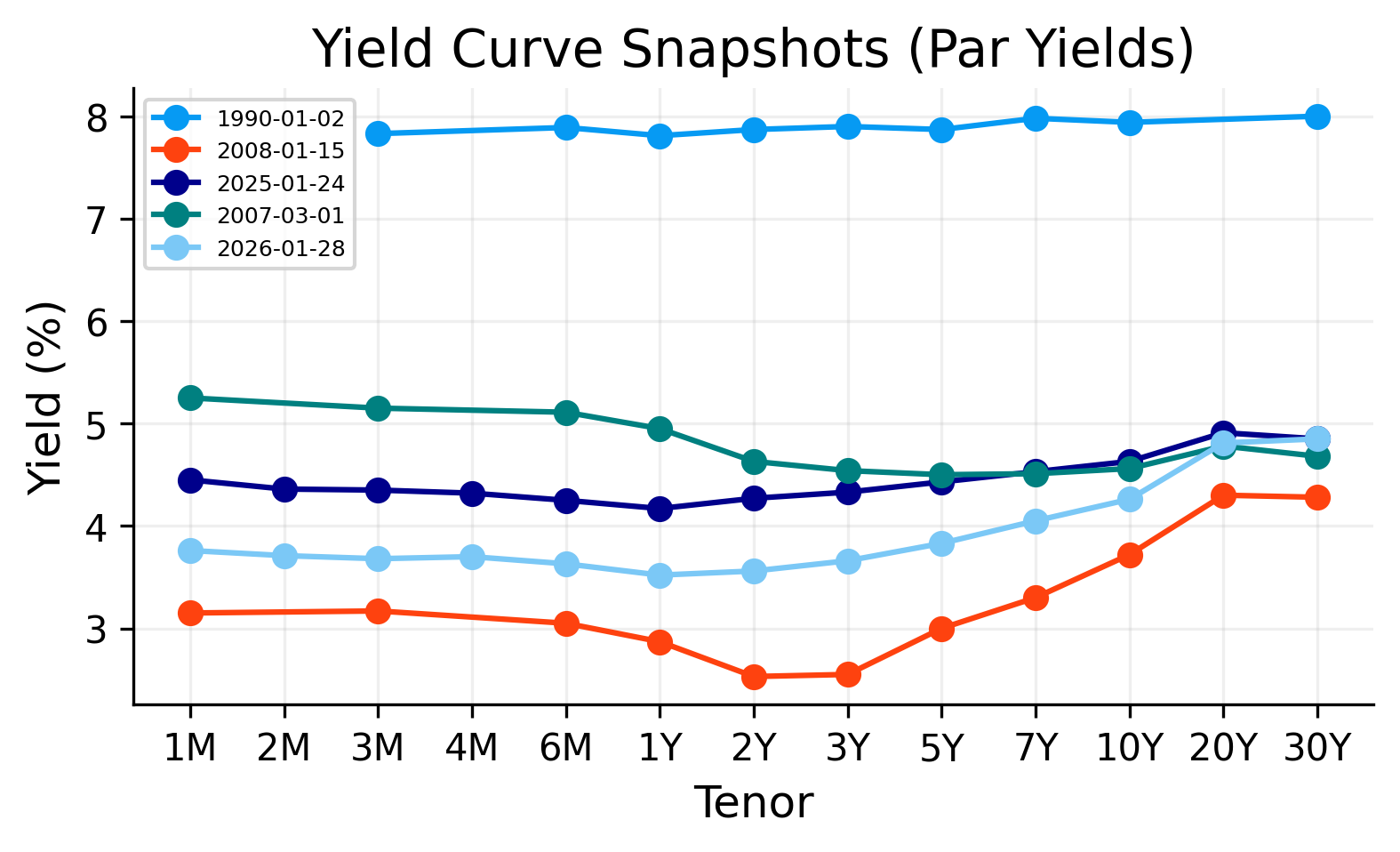

```python
pl.plot_yield_curve_snapshots(ax_snap, par, tenor_cols=tenor_cols, sample_dates=sample_dates, title="Yield Curve Snapshots")
```

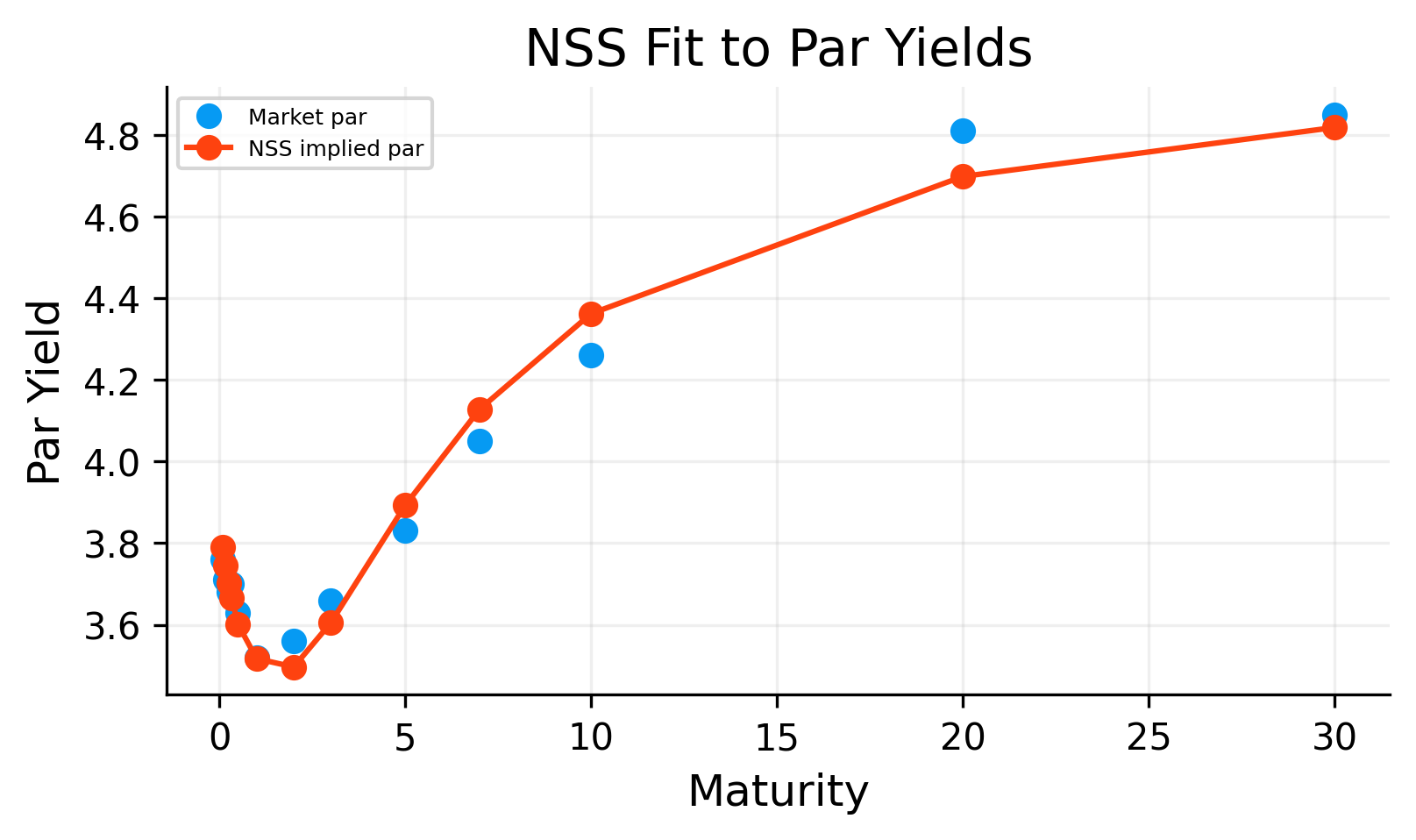

```python
ax_par = fig.add_subplot(gs[0, 2])
pl.draw_market_par_points(ax_par, pillars.T, pillars.par)

# Legend labels carry each method's in-sample and out-of-sample RMSE.
rmse_label_map = {
    m: f"{m} (IS {rmse.loc[m, 'rmse']:.6f}, OOS {rmse.loc[m, 'rmse_oos']:.6f})" if m in rmse.index else m
    for m in methods
}
pl.draw_curve_lines(ax_par, par_fit_table, scale=100.0, label_map=rmse_label_map)  # decimals -> percent
pl.style_axis(ax_par, title="Par Yield Curve Fit", xlabel="Maturity (Years)", ylabel="Par Yield (%)")
```

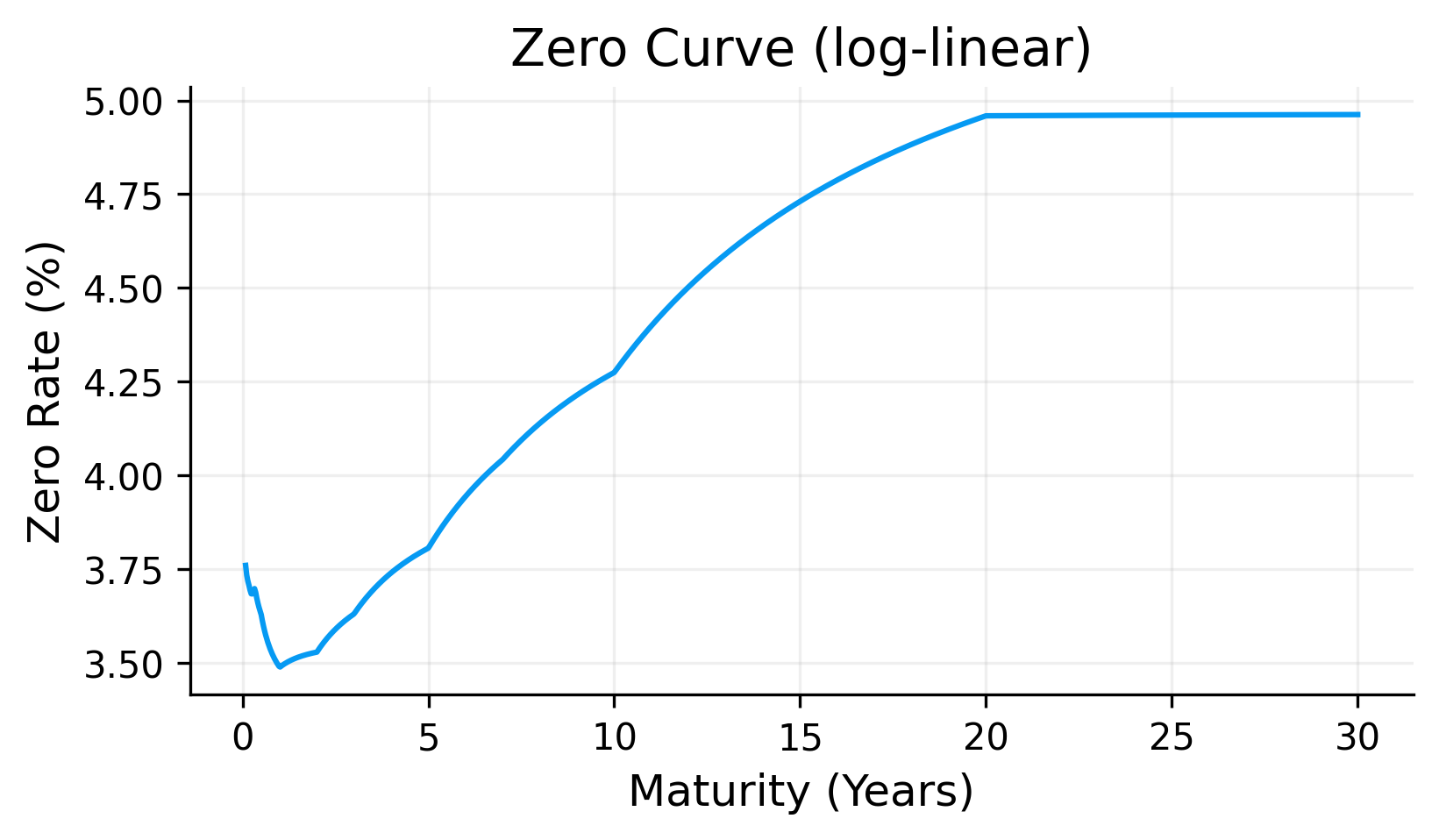

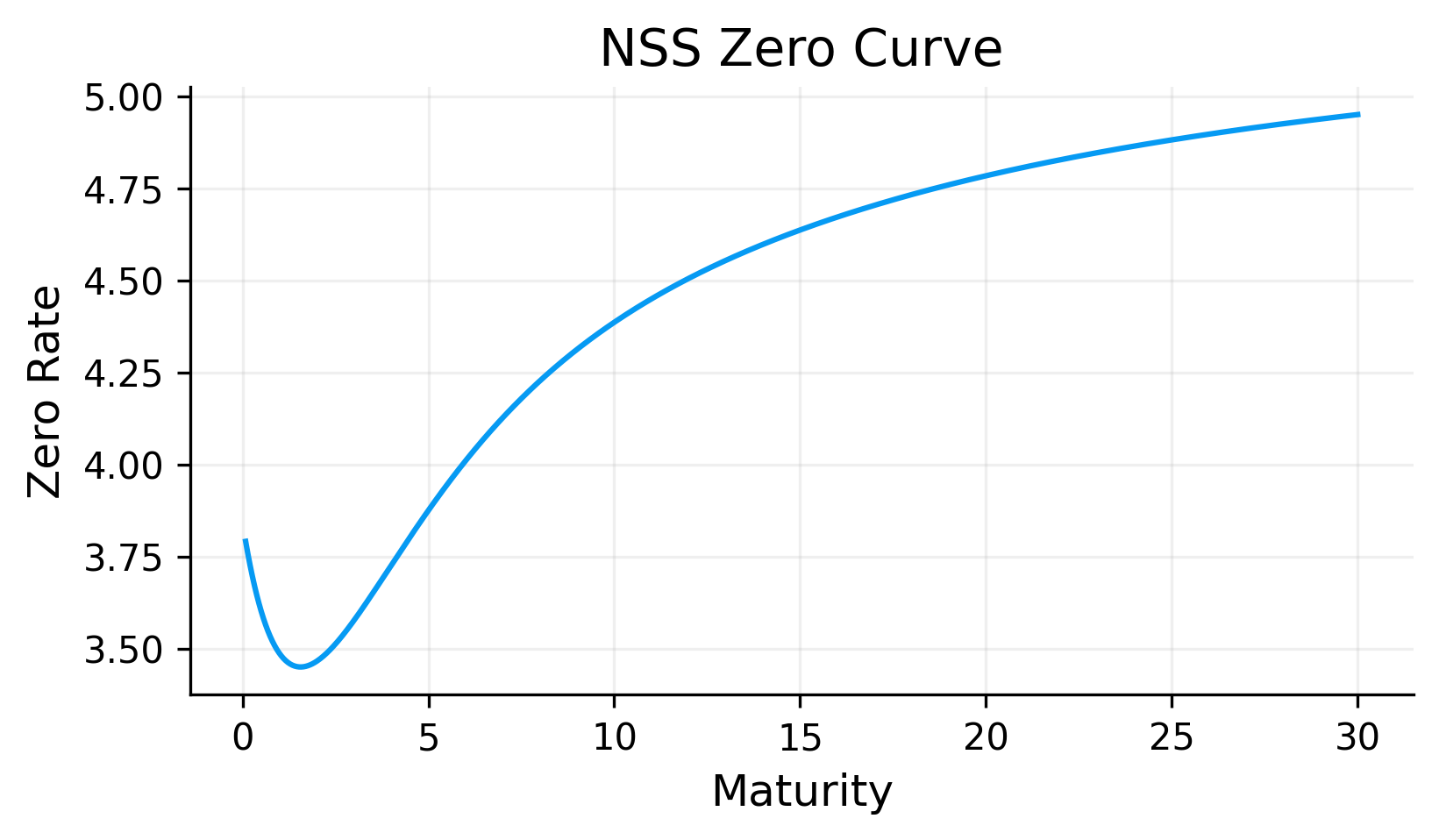

```python
ax_zero = fig.add_subplot(gs[0, 3])
pl.plot_zero_curves(ax_zero, zero_table, title="Zero Curves")
```

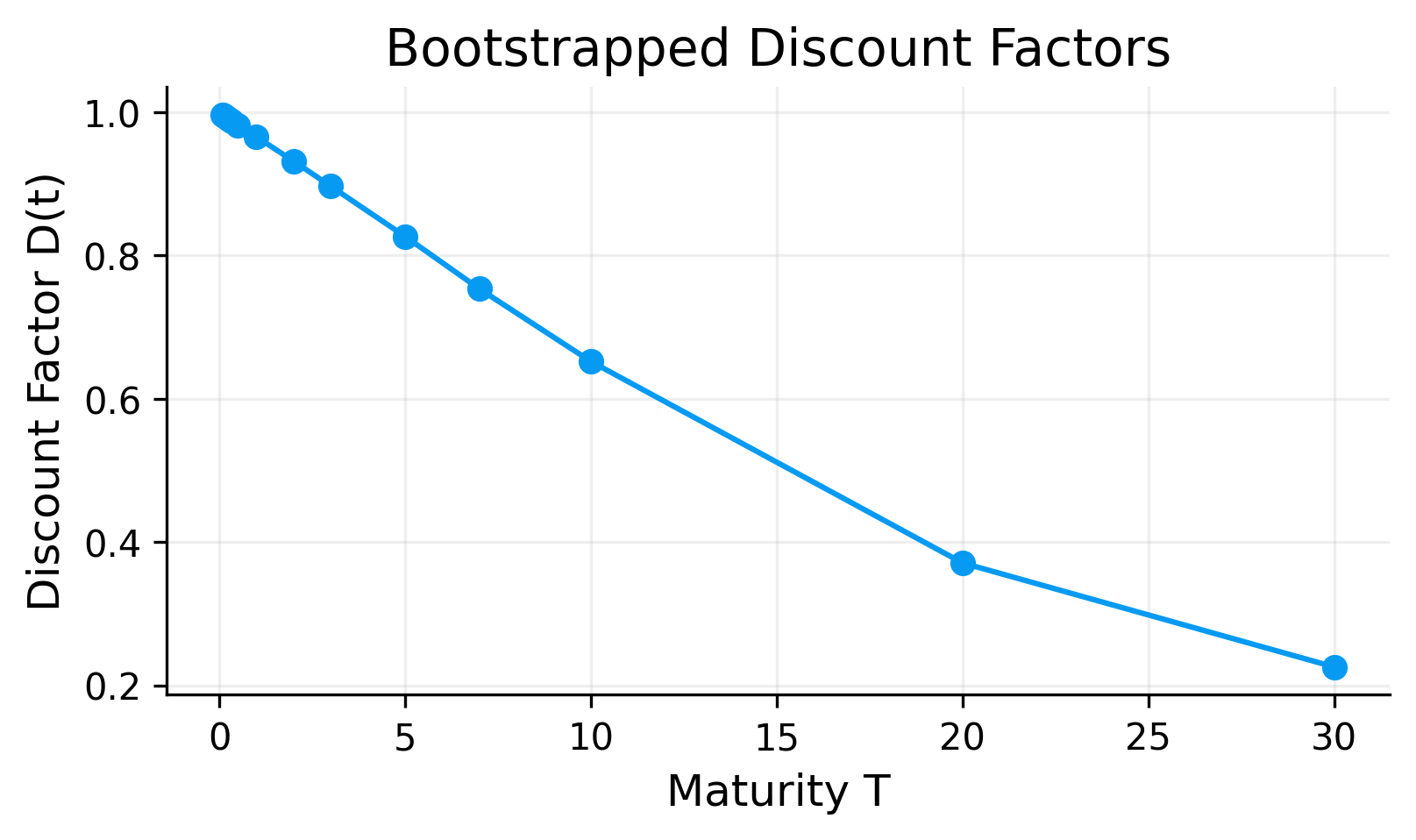

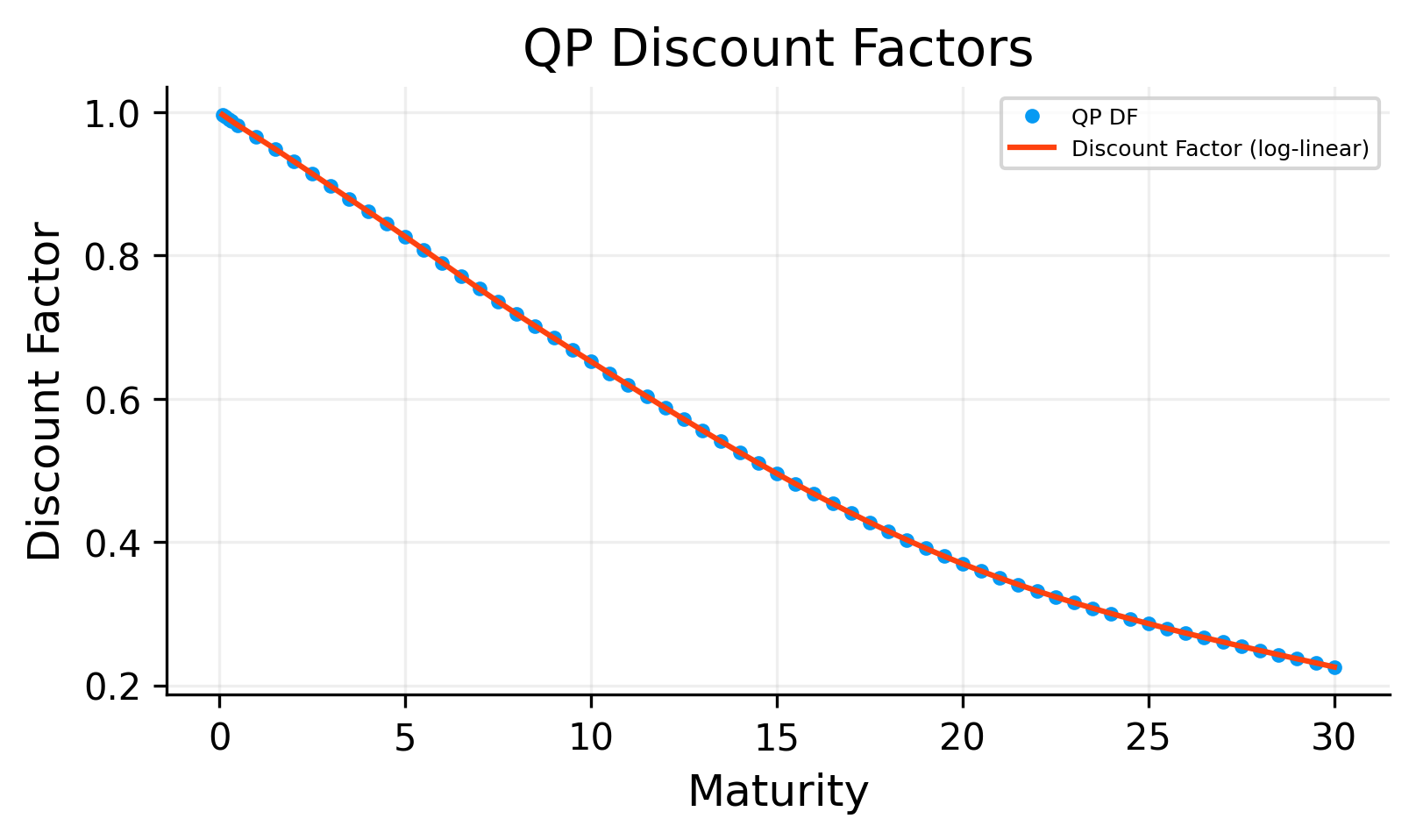

```python
ax_df = fig.add_subplot(gs[1, 0])
pl.plot_discount_curves(ax_df, df_table, title="Discount Curves")
```

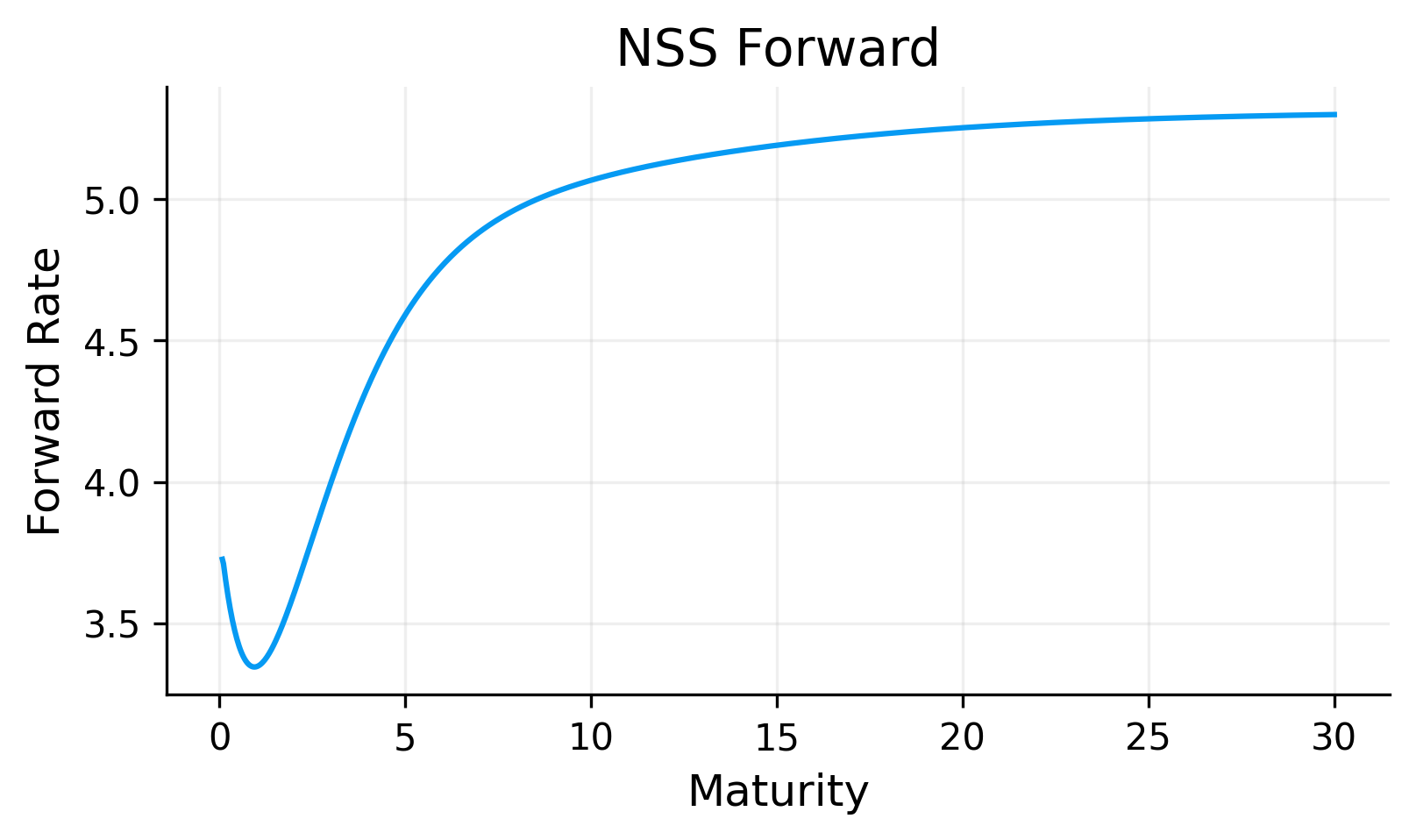

```python
ax_fwd = fig.add_subplot(gs[1, 1])
pl.plot_forward_curves(ax_fwd, fwd_table, title="Forward Curves")

ax_total = fig.add_subplot(gs[1, 2])
pl.plot_total_pv(ax_total, metrics.total_pv, title="Synthetic Book Total PV")

ax_bucket = fig.add_subplot(gs[1, 3])
pl.plot_bucket_pv(ax_bucket, metrics.bucket_pv, title="Bucket PV (Last Date)")

ax_pv01 = fig.add_subplot(gs[2, 0])
pl.plot_risk_metric(ax_pv01, metrics.risk, metric="pv01", title="PV01")

ax_conv = fig.add_subplot(gs[2, 1])
pl.plot_risk_metric(ax_conv, metrics.risk, metric="convexity", title="Convexity")

ax_rmse = fig.add_subplot(gs[2, 2])
pl.plot_rmse_bars(ax_rmse, rmse_sorted, title="RMSE (IS/OOS)")

ax_bond = fig.add_subplot(gs[2, 3])
pl.plot_bond_metric_bar(ax_bond, bond_tbl, metric="pv01", title=f"Bond PV01 ({used_tenor} coupon)")

# One KRD heatmap per method along the bottom row.
krd_axes = [fig.add_subplot(gs[3, i]) for i in range(4)]
ims = []
for ax, method in zip(krd_axes, methods, strict=False):
    im = pl.plot_krd_heatmap(ax, krd_df, method=method, keys=keys, title=f"KRD - {method}")
    if im is not None:
        ims.append(im)

# Shared colorbar, built from the first heatmap's color mapping.
if ims:
    fig.colorbar(ims[0], ax=krd_axes, shrink=0.8, location="right", label="KRD")

fig.suptitle("Project 01 - Yield Curve, Pricing, and Risk (Japan Data)", y=1.02)
plt.show()

display(bond_tbl)
display(rmse_sorted)
```